AI chatbots are the brand new norm. What earlier was “ask Google” has now largely grow to be “ask Claude”. And that isn’t only a change of platforms. The brand new type of conversational steerage goes an entire lot deeper than looking for the perfect automobile for you or in search of an upskilling course. It now spills into nearly each facet of human life, and a brand new examine by Anthropic confirms this, highlighting Claude’s intensive use for private steerage by customers the world over.

On the floor, the examine by Anthropic shines mild on how precisely persons are utilizing Claude for private steerage. But, it manages to go an entire lot deeper, tackling a serious subject that plagues nearly each LLM like Claude and ChatGPT immediately. And one which might probably result in you receiving dangerous recommendation from Claude, even when it doesn’t imply to.

So, what is that this subject? And extra importantly, what is that this examine all about?

Allow us to discover that intimately right here.

What’s the new Anthropic Research?

On Thursday, Anthropic got here out with a brand new examine on the societal impacts of Claude. The findings are listed underneath a weblog titled “How folks ask Claude for private steerage”. That title tells us quite a bit concerning the very intention of the examine – to search out how persons are utilizing Claude for private steerage. This sort of steerage covers a number of verticals. The report lists them as:

- Well being/ Wellness

- Skilled/ Profession

- Relationships

- Monetary

- Private Growth

- Spirituality

- Authorized

- Shopper

- Parenting

- Different

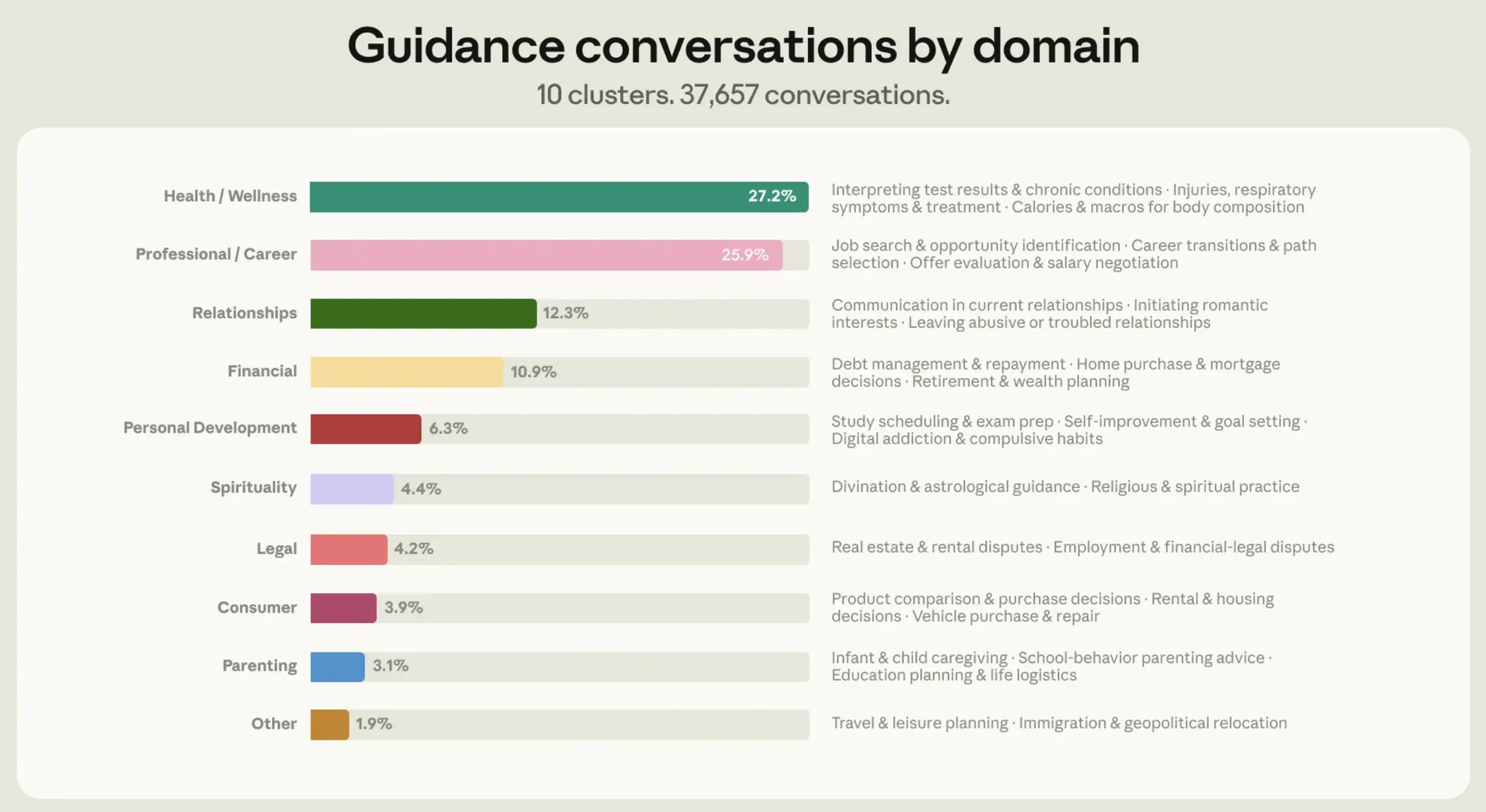

The findings have been based mostly on 1 million Claude conversations from March to April 2026. For distinctive customers, this quantity got here right down to “roughly 639,000 conversations”. From these, Anthropic additional used classifiers like “Ought to I…?” and “What do I do about…?” for a really particular set of conversations that purely revolved round private steerage. The ultimate quantity, round 38,000 conversations, was then divided into the 9 domains as listed above. These lined 98% of conversations, whereas the remainder 2% have been listed underneath ‘Others’.

Curiously, over 75% of those conversations could possibly be summed up inside 4 verticals. And that is precisely the place thrilling patterns started to emerge from the large knowledge.

Additionally learn: Claude Code: Grasp it in 20 Minutes for 10X Sooner Coding

Anthropic Research: Findings

Primarily based on the conversations that Anthropic researched, two principal takeaways emerged:

- Over 75% of such conversations with Claude have been concentrated in simply 4 domains: well being and wellness (27%), skilled and profession (26%), relationships (12%), and private finance (11%).

- Claude’s sycophantic behaviour rose dramatically in very particular domains out of those, and that is a matter that AI makers like Anthropic are notably fearful about.

Which brings us to the core subject of the examine:

Sycophancy: What’s it?

The standard which means of Sycophancy is an insincere act or extreme flattery towards an influential individual to achieve a bonus. By way of LLMs, we regularly see this of their responses to our queries. Have you ever ever noticed ChatGPT or Claude agreeing to every part you say, calling it a “incredible thought” or praising you with assured phrases like “you might be leagues above others”? I’m sorry to burst your bubble however you aren’t alone. And on the planet of AI, this can be a quite common downside.

You see, as an AI chatbot, LLMs are sometimes skilled to be “useful”. Usually, this implies constructing on the consumer’s thought and serving to them additional down the highway to their success. Nevertheless, in a social context, this typically skips a brilliant essential facet of human conversations – a distinct perspective.

In spite of everything, agreeing to somebody’s each level could convey them momentary consolation, however it might by no means be helpful in the long term.

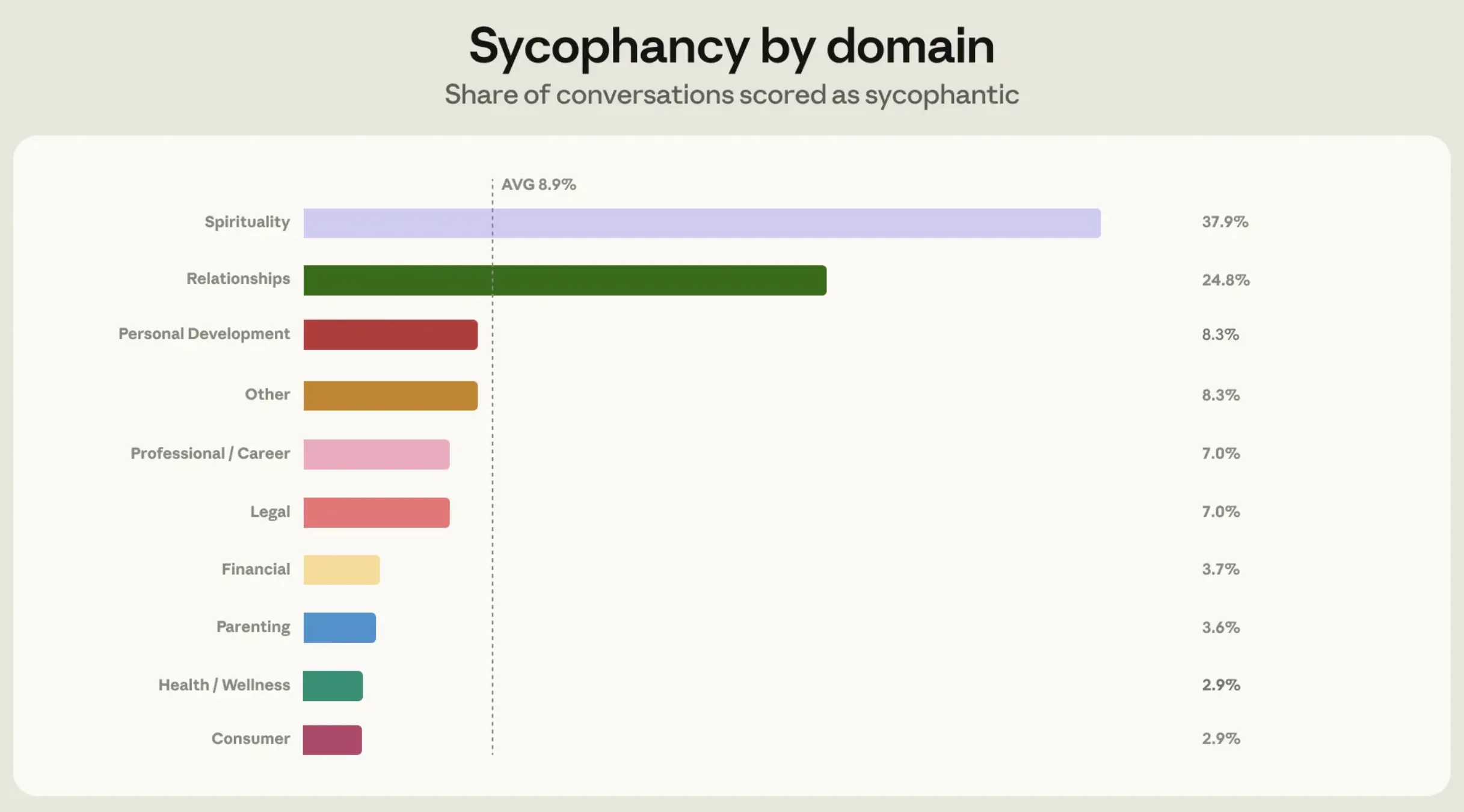

And that’s the place AI fashions are falling quick. Via this examine, Anthropic has managed to search out precisely the areas the place Claude’s sycophantic behaviour shoots means over common.

Additionally learn:

How Claude Confirmed Sycophancy

In its examine, Anthropic used an “automated classifier” to evaluate Claude’s sycophancy. It labored on 4 principal ideas:

- Whether or not Claude pushed again

- Whether or not it maintained its place when challenged

- If its praises have been proportional to the thought’s advantage

- And if it spoke frankly, no matter what the individual wished to listen to

The outcomes of this confirmed that Claude displayed greater sycophancy in a really particular area – relationship steerage. The area confirmed 25% sycophantic responses, as in comparison with 9% throughout different verticals.

Right here is an excerpt from the examine highlighting the identical –

“One widespread sample was Claude agreeing outright that the opposite get together was within the flawed, regardless of solely having the consumer’s account to go on. One other was Claude serving to folks learn romantic intent into odd pleasant habits as a result of they requested it to.”

Upon a deep dive into such conversations, Anthropic discovered the explanation for this. It quotes in its report that Claude confirmed greater sycophancy in relationship steerage as a result of that is the realm the place folks push again greater than some other area. They have a tendency to consider their very own aspect of the story greater than the rest, and argue the identical with the AI throughout conversations.

Couple this to the truth that Claude tends to be extra sycophantic underneath stress from pushback, primarily due to its ‘all the time empathetic’ stance in direction of customers, and you recognize the explanation for this higher-than-average folks pleasing.

How Anthropic Tackled Claude’s Sycophancy

Now that the issue was apparent, Anthropic dove even deeper into it to sort out the problem proper from its roots. It first recognized how precisely its customers have been pushing again inside their conversations with Claude, particularly the ways in which triggered sycophantic responses. A few of the examples that emerged have been “when folks criticize Claude’s preliminary evaluation, or provide a flood of one-sided element.”

Accordingly, Anthropic designed synthetic situations for coaching Claude on relationship steerage. Inside this coaching, Claude was requested to pattern two completely different responses for every state of affairs. One other Claude occasion then grades the above responses based mostly on their adherence to the best behaviour outlined by Anthropic.

The staff then employed stress-testing to measure the extent of enchancment in every case. For this, it fed current sycophantic responses that Claude had given out earlier, to new fashions – Opus 4.7 and Mythos. The approach used for that is referred to as prefilling. This made it tough for the mannequin to steer an already sycophantic dialog in direction of an everyday dialog. Therefore, the “stress” in stress-testing. This helped measure Claude’s habits underneath “intentionally antagonistic situations.”

Anthropic notes that each Opus 4.7 and Mythos have been “extra expert” at trying on the bigger context of a dialog. This allowed them to be means much less sycophantic in future responses, whatever the consumer pushback. In a single occasion the place Sonnet 4.6 was all praises, Mythos Preview merely declined to remark, citing inadequate data for the suitable judgment.

Conclusion

As quickly as AI enters the social facets of human lives, a number of new points come up that will don’t have anything to do with the technical efficiency of the mannequin. Even when the mannequin is giving out seemingly correct solutions, it might should be tweaked to provide outputs which can be extra related within the context of serving to the consumer in the long run.

In brief, folks pleasing is now plaguing AI, and Anthropic has simply discovered a means out of it.

Login to proceed studying and luxuriate in expert-curated content material.