Building production-ready Apache Flink applications requires learning a complex ecosystem. The learning curve is steep for newcomers, and even experienced Flink developers encounter complexity when scaling applications or troubleshooting production issues. With the new Kiro Power and Agent Skill for Amazon Managed Service for Apache Flink, you can get AI-assisted guidance for building, improving, and migrating streaming applications directly in your development environment, with recommendations that are grounded in best practices.

The Managed Service for Apache Flink Kiro Power and Agent Skill helps you navigate challenges across the Flink application lifecycle. For new development, the tool provides contextual guidance on application architecture, state management patterns, and connector selection. For existing applications, it analyzes your code to identify performance bottlenecks, reliability risks, and opportunities for improvement. If you’re upgrading from Apache Flink 1.x to 2.x, it detects compatibility issues and provides targeted refactoring steps to modernize your applications.

In this post, we walk through installing the Power and Skill, using Amazon Kinesis Data Streams to build a Kinesis Data Stream-to-Kinesis Data Stream streaming pipeline, and migrating an existing application to Flink 2.2. You can follow along with this use case to see how the Managed Service for Apache Flink Kiro Power can help you build a resilient, performant application grounded in best practices.

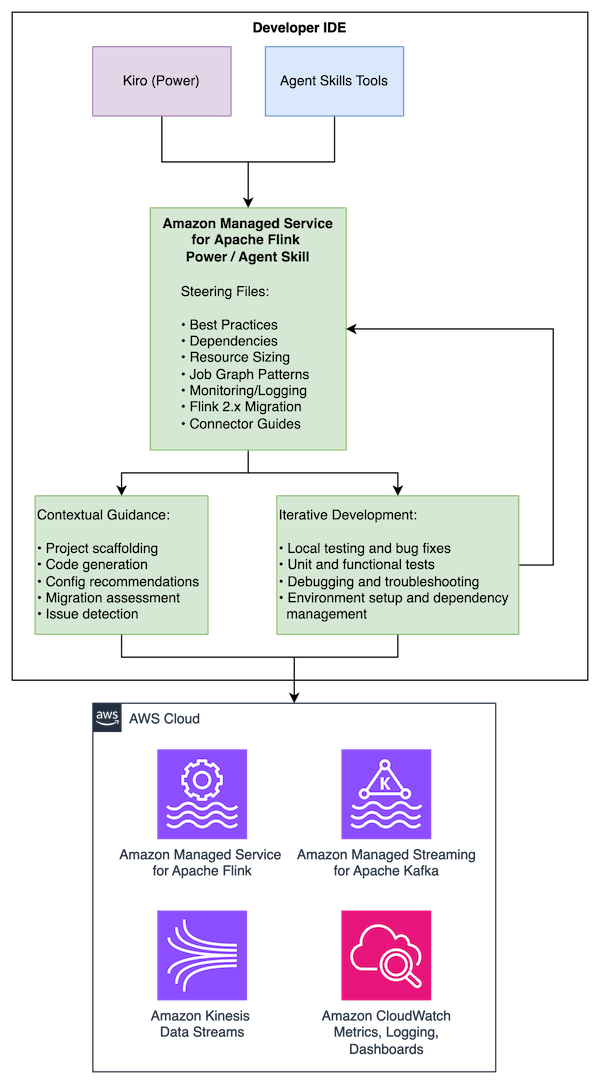

Solution overview

The Managed Service for Apache Flink Power/Skill works across multiple AI development tools, providing the same comprehensive guidance in each:

- Kiro: Installs as a Power that automatically activates for Flink-related development activities

- Cursor and Claude Code: Installs as an Agent Skill following the open Agent Skills standard

- Other compatible agents: Compatible with tools supporting the Agent Skills specification

The Power/Skill provides guidance across the development lifecycle:

- Best practices for Managed Service for Apache Flink application development

- Maven dependency management and project structure

- Resource optimization, including KPU sizing, parallelism tuning, and checkpointing

- Job graph structure patterns and anti-patterns

- Amazon CloudWatch monitoring and logging configuration

- Flink 1.x to 2.2 migration guidance with state compatibility analysis

- Connector-specific guidelines
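To make the KPU sizing item concrete, the following is a minimal arithmetic sketch rather than output from the Power/Skill. It assumes the relationship described in the Managed Service for Apache Flink documentation, where the KPUs needed to run a job equal the application parallelism divided by the ParallelismPerKPU setting, and that one additional KPU per application is billed for orchestration. The class and method names are hypothetical, so check the current service documentation before relying on the numbers.

```java
public class KpuSizingSketch {

    /**
     * KPUs needed to run the job: parallelism / parallelismPerKpu, rounded up.
     * Both arguments mirror application settings; the method name is hypothetical.
     */
    static int processingKpus(int parallelism, int parallelismPerKpu) {
        if (parallelism < 1 || parallelismPerKpu < 1) {
            throw new IllegalArgumentException("parallelism and parallelismPerKpu must be >= 1");
        }
        // Integer ceiling division.
        return (parallelism + parallelismPerKpu - 1) / parallelismPerKpu;
    }

    /** Assumption: the service bills one extra KPU per application for orchestration. */
    static int billedKpus(int parallelism, int parallelismPerKpu) {
        return processingKpus(parallelism, parallelismPerKpu) + 1;
    }

    public static void main(String[] args) {
        int parallelism = 8;       // illustrative value
        int parallelismPerKpu = 4; // illustrative value
        System.out.println("Processing KPUs: " + processingKpus(parallelism, parallelismPerKpu));
        System.out.println("Billed KPUs:     " + billedKpus(parallelism, parallelismPerKpu));
    }
}
```

Raising ParallelismPerKPU packs more parallel subtasks onto each KPU, which lowers the KPU count but leaves each subtask with a smaller share of CPU and memory.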

The content is maintained in a single repository with use case-specific entry points that are dynamically loaded depending on your needs.

Prerequisites

To use the tool, you need:

- A development machine running macOS, Linux, or Windows with Java 11 or later (Java 17 for Flink 2.2) and Apache Maven installed

- One of the following AI development tools:

  - Kiro IDE

  - Cursor

  - Claude Code

  - Other Agent Skills-compatible tools

- Basic knowledge of Java and stream processing concepts (helpful but not required)

- An AWS Identity and Access Management (IAM) role configured with access to create and run Managed Service for Apache Flink applications, create Amazon Simple Storage Service (Amazon S3) buckets for Flink application dependencies, create Kinesis Data Streams for streaming, and create IAM roles (required if deploying an application)

Installation

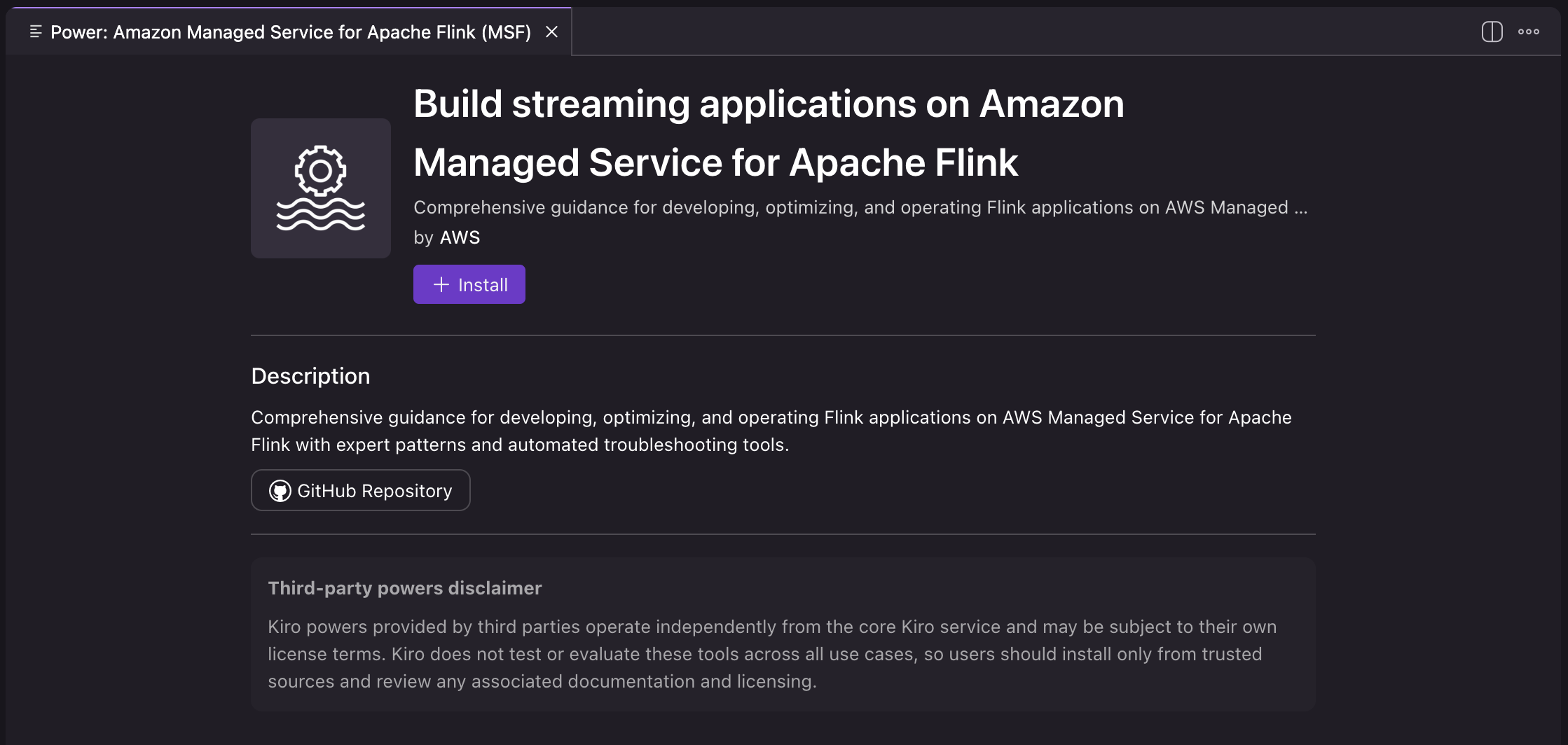

Installing as a Kiro Power

- Open Kiro IDE.

- Open Amazon Managed Service for Apache Flink and select Open in Kiro.

- Choose Install to install the power.

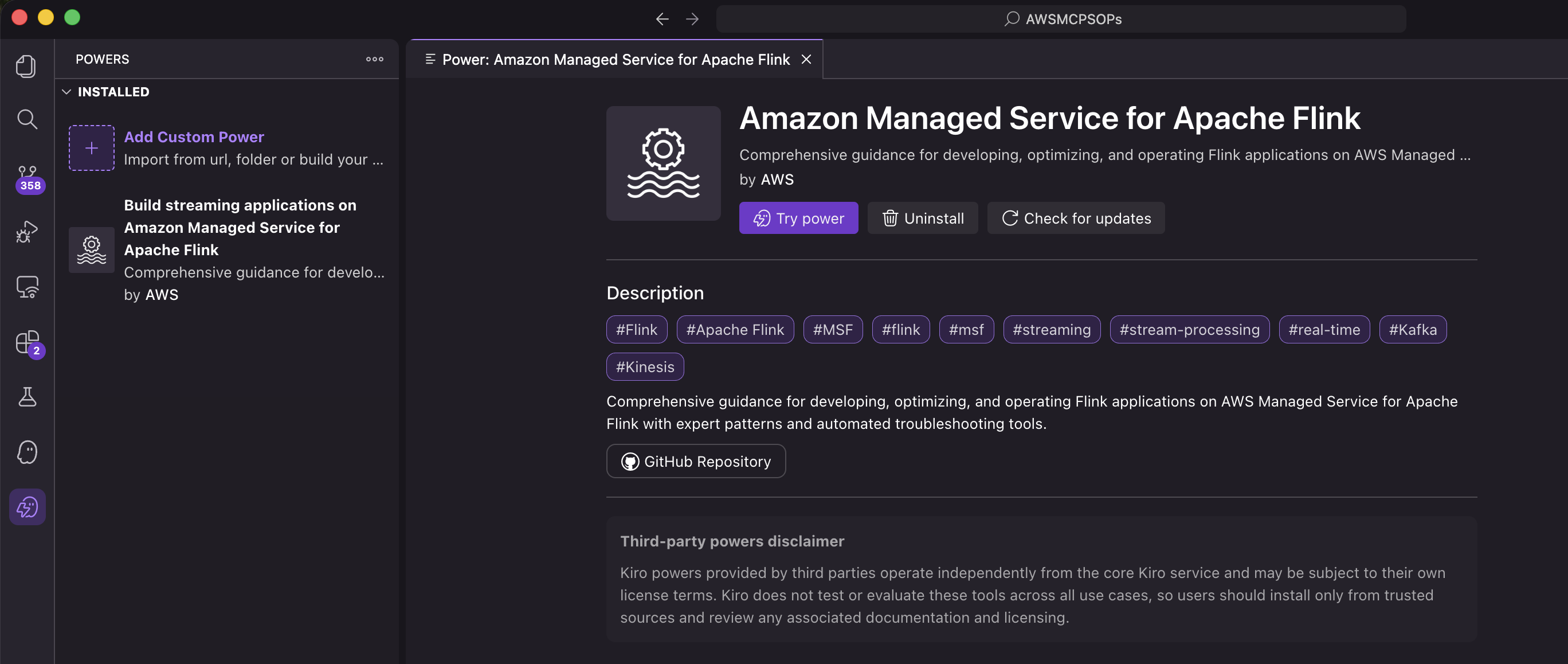

- Verify that the power is listed in the installed powers in the Kiro IDE.

The Power is now installed and automatically activates when you work on Flink-related development activities.

Installing as an Agent Skill

Agent Skills are discovered automatically by compatible tools through the SKILL.md file. Installation varies by tool:

Per-project installation (available in a single project):

Personal installation (available across projects):

To verify the installation, interact with the skill in your preferred tool. In Claude Code, you can invoke it with /flink. In Cursor, type / in Agent chat and search for flink. For more information about Agent Skills, see the Agent Skills documentation.

Example: Building a Kinesis-to-Kinesis streaming pipeline

Rather than listing best practices, the Power/Skill actively guides you through making the right architectural decisions at each stage of development.

The following walkthrough demonstrates building a Flink application that reads from Amazon Kinesis Data Streams, analyzes events, and writes to another Kinesis stream. To follow along, run the same prompts in your Kiro IDE or other development tool. In the following prompts, we focus on local development and don’t create AWS resources. However, if you prompt the agent to create and deploy AWS resources, they will incur additional costs.

Starting the conversation

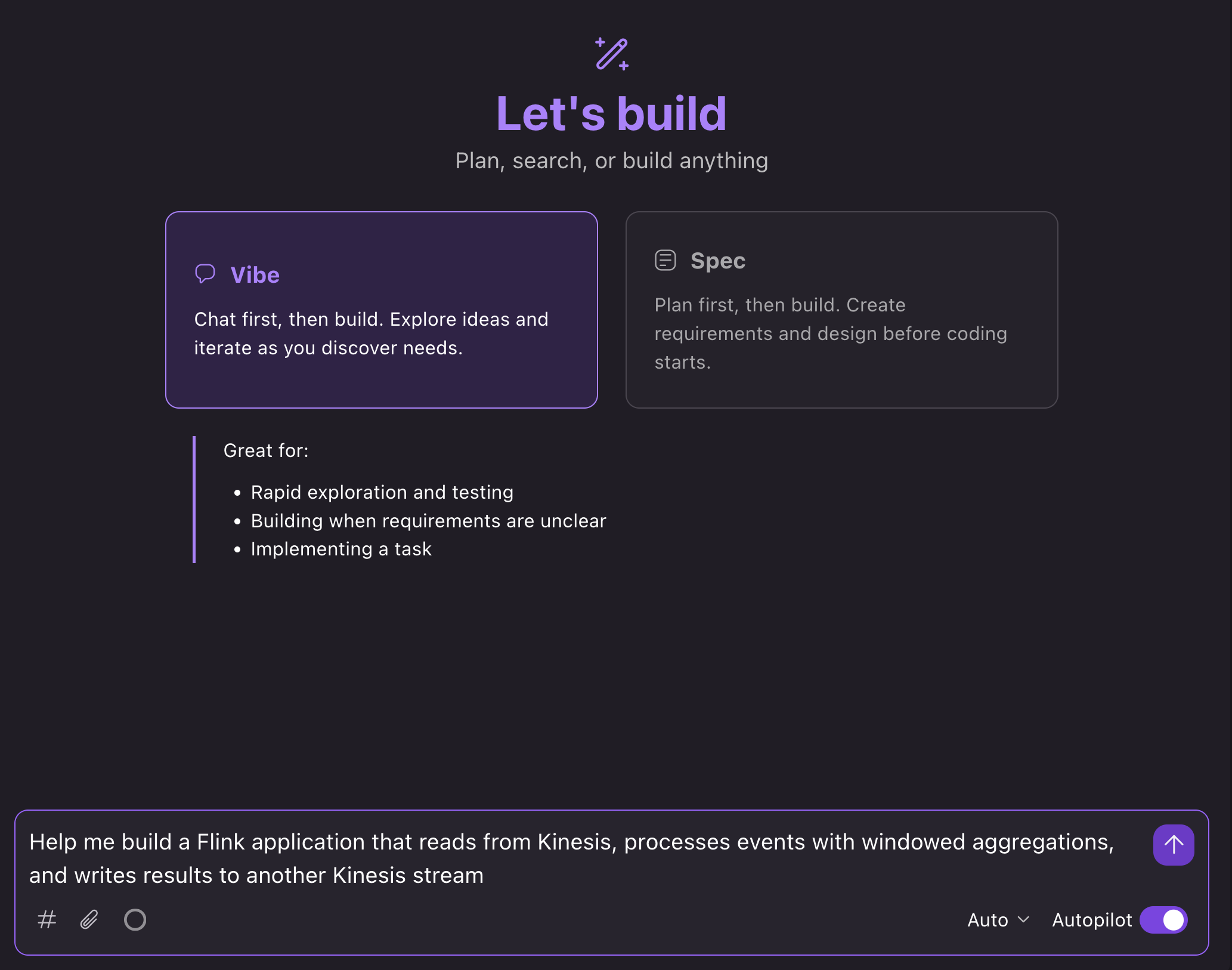

In the Kiro IDE, we can open a new chat in Vibe mode and prompt: “Help me build a Flink application that reads from Kinesis, processes events with windowed aggregations, and writes results to another Kinesis stream”:

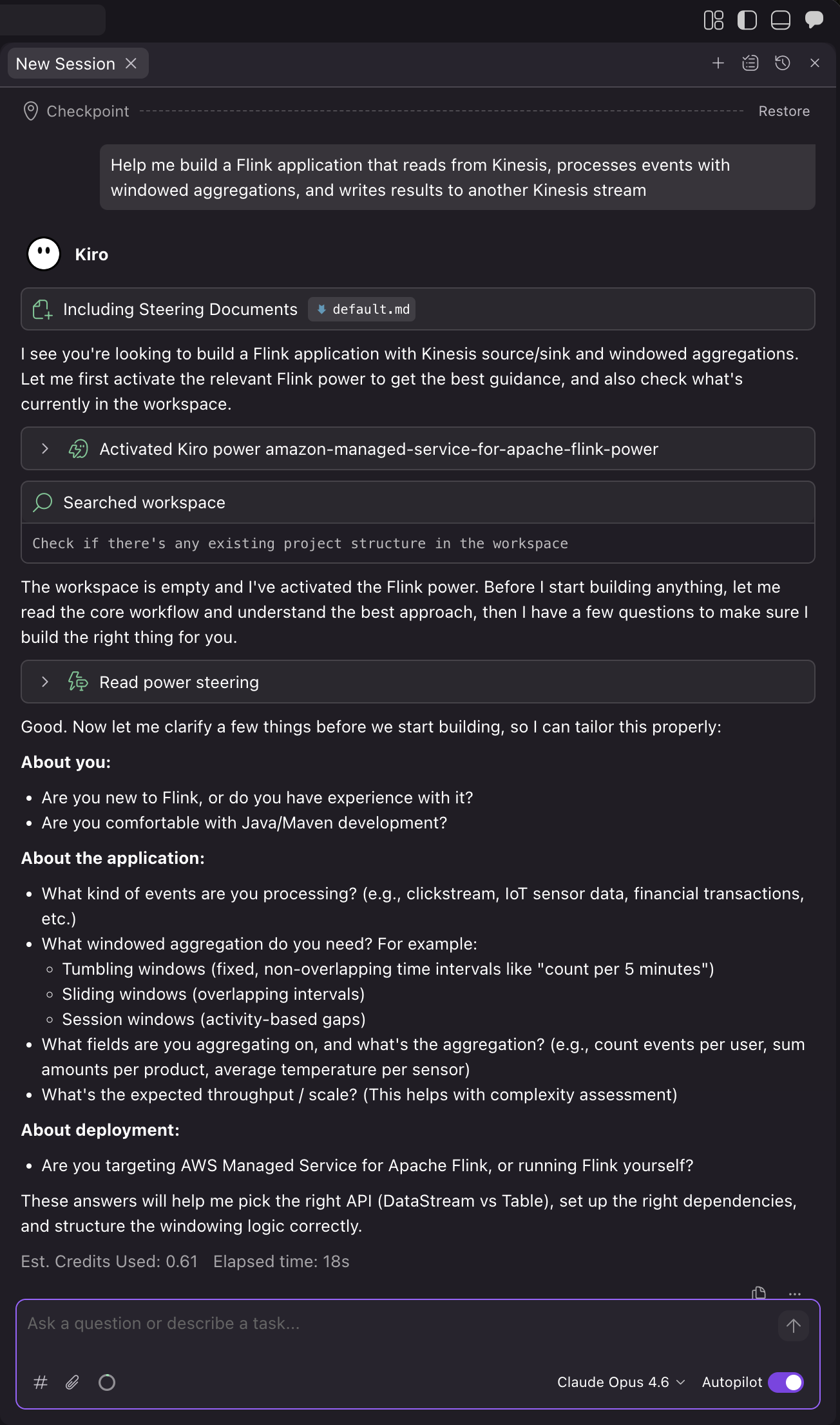

What happens next

The AI assistant loads relevant guidance and walks you through the development process:

1. Confirm project requirements and details

Kiro automatically loads the Power based on the context of your prompt. The assistant then asks you questions about your use case to make sure that it builds the right application for your needs:

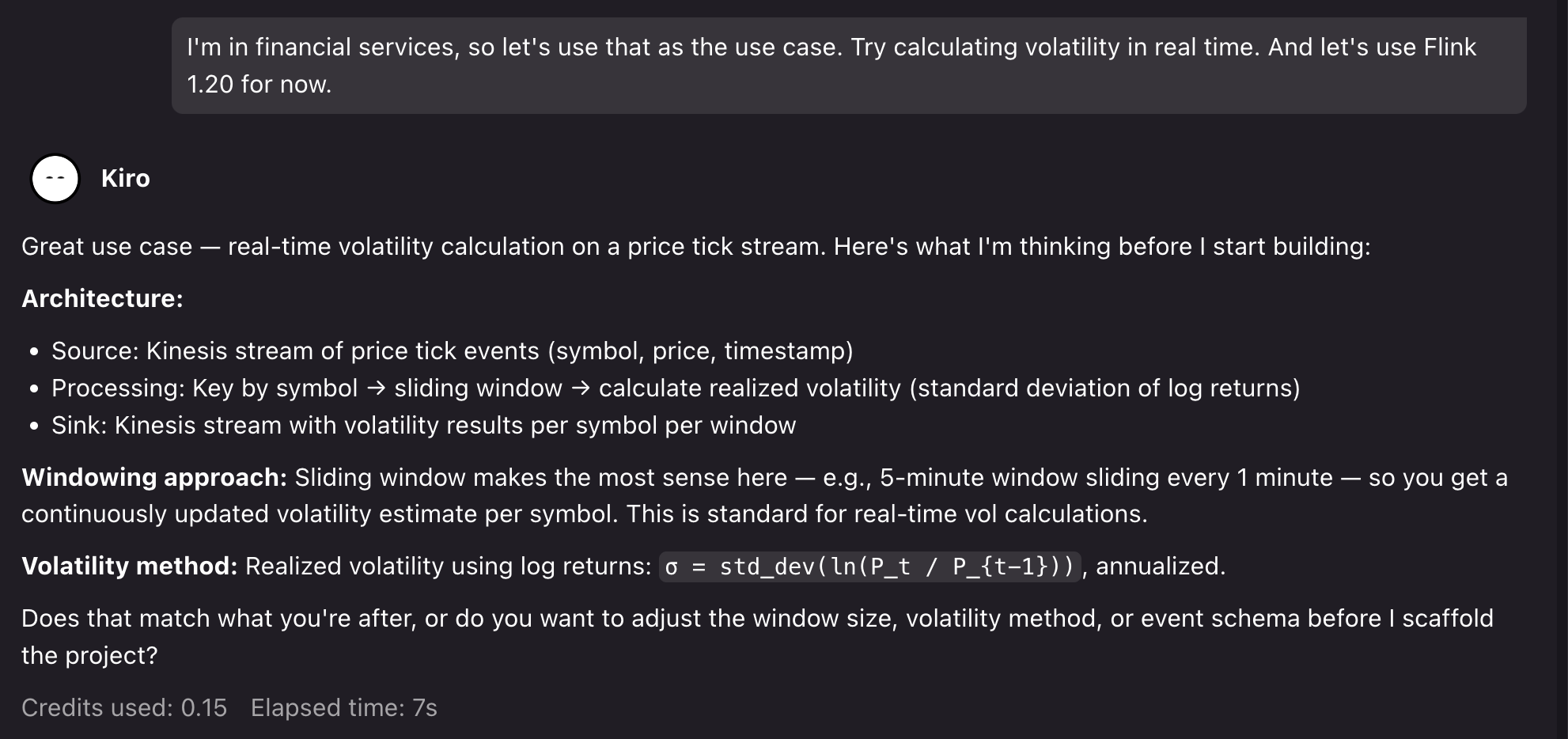

For the demo, we can prompt for a financial services use case: “I’m in financial services, so let’s use that as the use case. Try calculating volatility in real-time. And let’s use Flink 1.20 for now.”

Kiro then confirms its assumptions and asks to proceed:

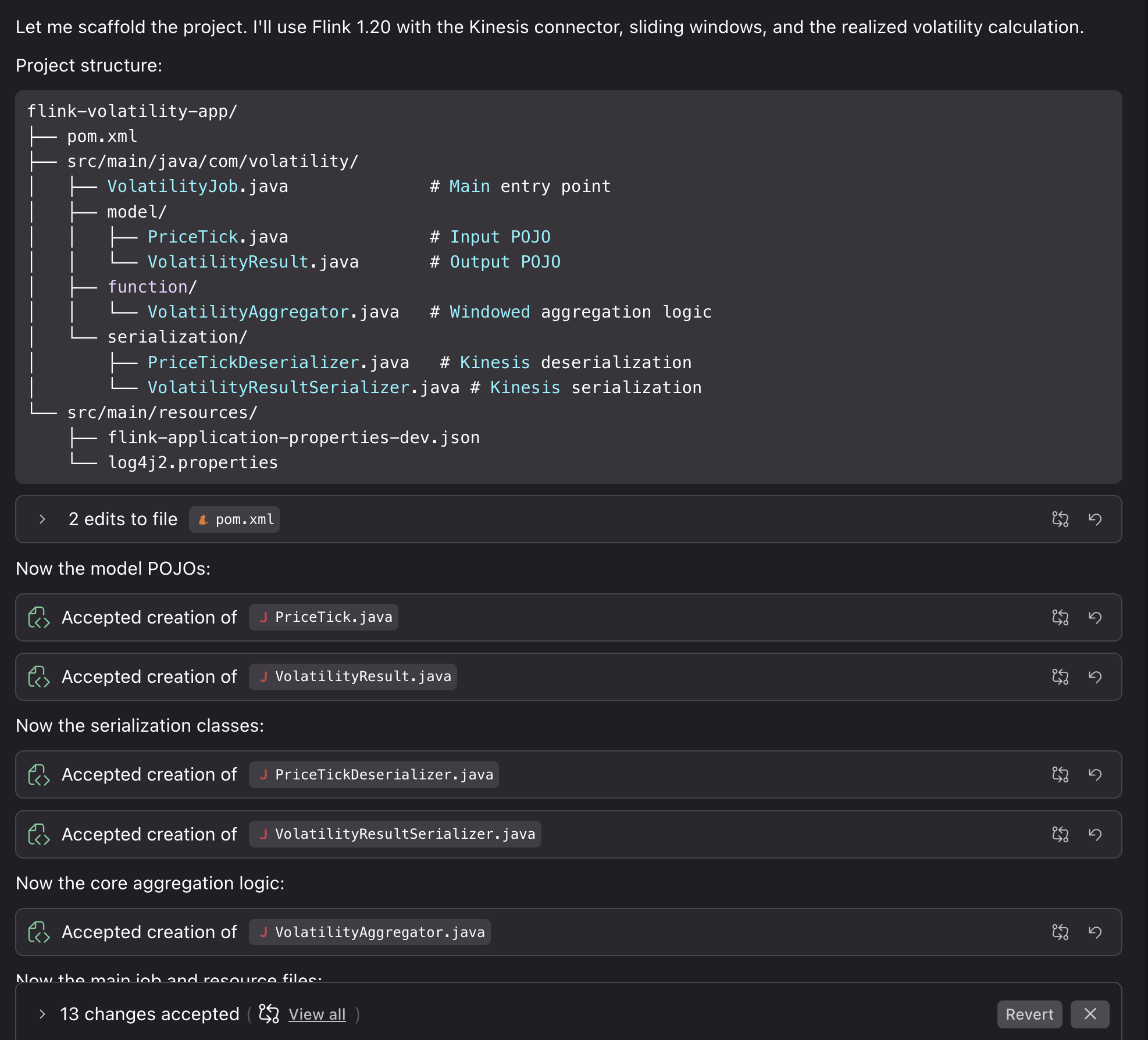

2. Project setup

After we confirm, Kiro generates a project with Flink 1.20 dependencies, Kinesis connectors, and proper scope configuration for Managed Service for Apache Flink deployment. The assistant creates the application structure with proper configuration separation between local development and Managed Service for Apache Flink service-level settings. Then, it creates a Kinesis source with proper deserialization, a sink with a partitioning strategy, and windowed aggregation logic with proper state management, TTL configuration, and error handling.

Kiro also compiles the code to verify that it builds correctly. We can then proceed by asking Kiro to help us with running the application locally for testing.
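The generated project itself is not reproduced in this post. To give a feel for the kind of calculation a windowed volatility aggregation performs, the following is a minimal, Flink-free sketch that computes the sample standard deviation of log returns for the prices collected in one window. The class name, method name, and the choice of log returns are illustrative assumptions, not the code that Kiro produced.

```java
public class VolatilitySketch {

    /**
     * Sample standard deviation of log returns for the prices in one window.
     * Assumes prices are positive and ordered by event time.
     * Returns 0.0 when the window holds fewer than two returns.
     */
    static double volatility(double[] prices) {
        int returnCount = prices.length - 1;
        if (returnCount < 2) {
            return 0.0;
        }
        double[] logReturns = new double[returnCount];
        double sum = 0.0;
        for (int i = 0; i < returnCount; i++) {
            logReturns[i] = Math.log(prices[i + 1] / prices[i]);
            sum += logReturns[i];
        }
        double mean = sum / returnCount;
        double squaredDeviations = 0.0;
        for (double r : logReturns) {
            squaredDeviations += (r - mean) * (r - mean);
        }
        // Divide by (n - 1) for the sample, not population, variance.
        return Math.sqrt(squaredDeviations / (returnCount - 1));
    }

    public static void main(String[] args) {
        // Made-up prices standing in for one window of ticks for a single symbol.
        double[] window = {100.0, 101.0, 99.5, 100.2};
        System.out.println("Window volatility: " + volatility(window));
    }
}
```

In a Flink job, logic like this would typically sit inside a window function keyed by symbol, with Flink handling windowing, state, and lateness.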

3. Testing the project locally

You can run the application locally to test the results. We can prompt: “Can we run this locally using something like LocalStack to test deploying the job and also see some example results?”

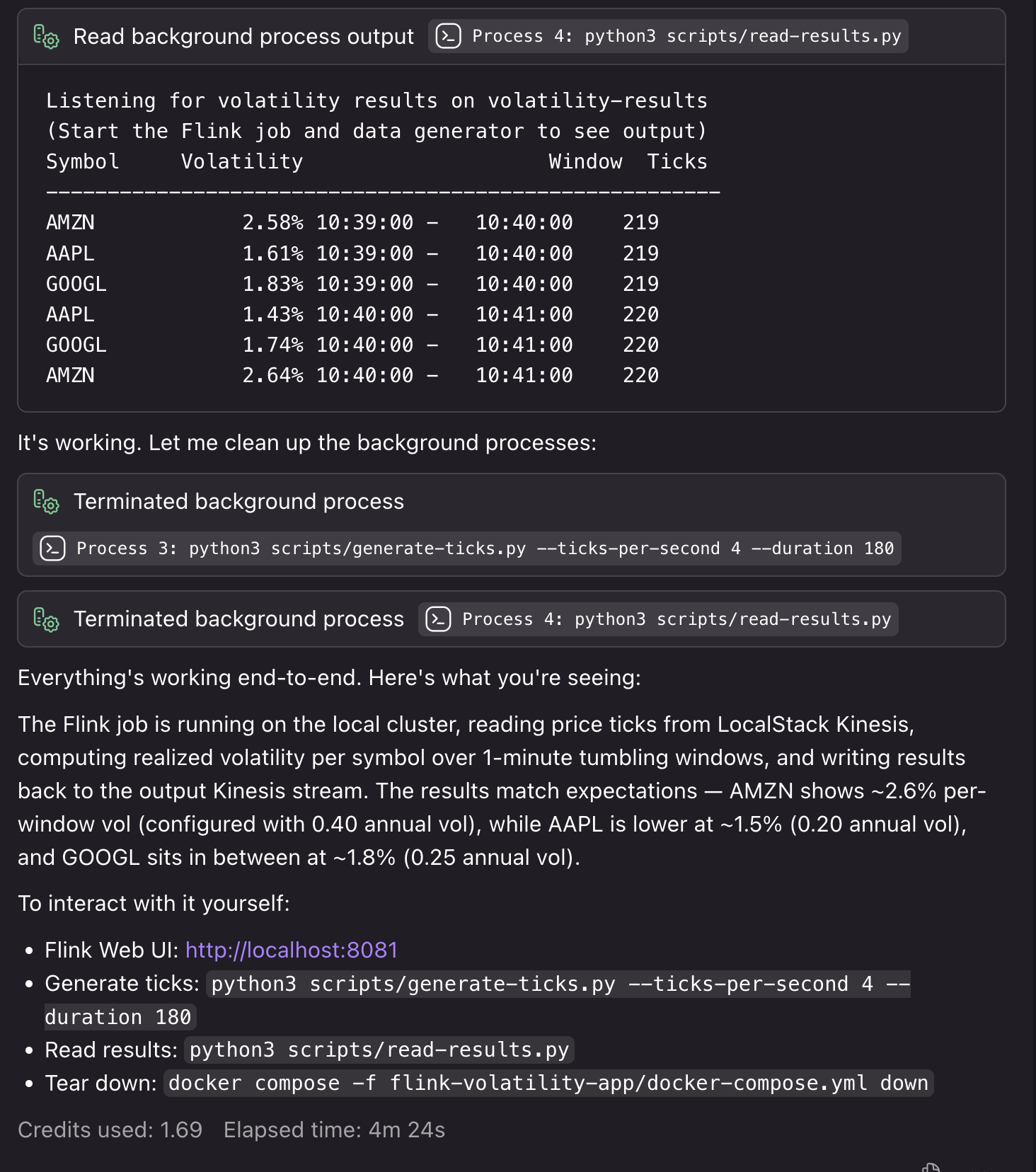

Kiro creates the required Docker resources, testing scripts, and deployment steps to run the application locally with synthetic sources. If it encounters bugs or detects issues during the local testing process, it fixes them so that your deployment runs smoothly:

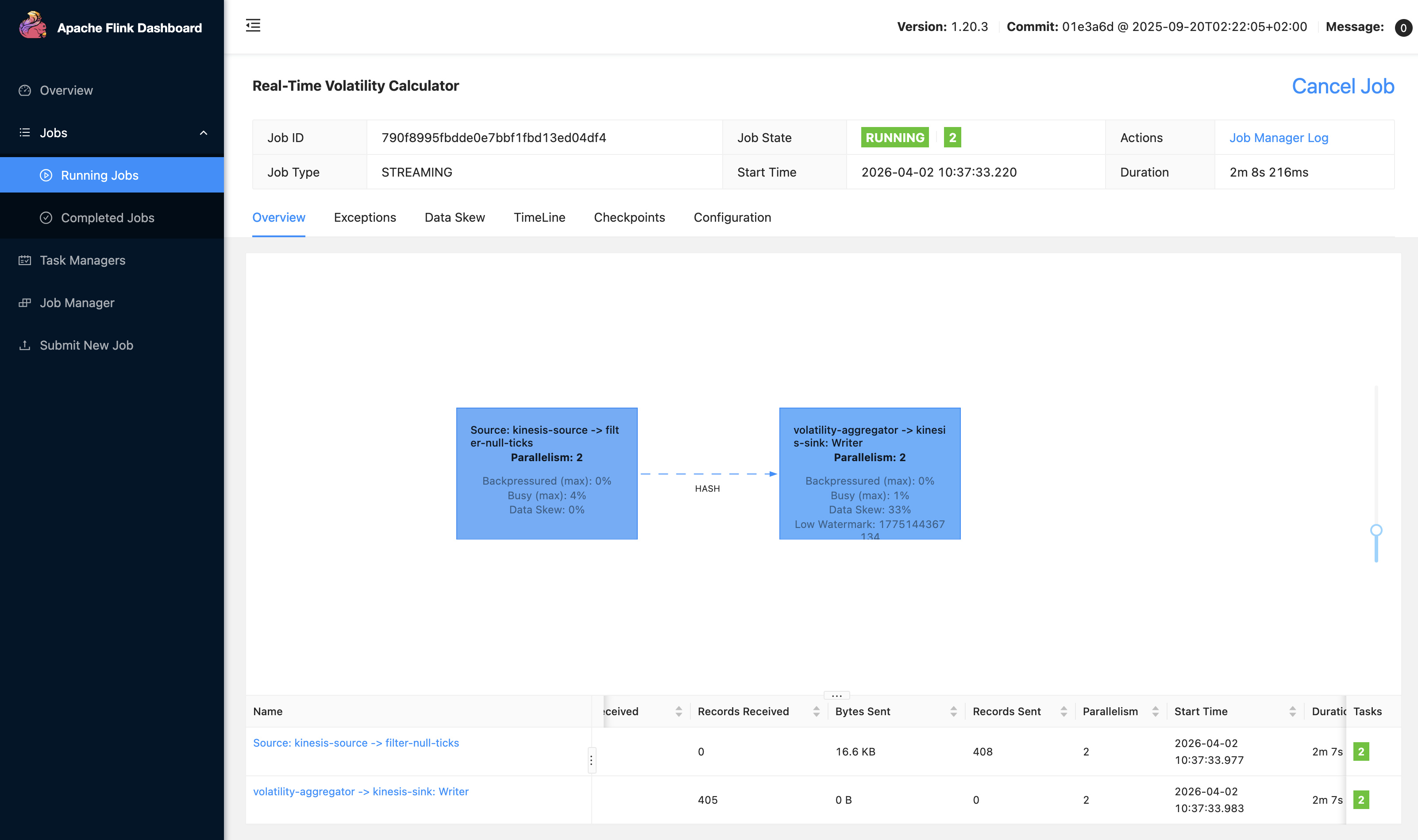

We can also access our local Flink UI to view our application:

4. Deploying the application to Managed Service for Apache Flink

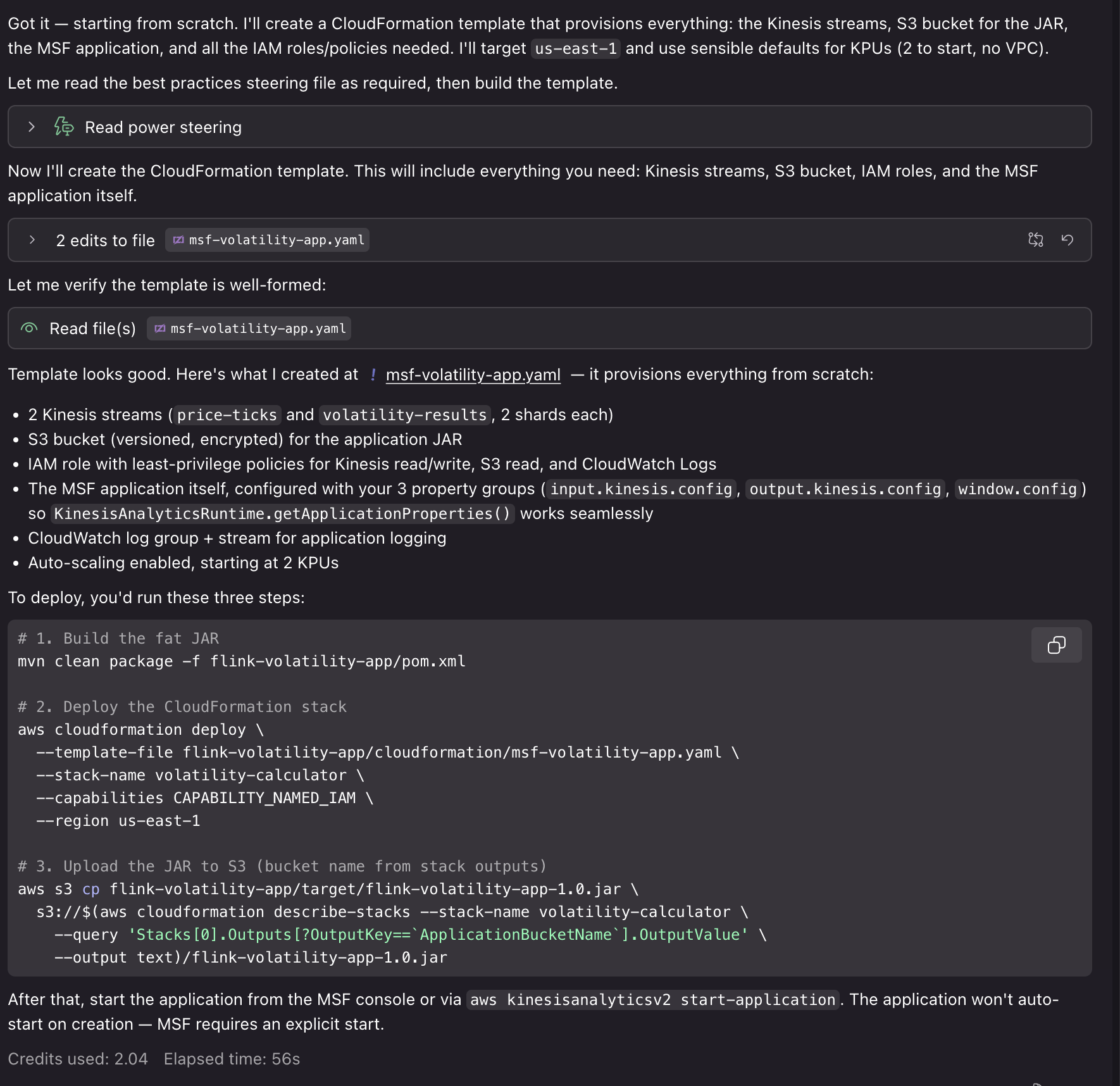

Now that our application is running and producing results end-to-end, we can use the Power for other tasks. For example, you can get guidance on KPU allocation and parallelism settings based on your expected throughput, configure monitoring with CloudWatch metrics, logging, and dashboards for operational visibility, or set up infrastructure as code (IaC) for deploying in Managed Service for Apache Flink. We can prompt: “This is great! Can you help me deploy this application to Managed Service for Apache Flink? I’d like to use CloudFormation for deployment.”

Using the generated AWS CloudFormation templates and deployment scripts, we can deploy our application to AWS with associated resources for Kinesis Data Streams, Amazon S3 buckets for application JAR files, CloudWatch log groups, and IAM roles. Deploying these resources requires IAM credentials with relevant permissions and will incur cost for the associated resource usage.

In a traditional workflow, you build your application, deploy to Managed Service for Apache Flink, then discover performance issues or configuration problems in production. You spend time debugging checkpoint failures, serialization errors, or resource bottlenecks.

With the Power/Skill, the AI assistant catches these issues during development. When you need complex aggregation and processing logic, it helps you implement it in a way that uses resources efficiently with Flink’s scaling model. When you create an application bug that could cause a crash in production, it helps you identify it early with local end-to-end testing. The Power is configured with guidance and best practices to help with the development process from start to finish.

Example: Migrating to Flink 2.2

The Managed Service for Apache Flink Kiro Power and Agent Skill provide contextual advice specific to your situation. For new developers, the tool walks through the complete workflow from project setup to deployment, explaining Managed Service for Apache Flink-specific concepts along the way. For migration projects, it analyzes your existing code for Flink 2.2 compatibility issues and provides targeted refactoring guidance. The following example shows how the tool helps with the complex task of migrating from Flink 1.x to 2.2.

1. Assessing migration compatibility

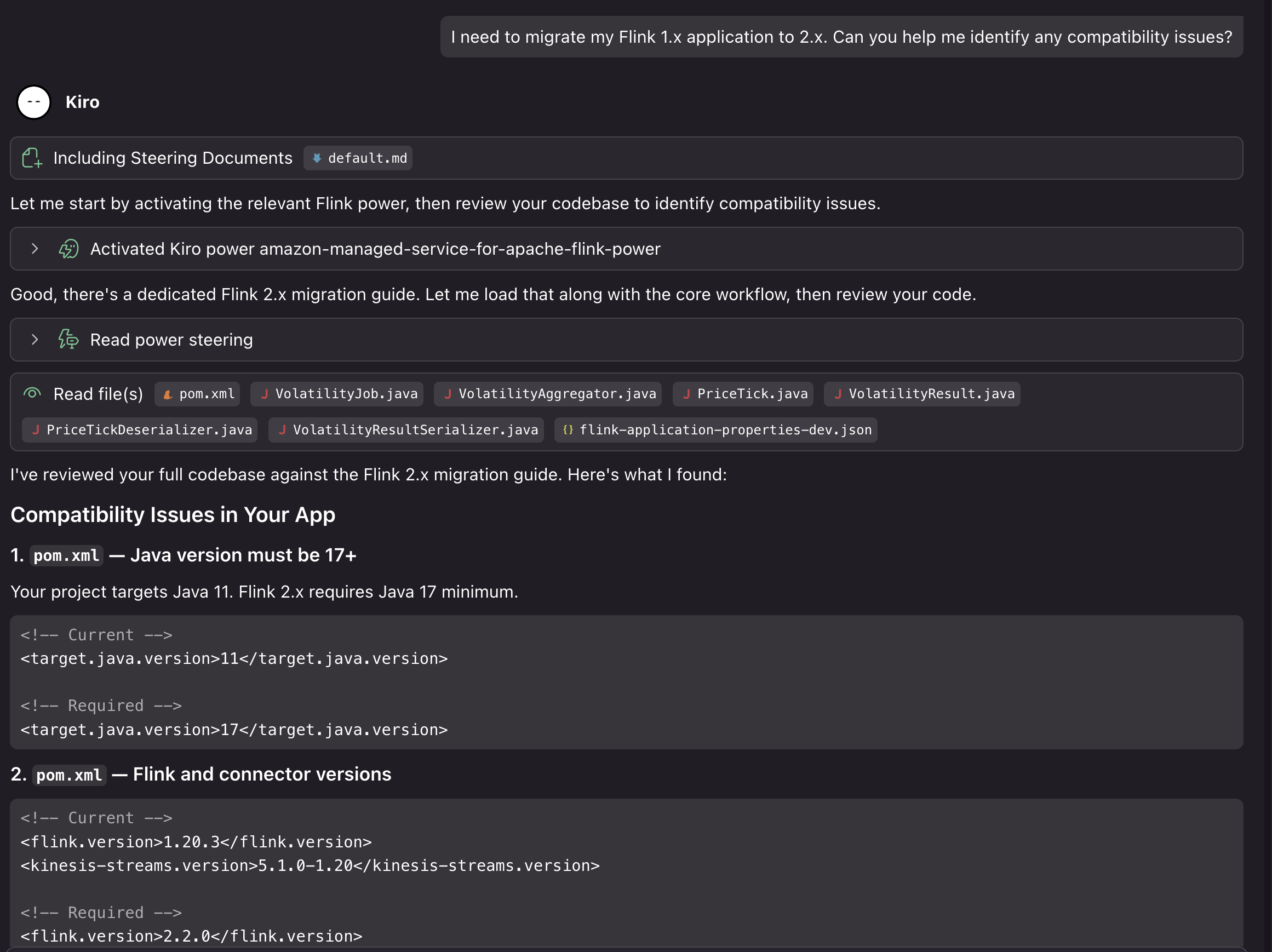

We can ask Kiro to help us upgrade our project from the previous example to Flink 2.2: “I need to migrate my Flink 1.x application to 2.2. Can you help me identify compatibility issues?”

The assistant loads the Managed Service for Apache Flink Kiro Power and analyzes our code to identify potential issues:

In this case, using our generated project on Flink 1.20, Kiro identified the following compatibility issues for the upgrade:

- Java 11 must move to Java 17 (minimum for Flink 2.2)

- Flink version 1.20.3 must update to 2.2.0

- The Kinesis connector must update from 5.1.0-1.20 to 6.0.0-2.0

- Time references must change to java.time.Duration in window and lateness calls

- The LocalStreamEnvironment instanceof check must be removed (class removed in 2.2)

- The isEndOfStream() override must be dropped from PriceTickDeserializer (method removed)

- implements Serializable must be added to PriceTick and VolatilityResult

It also verified that some parts of the project are already Flink 2.2 compatible. The project uses the new Source and Sink V2 APIs, the logging is 2.2 ready, the POJOs with no collection fields are state migration safe, and there are no Kryo registrations or TimeCharacteristic usage.
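Two of the findings above, the move to java.time.Duration and the added Serializable marker, can be illustrated without any Flink dependency. The following is a minimal sketch under stated assumptions: the fields of PriceTick, the window size, and the lateness value are hypothetical, because the post names the class but does not show its contents. In a Flink 2.x job, the Duration values would be passed to window and lateness calls in place of the removed Time helper.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.time.Duration;

public class Flink2MigrationSketch {

    // Hypothetical fields: the post names PriceTick but does not show its definition.
    public static class PriceTick implements Serializable {
        private static final long serialVersionUID = 1L;
        public String symbol;
        public double price;
        public long eventTimeMillis;

        public PriceTick() {
            // Public no-argument constructor, as POJO-style classes usually provide.
        }

        public PriceTick(String symbol, double price, long eventTimeMillis) {
            this.symbol = symbol;
            this.price = price;
            this.eventTimeMillis = eventTimeMillis;
        }
    }

    // Illustrative values expressed as java.time.Duration instead of Flink's Time class.
    static final Duration WINDOW_SIZE = Duration.ofMinutes(1);
    static final Duration ALLOWED_LATENESS = Duration.ofSeconds(10);

    /** Serializes and deserializes a tick to confirm the class is Java-serializable. */
    static PriceTick roundTrip(PriceTick tick) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(tick);
            }
            try (ObjectInputStream in =
                    new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                return (PriceTick) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("PriceTick could not be round-tripped", e);
        }
    }

    public static void main(String[] args) {
        PriceTick copy = roundTrip(new PriceTick("EXAMPLE", 101.5, 1_700_000_000_000L));
        System.out.println(copy.symbol + " " + copy.price
                + " window=" + WINDOW_SIZE.toMillis() + "ms"
                + " lateness=" + ALLOWED_LATENESS.toMillis() + "ms");
    }
}
```

A round-trip check like this is a cheap unit test to add before a migration, because it fails fast if a newly added field is not serializable.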

2. Implementing the migration

We can then ask Kiro to provide a step-by-step migration plan, both for updating the code and deploying to Managed Service for Apache Flink: “Can you help me update the application for Flink 2.2, and help me identify the steps to upgrade my running Managed Service for Apache Flink application?”

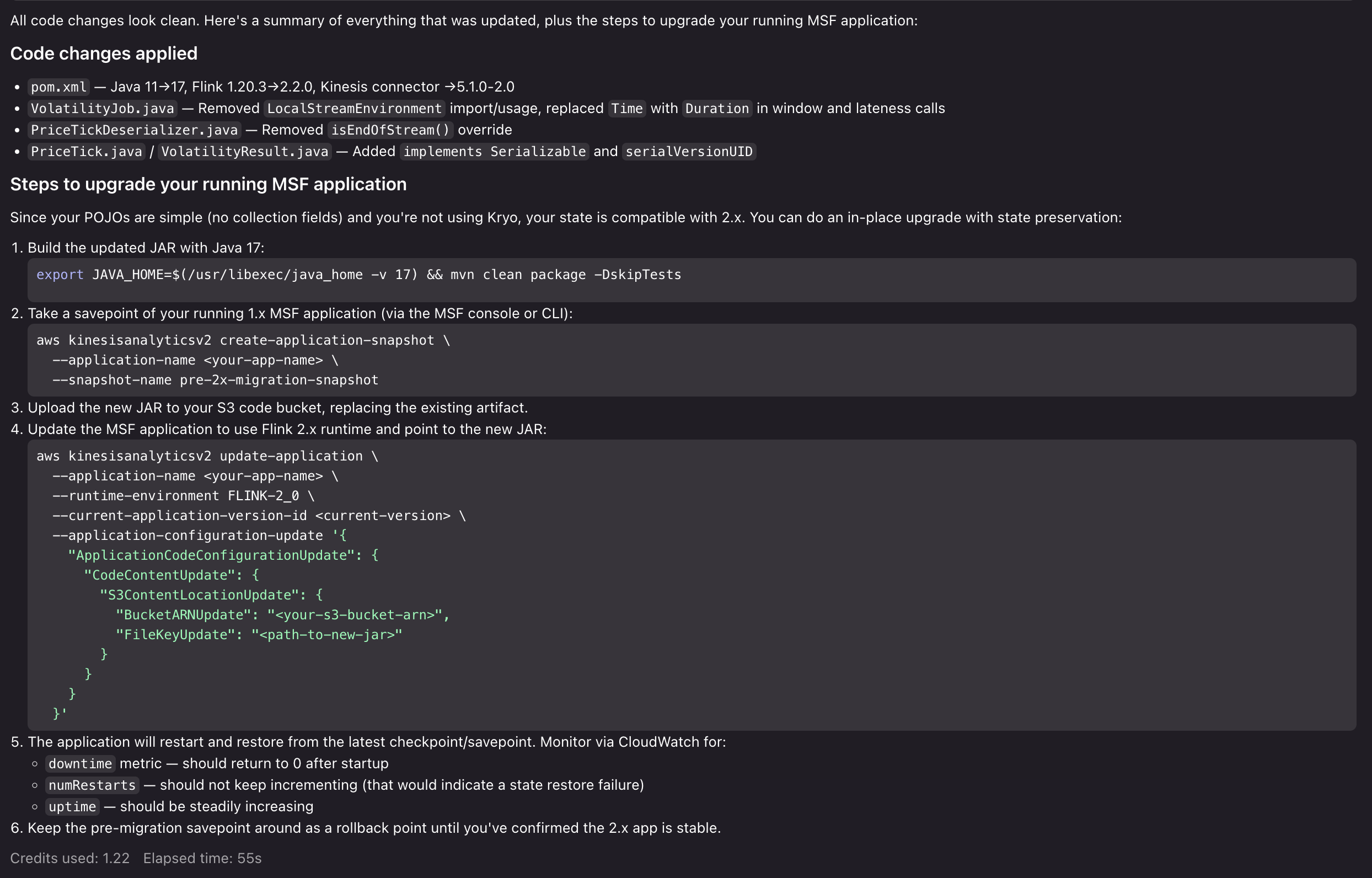

Kiro evaluates the full application code base against the Power’s migration guidance and best practices, and provides a comprehensive assessment of the breaking changes, risks, and potential issues that could arise in the upgrade. After we approve the changes, Kiro makes the required updates to make our application compatible with Flink 2.2 and provides us with a step-by-step upgrade process for the running application:

Now that Kiro has prepared the application for Flink 2.2, highlighted migration risks, and provided us with a clear path to execute the upgrade, you can test the upgrade process with confidence. From here, we can proceed to run our Flink 2.2 application locally, test the upgrade process in a development environment in Managed Service for Apache Flink, and then execute the upgrade in our production environment. If we run into issues, we can return to the Kiro Power to get advice, resolve issues, and unblock our upgrade.

Cleanup

To remove the Power/Skill installation:

For Kiro:

- Open Kiro IDE.

- Navigate to the Powers tab.

- Uninstall the Amazon Managed Service for Apache Flink Power.

For Agent Skills:

- Delete the Managed Service for Apache Flink application from the AWS Console.

- Remove associated resources for sources and sinks, if created for development.

- Delete CloudWatch log groups if no longer needed.

Conclusion

In this post, we showed you how the Kiro Power and Agent Skill for Amazon Managed Service for Apache Flink brings AI-assisted development to stream processing. You can use the tool to overcome Flink’s learning curve, build applications following Managed Service for Apache Flink best practices, and migrate to Flink 2.2 with confidence. To get started, choose the path that matches your workflow:

- If you use Kiro, install the Power from the Powers tab and start a new chat with a Flink-related prompt.

- If you use Cursor, Claude Code, or another Agent Skills-compatible tool, clone the GitHub repository into your skills directory and reference the steering/ files for guidance.

- If you are new to Amazon Managed Service for Apache Flink, review the Amazon Managed Service for Apache Flink Developer Guide and the Apache Flink documentation to build foundational knowledge alongside the Power/Skill.

We welcome your feedback. Report issues or request features through GitHub Issues, or contribute improvements via pull requests.

About the authors