Introduction

Within the Databricks intelligence platform, we recurrently discover and use new AI methods to enhance engine efficiency and simplify the consumer expertise. Right here we current experimental outcomes on making use of frontier fashions to one of many oldest database challenges: be part of ordering.

This weblog is a part of a analysis collaboration with UPenn.

The Drawback: Be a part of Ordering

Since their inception, question optimizers in relational databases have struggled to seek out good be part of orderings for SQL queries. As an example the problem of be part of ordering, contemplate the next question:

What number of films had been produced by Sony and starred Scarlett Johansson?

Suppose we wish to execute this question over the next schema:

| Desk identify | Desk columns |

|---|---|

| Actor | actorID, actorName, actorDOB, … |

| Firm | companyID, companyName, … |

| Stars | actorID, movieID |

| Produces | companyID, movieID |

| Film | movieID, movieName, movieYear , … |

The Actor, Firm, and Film entity tables are related through the Produces and Stars relationship tables (e.g., through overseas keys). A model of this question in SQL is perhaps:

Logically, we wish to carry out a easy be part of operation: Actor ⋈ Stars ⋈ Film ⋈ Produces ⋈ Firm. However bodily, since every of those joins are commutative and associative, we’ve got a variety of choices. The question optimizer might select to:

- First discover all films starring Scarlett Johansson, then filter out for simply the flicks produced by Sony,

- First discover all films produced by Sony, then filter out these films starring Scarlett Johansson,

- In parallel, compute Sony films and Scarlett Johansson films, then take the intersection.

Which plan is perfect is determined by the information: if Scarlett Johansson has starred in considerably fewer films than Sony has produced, the primary plan is perhaps optimum. Sadly, estimating this amount is as troublesome as executing the question itself (typically). Even worse, there are usually way over 3 plans to select from, because the variety of doable plans grows exponentially with the variety of tables — and analytics queries recurrently be part of 20-30 completely different tables.

How does be part of ordering work right now? Conventional question optimizers remedy this drawback with three elements: a cardinality estimator designed to rapidly guess the scale of subqueries (e.g., to guess what number of films Sony has produced), a price mannequin to match completely different potential plans, and a search process that navigates the exponentially-large house. Cardinality estimation is very troublesome, and has led to a variety of analysis searching for to enhance estimation accuracy utilizing a variety of approaches [A].

All of those options add vital complexity to a question optimizer, and require vital engineering effort to combine, preserve, and productionize. However what if LLM-powered brokers, with their skills to adapt to new domains with prompting, maintain the important thing to fixing this decades-old drawback?

Agentic be part of ordering

When question optimizers choose a foul be part of ordering1, human specialists can repair it by diagnosing the difficulty (usually a misestimated cardinality), and instructing the question optimizer to decide on a unique ordering. This course of usually requires a number of rounds of testing (e.g., executing the question) and manually inspecting the middleman outcomes.

Question optimizers sometimes want to choose be part of orders in just a few hundred milliseconds, so integrating an LLM into the recent path of the question optimizer, whereas probably promising, will not be doable right now. However, the iterative and handbook means of optimizing the be part of order for a question, which could take a human knowledgeable a number of hours, might probably be automated with an LLM agent! This agent tries to automate that handbook tuning course of.

To check this, we developed a prototype question optimization agent. The agent has entry to a single software, which executes a possible be part of order for a question and returns the runtime of the be part of order (with a timeout of the unique question’s runtime) and the scale of every computed subplan (e.g., the EXPLAIN EXTENDED plan).

We let the agent run for 50 iterations, permitting the agent to freely check out completely different be part of orders. The agent is free to make use of these 50 iterations to check out promising plans (“exploitation”), or to discover risky-but-informative alternate options (“exploration”). Afterwards, we accumulate the perfect performing be part of order examined by the agent, which turns into our last consequence. However how do we all know the agent picked a legitimate be part of order? To make sure correctness, every software name generates a be part of ordering utilizing structured mannequin outputs, which forces the mannequin’s output to match a grammar we specify to solely admit legitimate be part of reorderings. Observe that this differs from prior work [B] that asks the LLM to choose a be part of order immediately within the “scorching path” of the question optimizer; as a substitute, the LLM will get to behave like an offline experimenter that tries many candidate plans and learns from the noticed outcomes – similar to a human tuning a be part of order by hand!.

To guage our agent in DBR, we used the Be a part of Order Benchmark (JOB), a set of queries that had been designed to be troublesome to optimize. Because the dataset utilized by JOB, the IMDb dataset, is just round 2GB (and subsequently Databricks might course of even poor be part of orderings pretty rapidly), we scaled up the dataset by duplicating every row 10 occasions [C].

We let our agent take a look at 15 be part of orders (rollouts) per question for all 113 queries within the be part of order benchmark. We report outcomes on the perfect be part of order discovered for every question. When utilizing a frontier mannequin, the agent was capable of enhance question latency by an element of 1.288 (geomean). This outperforms utilizing good cardinality estimates (intractable in apply), smaller fashions, and the latest BayesQO offline optimizer (though BayesQO was designed for PostgreSQL, not Databricks).

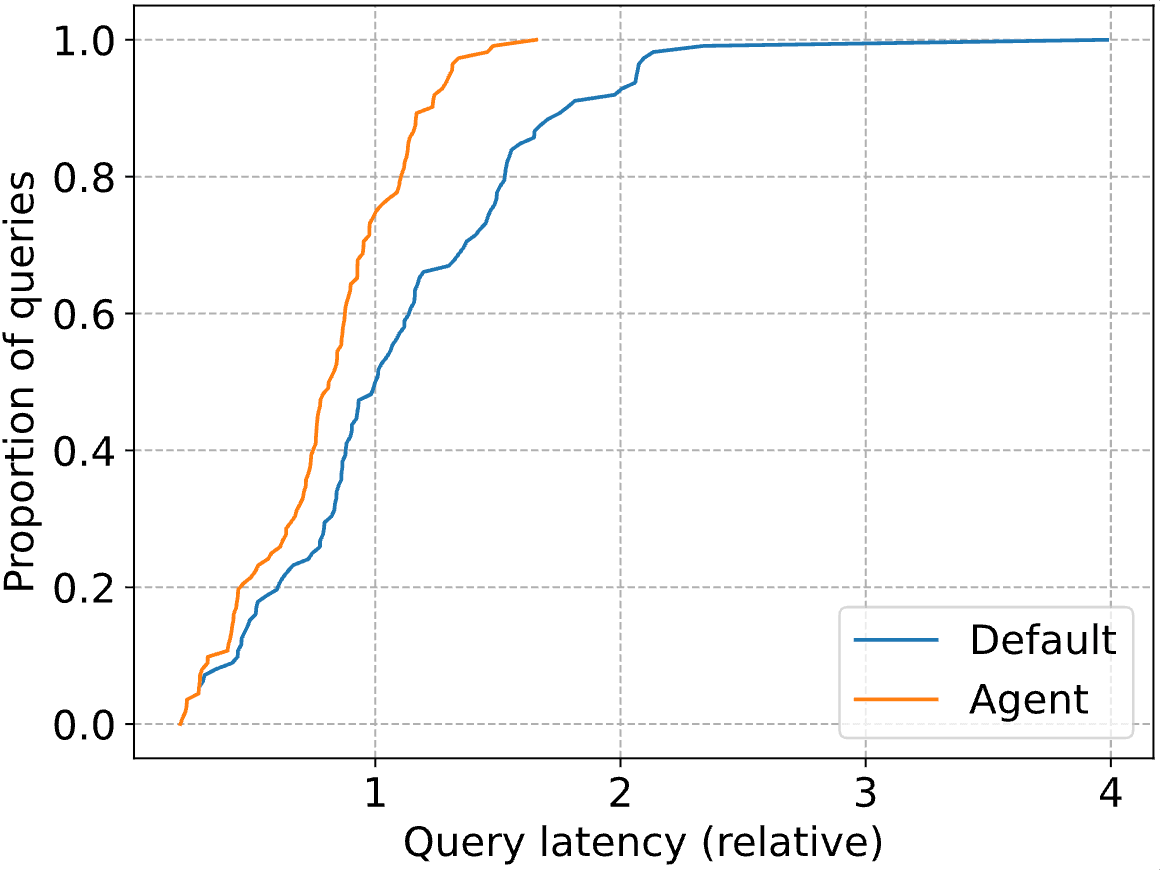

The true spectacular positive aspects are within the tail of the distribution, with the P90 question latency dropping by 41%. Beneath, we plot your entire CDF for each the usual Databricks optimizer (”Default”) and our agent (”Agent). Question latencies are normalized to the median latency of the Databricks optimizer (i.e., at 1, the blue line reaches a proportion of 0.5).

Our agent progressively improves the workload with every examined plan (typically known as a rollout), making a easy “anytime algorithm” the place bigger time budgets will be translated into additional question efficiency. After all, finally question efficiency will cease enhancing.

One of many largest enhancements our agent discovered was in question 5b, a easy 5-way be part of which seems for American manufacturing corporations that launched a post-2010 film on VHS with a notice referencing 1994. The Databricks optimizer targeted first on discovering American VHS manufacturing corporations (which is certainly selective, producing solely 12 rows). The agent finds a plan that first seems for VHS releases referencing 1994, which seems to be considerably sooner. It is because the question makes use of LIKE predicates to establish VHS releases, that are exceptionally troublesome for cardinality estimators.

Our prototype demonstrates the promise of agentic programs autonomously repairing and enhancing database queries. This train raised a number of questions on agent design in our minds:

- What instruments ought to we give the agent? In our present strategy, the agent can execute candidate be part of orders. Why not let the agent concern particular cardinality queries (e.g., compute the scale of a selected subplan), or queries to check sure assumptions in regards to the information (e.g., to find out that there have been no DVD releases previous to 1995).

- When ought to this agentic optimization be triggered? Certainly, a consumer can flag a problematic question manually for intervention. However might we additionally proactively apply this optimization to regularly-running queries? How can we decide if a question has “potential” for optimization?

- Can we mechanically perceive enhancements? When the agent finds a greater be part of order than the one discovered by the default optimizer, this be part of order will be considered as a proof that the default optimizer is selecting a suboptimal order. If the agent corrects a scientific error within the underlying optimizer, can we uncover this and use it to enhance the optimizer?

After all, we’re not the one ones occupied with the potential of LLMs for question optimization [D]. At Databricks, we’re enthusiastic about the potential of harnessing the generalizability of LLMs to enhance information programs themselves.

In case you are on this subject, see additionally our followup UCB weblog on “How do LLM brokers assume by means of SQL be part of orders?“.

Be a part of Us

As we glance forward, we’re excited to maintain pushing the boundaries of how AI can form database optimizations. If you happen to’re obsessed with constructing the subsequent technology of database engines, be part of us!

1 Databricks use methods like runtime filters to mitigate the affect of poor be part of orders. The outcomes introduced right here embody these methods.

Notes

A. Methods for cardinality estimation have included, for instance, adaptive suggestions, deep studying, distribution modeling, database idea, studying idea, and issue decompositions. Prior work has additionally tried to thoroughly exchange the normal question optimizer structure with deep reinforcement studying, multi-armed bandits, Bayesian optimization, or extra superior be part of algorithms.

B. RAG-based approaches, for instance, have been used to construct “LLM within the scorching path” programs.

C. Whereas crude, this strategy has been utilized in prior work.

D. Different researchers have proposed RAG-based question restore programs, LLM-powered question rewrite programs, and even complete database programs synthesized by LLMs.