Enterprise knowledge isn’t helpful in a silo. Answering questions like, “Which of our merchandise have had declining gross sales over the previous three months, and what doubtlessly associated points are introduced up in buyer opinions on numerous vendor websites?” requires reasoning throughout a mixture of structured and unstructured knowledge sources, together with knowledge lakes, overview knowledge, and product info administration methods. On this weblog, we display how Databricks Agent Bricks Supervisor Agent (SA) may help with these complicated, real looking duties by multi-step reasoning grounded in a hybrid of structured and unstructured knowledge.

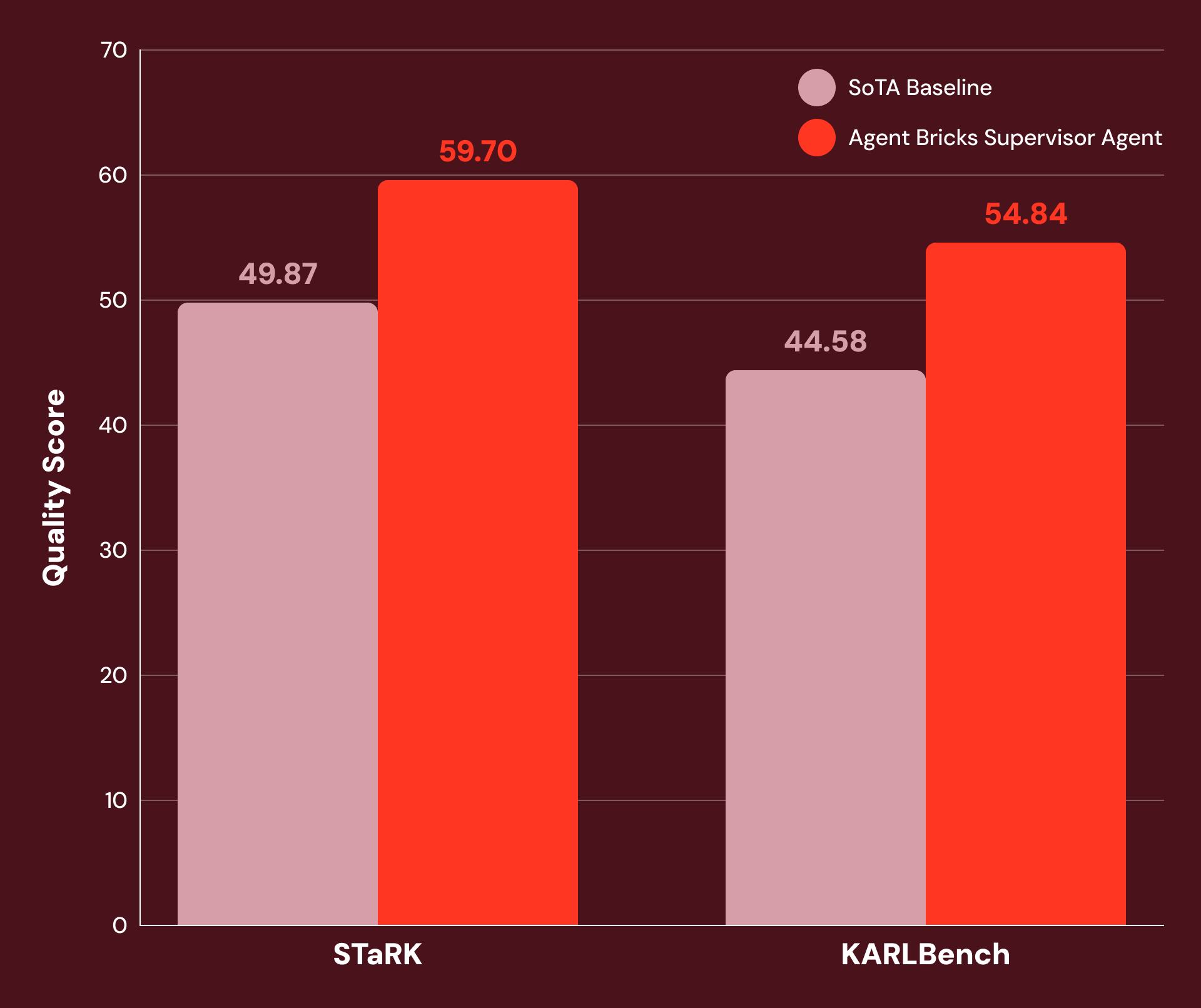

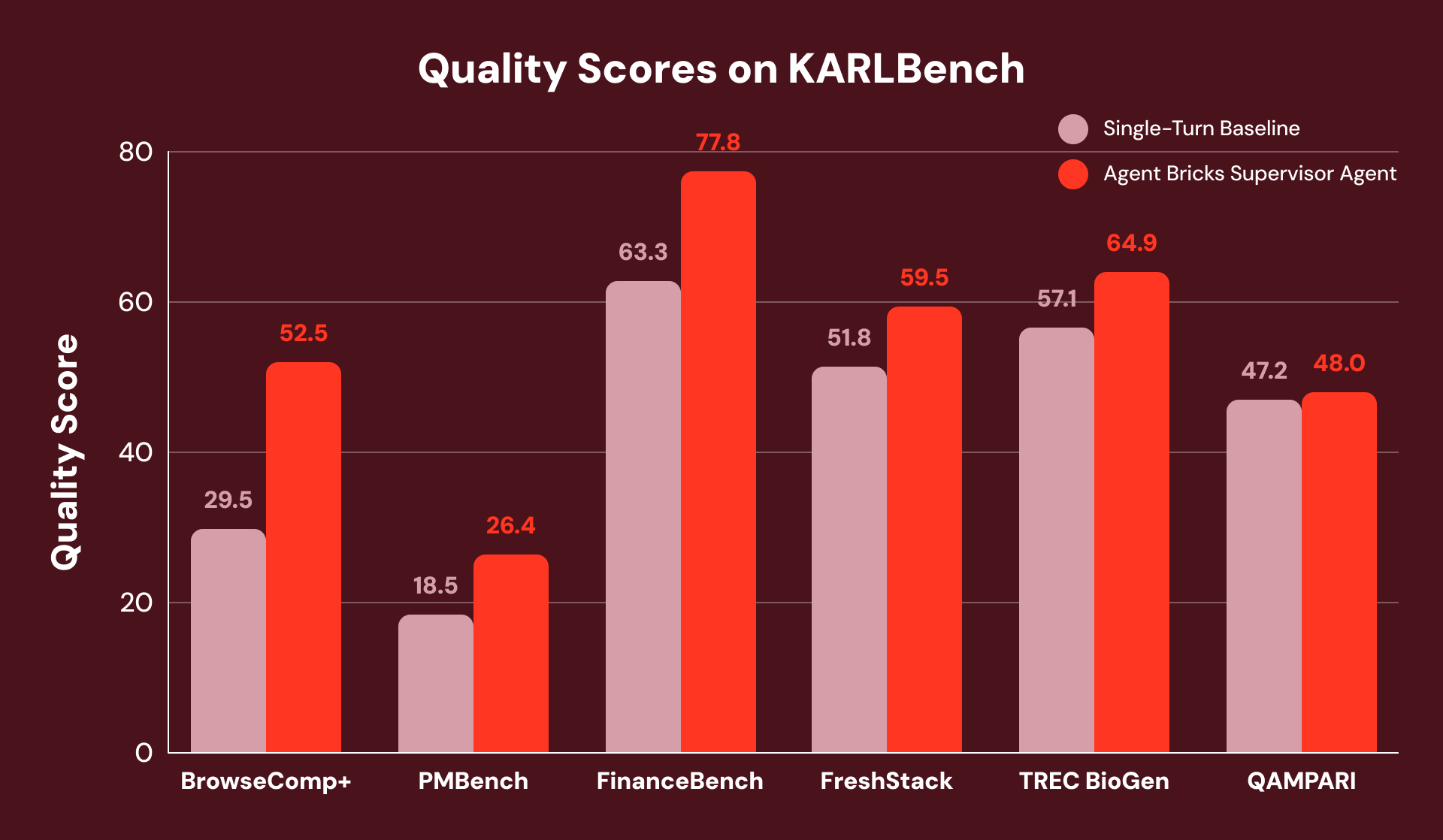

With tuned directions and cautious device configuration, we discover SA to be extremely performant on a variety of knowledge-intensive enterprise duties. Determine 1 exhibits that SA achieves 20% or extra enchancment over SoTA baselines on:

- STaRK: a collection of three semi-structured retrieval duties printed by Stanford researchers.

- KARLBench: a benchmark suite for complicated grounded reasoning just lately printed by Databricks.

Supervisor Agent demonstrates vital positive factors on a variety of economically worthwhile duties: from tutorial retrieval (+21% on STaRK-MAG) to biomedical reasoning (+38% on STaRK Prime) and monetary evaluation (+23% on FinanceBench).

Agent Setup

Agent Bricks Supervisor Agent is a declarative agent builder that orchestrates brokers and instruments. It’s constructed on aroll — an inside agentic framework for constructing, evaluating, and deploying multi-step LLM workflows at scale.1 aroll and SA have been particularly designed for the superior agentic use instances our prospects ceaselessly encounter.

aroll permits including new instruments and customized directions by easy configuration adjustments, can deal with hundreds of concurrent conversations and parallel device executions, and incorporates superior agent orchestration and context administration strategies to refine queries and get better from partial solutions. All of those are tough to attain with SoTA single-turn methods at the moment.

As a result of SA is constructed on this versatile structure, its high quality might be frequently improved by easy person curation, corresponding to tweaking top-level directions or refining agent descriptions, with no need to write down any customized code.

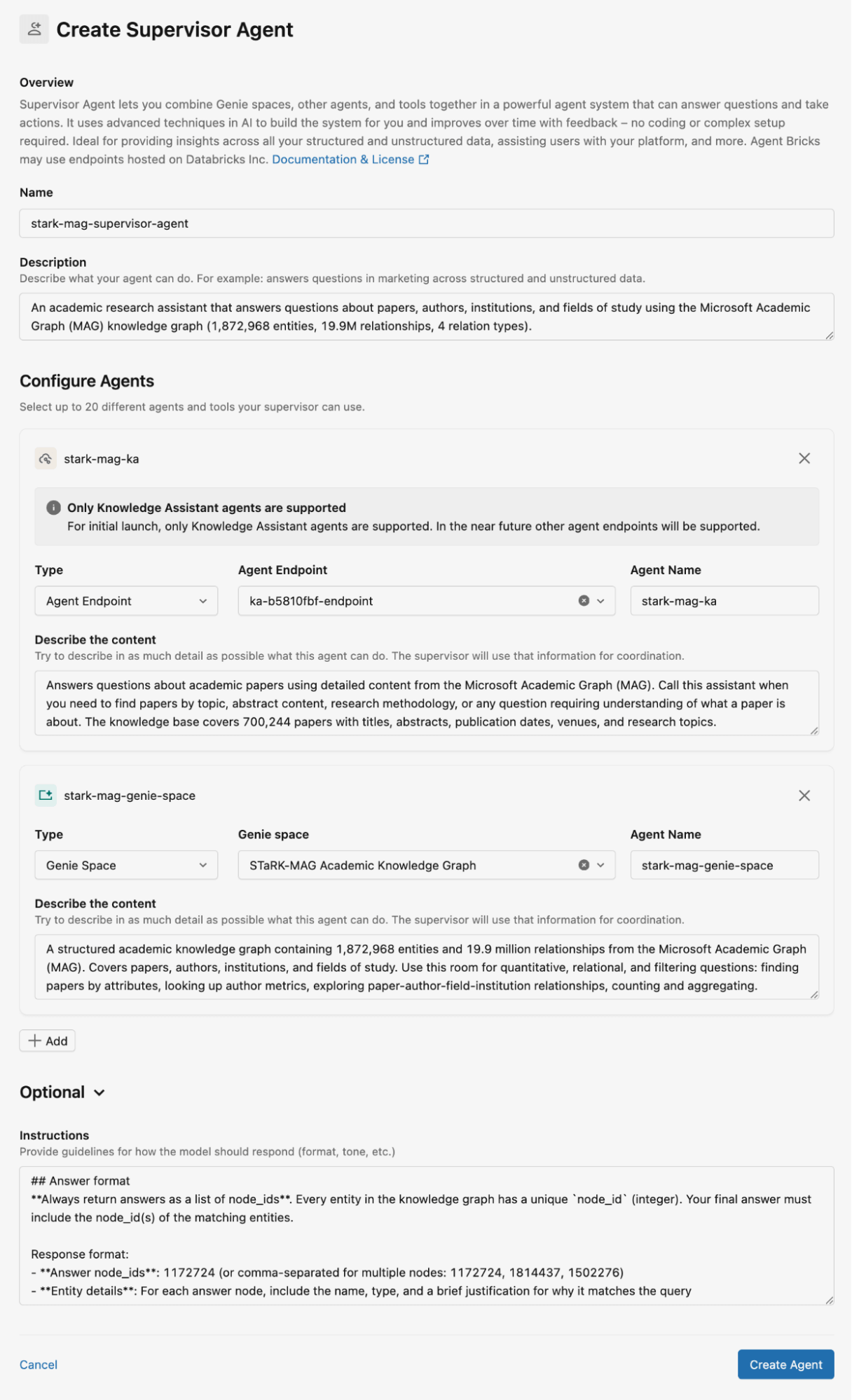

Determine 2 exhibits how we configured the Supervisor Agent for the STaRK-MAG dataset. On this weblog, we use Genie areas for storing the relational data bases and Information Assistants for storing unstructured paperwork for retrieval. We offer detailed descriptions for all Information Assistants and Genie areas, in addition to directions for the agent responses.

Hybrid Reasoning: Structured Meets Unstructured

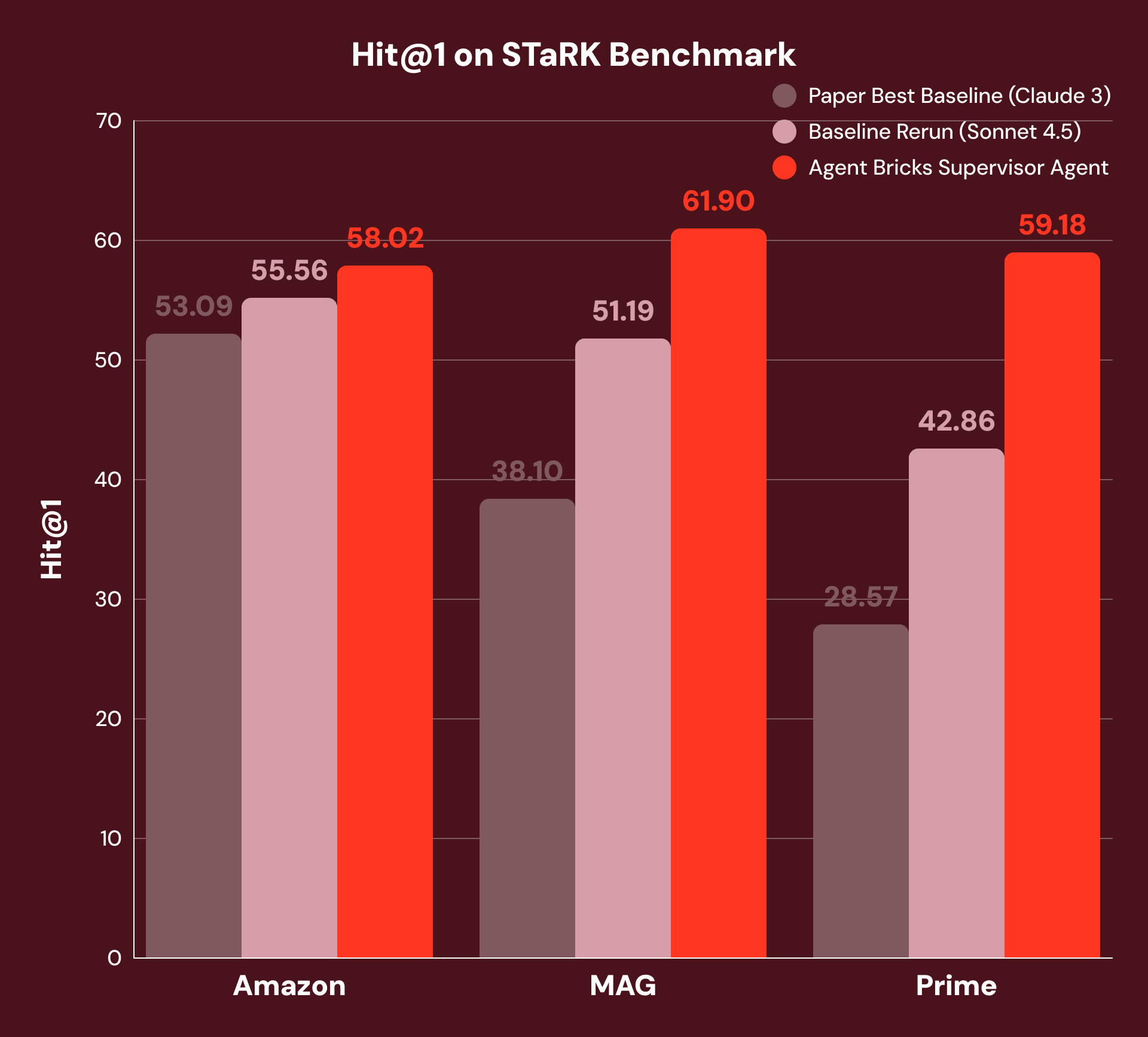

To judge grounded reasoning primarily based on a hybrid of structured and unstructured knowledge, we use the STaRK benchmark, which incorporates three domains:

- Amazon: product attributes (structured) and opinions (unstructured)

- MAG: quotation networks (structured) and tutorial papers (unstructured)

- Prime: biomedical entities (structured) and literature (unstructured)

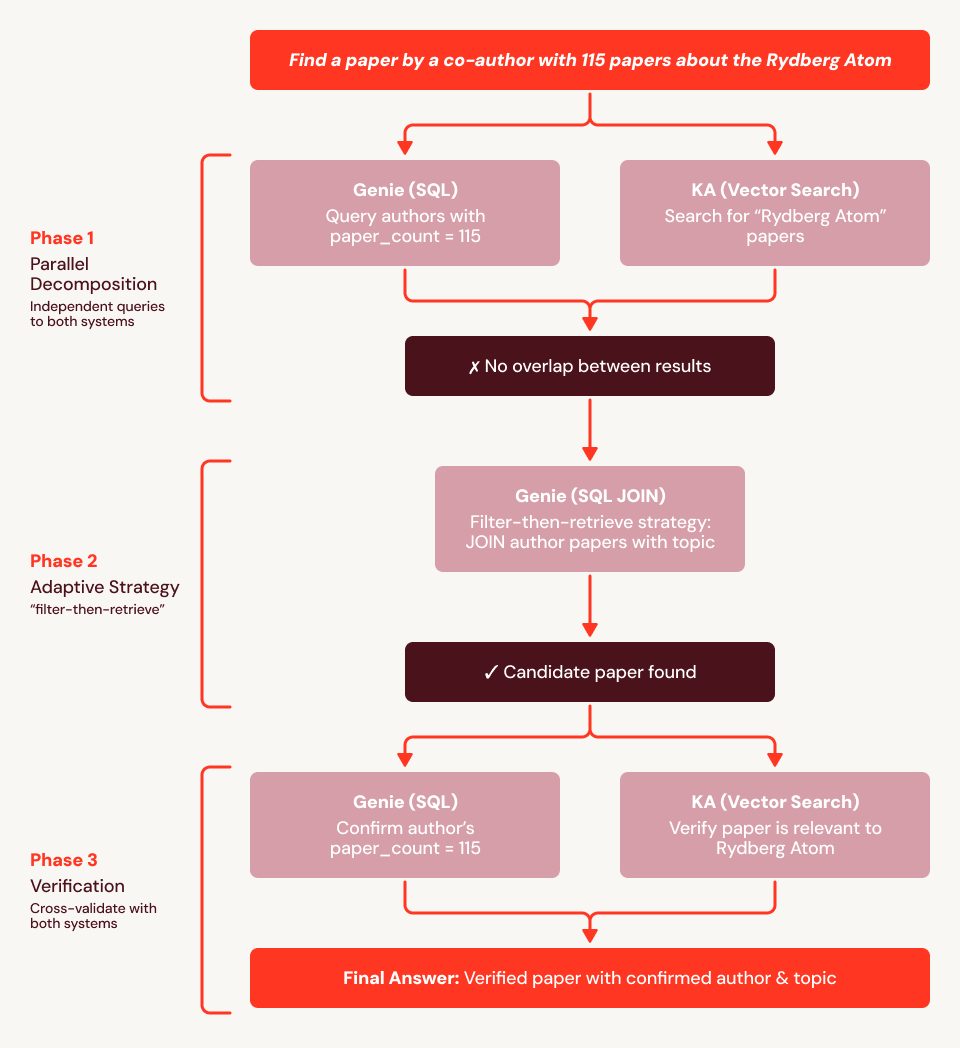

For instance, “Discover me a paper written by a co-author with 115 papers and is in regards to the Rydberg atom” requires the system to mix structured filtering (“co-author with 115 papers”) with unstructured understanding (“in regards to the Rydberg atom”). The greatest printed baselines use vector similarity search with an LLM-based reranker — a robust single-turn method, however one that can’t decompose queries throughout knowledge varieties. To make sure a good comparability, we reran this baseline with the present SoTA foundational mannequin, offering a considerably stronger baseline.

With our method, SA decomposes every query, routes sub-questions to the suitable device, and synthesizes outcomes throughout a number of reasoning steps. As Determine 3 exhibits, this achieves +4% Hit@1 on Amazon, +21% on MAG, and +38% on Prime over each the very best of the unique baselines and our rerun baselines with the present SoTA foundational mannequin. We see the very best enhancements in MAG and Prime the place the reply requires the tightest integration of structured and unstructured knowledge.

Utilizing our instance query from above (“Discover me a paper written by a co-author with 115 papers and is in regards to the Rydberg atom”), we discover the baseline fails as a result of the embeddings can’t encode the structural constraint (“co-author has precisely 115 papers”). In Determine 4, we present an execution hint for SA: it first makes use of Genie to search out all 759 authors with 115 papers and Information Assistant to retrieve Rydberg papers, then cross-references the 2 units. When no overlap is discovered, SA adapts: it points a SQL JOIN of the 115-paper writer record towards all papers mentioning “Rydberg” within the title or summary, surfacing the reply straight from the structured knowledge. It then calls Information Assistant to confirm relevance and Genie to verify the writer’s paper rely, and efficiently returns the appropriate paper.

The Agentic Benefit on Information-Intensive Duties

To match the efficiency of Agent Bricks SA with a robust single-turn baseline (just like the very best printed baseline for STaRK) the place no structured knowledge is required, we consider them utilizing KARLBench, a grounded reasoning benchmark suite that collectively stress-tests totally different retrieval and reasoning capabilities:

- BrowseComp+: process-of-elimination entity search

- TREC BioGen: biomedical literature synthesis

- FinanceBench: numerical reasoning over monetary filings

- QAMPARI: exhaustive entity recall

- FreshStack: technical troubleshooting over documentation

- PMBench: Databricks inside enterprise doc comprehension

General, the Supervisor Agent achieves constant positive factors throughout all six benchmarks, with the most important enhancements on duties that demand both exhaustive evaluation or self-correction. On FinanceBench, it recovers from initially incomplete retrieval by detecting gaps and reformulating queries, yielding general +23% enchancment.

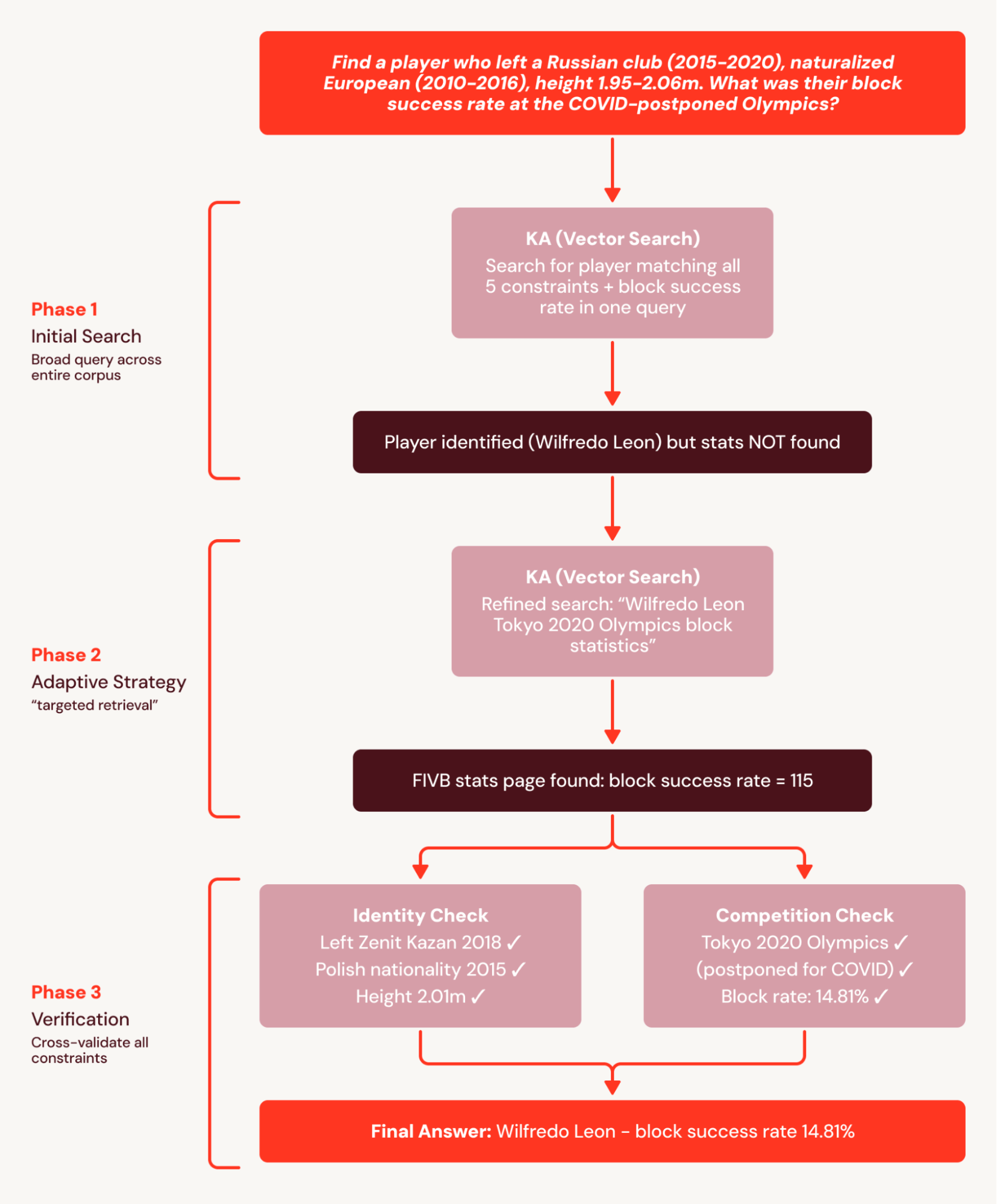

For instance, BrowseComp+’s questions every have 5-10 interlocking constraints, like “Discover a participant who left a Russian membership (2015-2020), naturalized European (2010-2016), top 1.95-2.06m. What was their block success fee on the COVID-postponed Olympics?” The one-turn baseline points one broad question that accurately identifies the participant however fails to floor granular statistics paperwork and fails the query.

SA breaks this process right into a coordinated search plan and decomposes the plan into searchable subsets. This avoids the single-turn baseline failure the place stats will not be discovered as a result of they’re retrieved in a subsequent search. In consequence, SA achieves a +78% relative enchancment.

In one other instance from PMBench, one of many questions is “what are the guardrail varieties prospects are utilizing” which requires 26 nuggets (see definition in KARL report) throughout 10+ buyer dialog paperwork for an exhaustive reply. Single-turn baseline finds just one buyer point out as a result of it can’t search throughout each guardrail class in a single query. SA searches every guardrail class individually (“PII detection,” “hallucination,” “toxicity,” “immediate injection”), and incrementally surfaces increasingly more buyer mentions within the course of.

What We Realized

The outcomes throughout our experiments level to some key takeaways:

- Grounded reasoning brokers can profit from a hybrid of structured and unstructured knowledge retrieval if given entry to the suitable instruments and knowledge representations.

- For prime-quality retrieval situations, constructing customized RAG pipelines over heterogeneous datasets ought to be prevented, even when SoTA fashions are used for the re-ranking stage. Multi-step reasoning the place, at every step, the agent selects the suitable knowledge supply and displays on its utility, is essential for upleveling efficiency.

- A declarative method to agent constructing such because the one carried out by the Databricks Supervisor Agent supplies a great trade-off between ease of use and high quality.

We use the Databricks Supervisor Agent to construct brokers for all three STaRK domains and 6 unstructured datasets in KARLBench. The one issues that differ throughout these 9 duties are the directions and instruments — no customized code was required to course of these numerous datasets. Thus, constructing a performant agent for a brand new enterprise process is essentially a matter of writing exact directions and equipping it with the suitable instruments, relatively than constructing a brand new system from scratch.

Agent Bricks Supervisor Agent is obtainable to all our prospects. You may get began with Agent Bricks SA just by creating an agent and connecting it to your present brokers, instruments and MCP servers. Discover the documentation to see how Supervisor Agent matches into your manufacturing workflows.

Authors: Xinglin Zhao, Arnav Singhvi, Mark Rizkallah, Jonathan Li, Jacob Portes, Elise Gonzales, Sabhya Chhabria, Kevin Wang, Yu Gong, Moonsoo Lee, Michael Bendersky and Matei Zaharia.

1See our current publication “KARL: Information Brokers by way of Reinforcement Studying” for extra particulars how aroll is used for artificial knowledge era, scalable RL coaching and on-line inference for agentic process.