Over the past few months, we’ve launched exciting updates to Lakeflow Jobs (formerly known as Databricks Workflows) to improve data orchestration and optimize workflow performance.

For newcomers, Lakeflow Jobs is the built-in orchestrator for Lakeflow, a unified and intelligent solution for data engineering with streamlined ETL development and operations, built on the Data Intelligence Platform. Lakeflow Jobs is the most trusted orchestrator for the Lakehouse and production-grade workloads, with over 14,600 customers, 187,000 weekly users, and 100 million jobs run every week.

From UI improvements to more advanced workflow control, check out the latest in Databricks’ native data orchestration solution and discover how data engineers can streamline their end-to-end data pipeline experience.

Refreshed UI for a more focused user experience

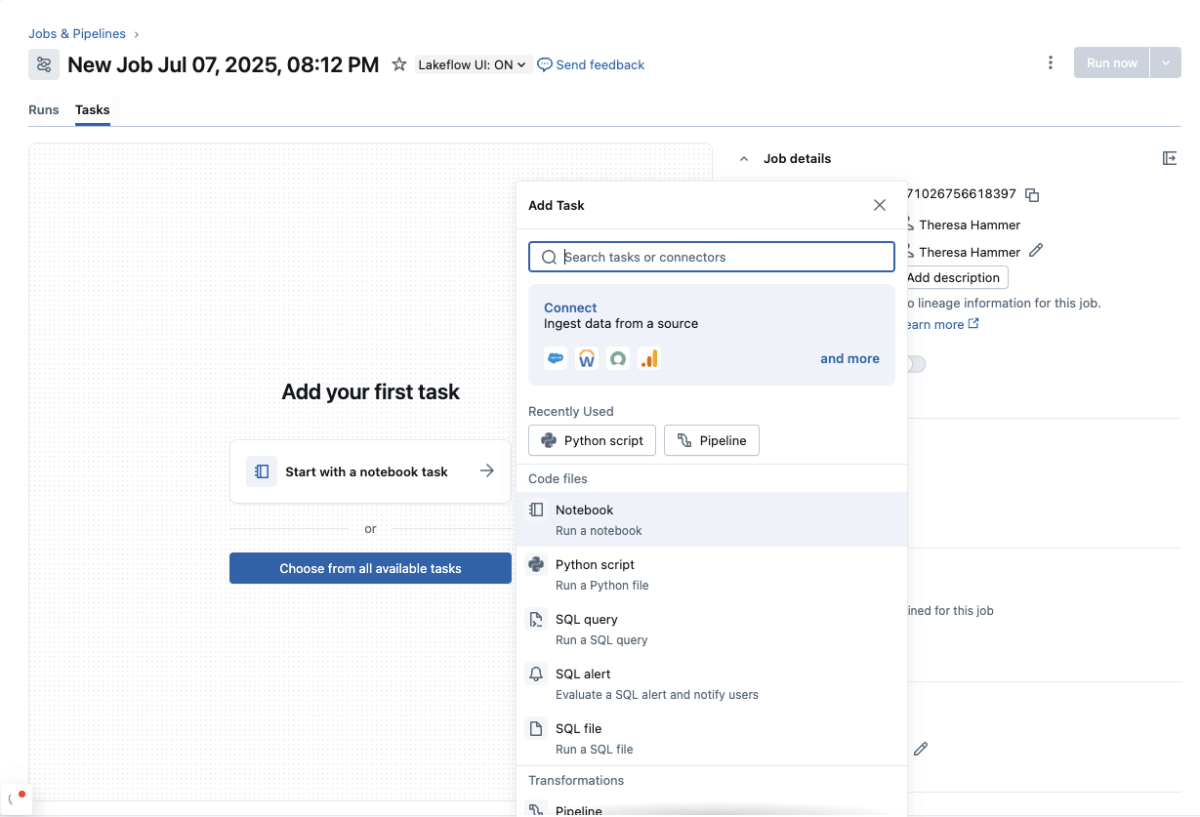

We’ve redesigned our interface to give Lakeflow Jobs a fresh and modern look. The new compact layout allows for a more intuitive orchestration journey. Users will enjoy a task palette that now offers shortcuts and a search button to help them find and access their tasks more easily, whether it’s a Lakeflow Pipeline, an AI/BI dashboard, a notebook, SQL, or more.

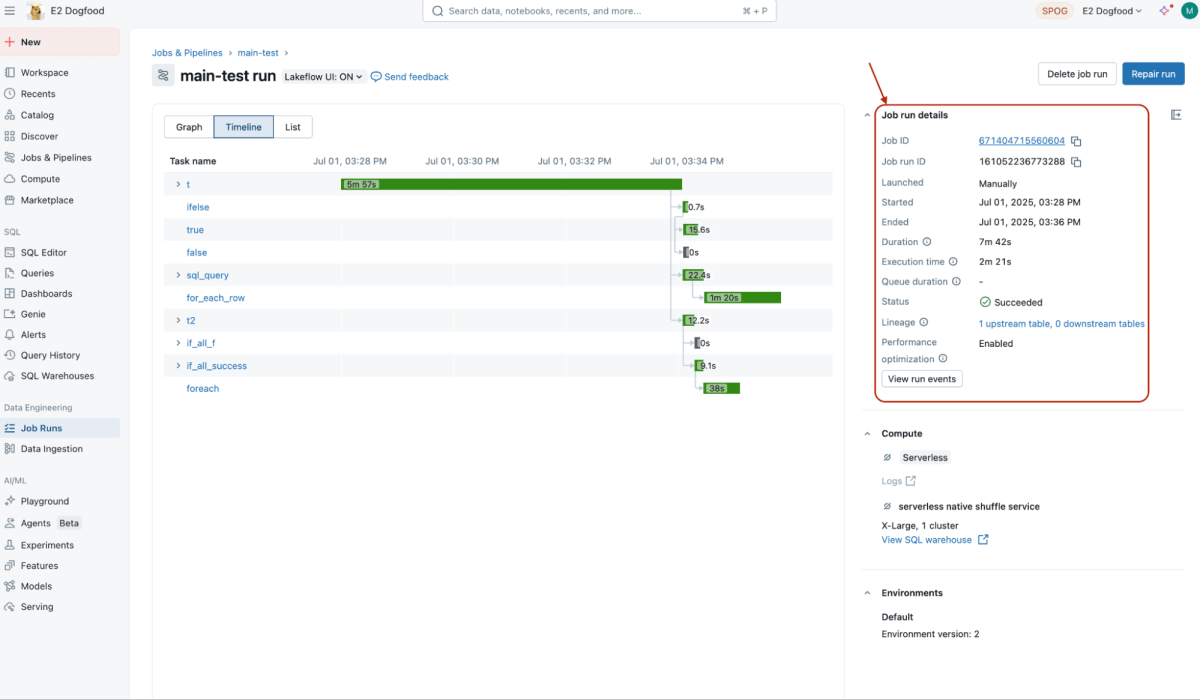

For monitoring, customers can now find information on their jobs’ execution times in the right panel under Job and Task run details, making it easy to track processing times and quickly identify any data pipeline issues.

We’ve also improved the sidebar by letting users choose which sections (Job details, Schedules & Triggers, Job parameters, etc.) to hide or keep open, making their orchestration interface cleaner and more relevant.

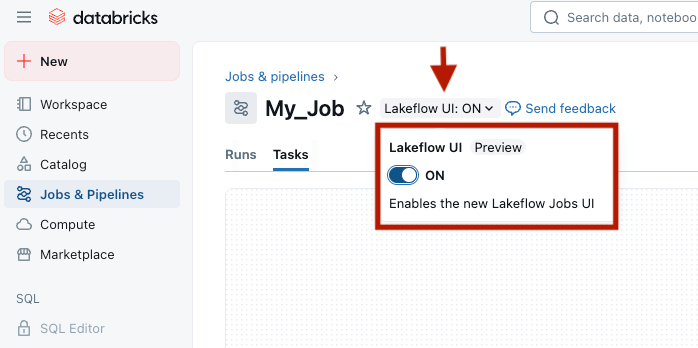

Overall, Lakeflow Jobs users can expect a more streamlined, focused, and simplified orchestration workflow. The new layout is currently available to users who’ve opted into the preview and enabled the toggle on the Jobs page.

More controlled and efficient data flows

Our orchestrator is constantly being enhanced with new features. The latest update introduces advanced controls for data pipeline orchestration, giving users greater command over their workflows for more efficiency and optimized performance.

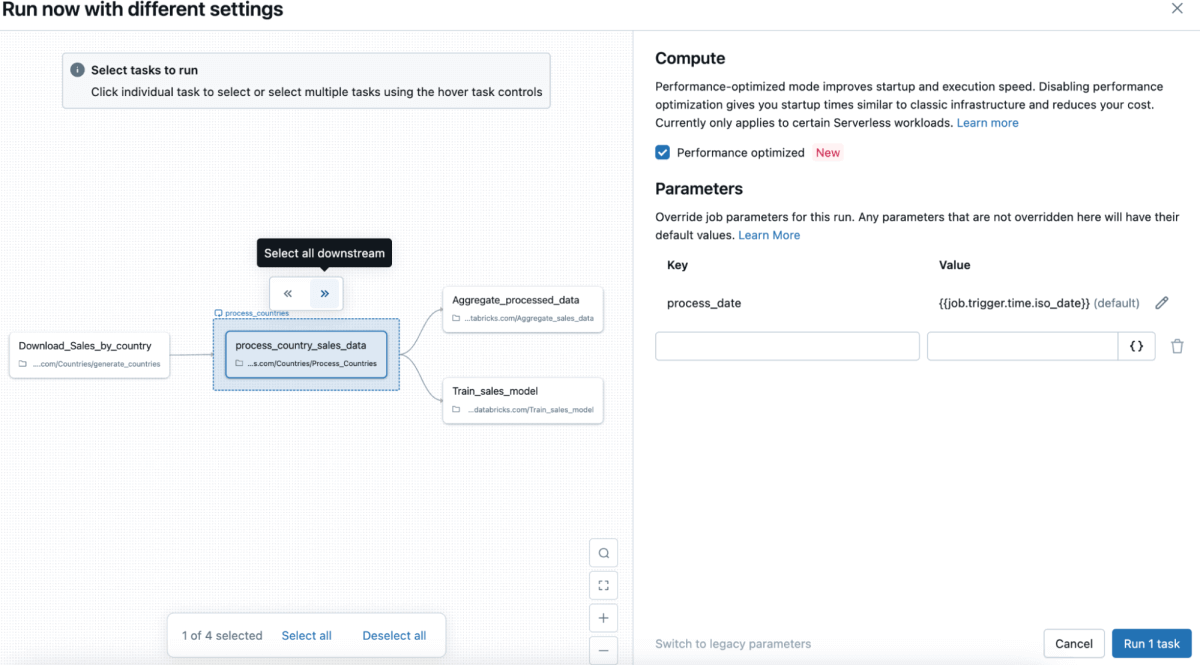

Partial runs allow users to select which tasks to execute without affecting others. Previously, testing individual tasks required running the entire job, which could be computationally intensive, slow, and costly. Now, on the Jobs & Pipelines page, users can select “Run now with different settings” and choose specific tasks to execute, avoiding wasted compute and high costs. Similarly, Partial repairs enable faster debugging by allowing users to fix individual failed tasks without rerunning the entire job.

With more control over their run and repair flows, customers can speed up development cycles, improve job uptime, and reduce compute costs. Both Partial runs and Partial repairs are generally available in the UI and the Jobs API.
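For teams that script their jobs rather than click through the UI, the two flows above can be sketched as request bodies. This is a minimal sketch under stated assumptions, not a definitive implementation: it assumes the Jobs API `run-now` request accepts an `only` list of task keys and the `runs/repair` request accepts a `rerun_tasks` list, both of which should be checked against the current Jobs API reference. The job ID, run ID, and task keys are hypothetical.

```python
import json


def build_partial_run_payload(job_id, task_keys):
    """Build a run-now request body that runs only the selected tasks.

    Assumption: the `only` field restricts the run to the listed task keys.
    """
    if not task_keys:
        raise ValueError("select at least one task for a partial run")
    return {"job_id": job_id, "only": list(task_keys)}


def build_partial_repair_payload(run_id, failed_task_keys):
    """Build a repair request body that reruns only the listed tasks.

    Assumption: the `rerun_tasks` field names the tasks to rerun.
    """
    if not failed_task_keys:
        raise ValueError("select at least one task to repair")
    return {"run_id": run_id, "rerun_tasks": list(failed_task_keys)}


# Hypothetical IDs and task keys, for illustration only.
run_payload = build_partial_run_payload(job_id=123, task_keys=["transform_orders"])
repair_payload = build_partial_repair_payload(run_id=456, failed_task_keys=["load_orders"])

print(json.dumps(run_payload))
print(json.dumps(repair_payload))
```

Each body would be sent as an authenticated POST to the workspace's `run-now` or `runs/repair` endpoint. The sketch stops short of the network call so it can be run and inspected anywhere.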

To all SQL fans out there, we have some good news for you! In this latest round of updates, customers can use SQL queries’ outputs as parameters in Lakeflow Jobs to orchestrate their data. This makes it easier for SQL developers to pass parameters between tasks and share context within a job, resulting in more cohesive and unified data pipeline orchestration. This feature is also now generally available.
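As a rough illustration of passing a SQL result downstream, the sketch below builds a two-task job fragment in which a notebook task receives a value from the first row of an upstream SQL task. It rests on assumptions: the reference syntax `{{tasks.<task_key>.output.first_row.<column>}}` and the `sql_task` and `notebook_task` field layouts should be confirmed against the Lakeflow Jobs documentation, and the task keys, column name, IDs, and notebook path are all hypothetical.

```python
def sql_first_row_ref(task_key, column):
    """Return a dynamic value reference to one column of a SQL task's first row.

    Assumption: Lakeflow Jobs resolves this template at run time.
    """
    return "{{tasks." + task_key + ".output.first_row." + column + "}}"


def build_tasks(warehouse_id, query_id, notebook_path):
    """Build two task definitions: a SQL query and a notebook that consumes its output."""
    upstream = {
        "task_key": "get_latest_date",
        "sql_task": {
            "warehouse_id": warehouse_id,
            "query": {"query_id": query_id},
        },
    }
    downstream = {
        "task_key": "process_latest",
        # Run only after the SQL task has produced its output.
        "depends_on": [{"task_key": upstream["task_key"]}],
        "notebook_task": {
            "notebook_path": notebook_path,
            "base_parameters": {
                "latest_date": sql_first_row_ref(upstream["task_key"], "latest_date"),
            },
        },
    }
    return [upstream, downstream]


# Hypothetical identifiers, for illustration only.
tasks = build_tasks("wh-001", "query-001", "/Workspace/etl/process_latest")
print(tasks[1]["notebook_task"]["base_parameters"]["latest_date"])
```

Keeping the reference in a small helper means the template string is written once, so a change in task key or column name cannot leave a stale reference in a downstream task.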

Quick-start with Lakeflow Connect in Jobs

In addition to these enhancements, we’re also making it fast and easy to ingest data into Lakeflow Jobs by more tightly integrating Jobs with Lakeflow Connect, Databricks Lakeflow’s managed and reliable data ingestion solution with built-in connectors.

Customers can already orchestrate ingestion pipelines that originate from Lakeflow Connect, using any of the fully managed connectors (e.g., Salesforce, Workday) or directly from notebooks. Now, with Lakeflow Connect in Jobs, customers can create an ingestion pipeline directly from two entry points in their Jobs interface, all within a point-and-click environment. Since ingestion is often the first step in ETL, this integration with Lakeflow Connect enables customers to consolidate and streamline their data engineering experience from end to end.

Lakeflow Connect in Jobs is now generally available for customers. Learn more about this and other recent Lakeflow Connect releases.

A single orchestrator for all your workloads

We’re consistently innovating on Lakeflow Jobs to offer our customers a modern and centralized orchestration experience for all their data needs across the organization. More features are coming to Jobs: we’ll soon unveil a way for users to trigger jobs based on table updates, provide support for system tables, and expand our observability capabilities, so stay tuned!

For those who want to keep learning about Lakeflow Jobs, check out our on-demand sessions from Data+AI Summit and explore Lakeflow in a variety of use cases, demos, and more!