
When you're building with AI, every decision counts, especially when it comes to cost. Whether you're just getting started or scaling enterprise-grade applications, the last thing you want is unpredictable pricing or rigid infrastructure slowing you down. Azure OpenAI is designed with that in mind: flexible enough for early experiments, powerful enough for global deployments, and priced to match how you actually use it.

From startups to the Fortune 500, more than 60,000 customers are choosing Azure AI Foundry. They choose it not just for access to foundational and reasoning models, but because it meets them where they are, with deployment options and pricing models that align to real business needs. This is about more than just AI; it's about making innovation sustainable, scalable, and accessible.

This blog breaks down the available pricing and deployment options, and the tools that support scalable, cost-conscious AI deployments.

Flexible pricing models that match your needs

Azure OpenAI supports three distinct pricing models designed to meet different workload profiles and business requirements:

- Standard: For bursty or variable workloads where you want to pay only for what you use.

- Provisioned: For high-throughput, performance-sensitive applications that require consistent throughput.

- Batch: For large-scale jobs that can be processed asynchronously at a discounted rate.

Each approach is designed to scale with you, whether you're validating a use case or deploying across business units.

Standard

The Standard deployment model is ideal for teams that want flexibility. You're charged per API call based on the tokens consumed, which helps optimize budgets during periods of lower usage.

Best for: Development, prototyping, or production workloads with variable demand.
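Because Standard billing is tokens consumed multiplied by a per-token rate, a rough budget is simple arithmetic. The sketch below assumes separate input and output rates quoted per million tokens. The dollar figures are hypothetical placeholders, not Azure prices, so substitute the rates for your model and deployment type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrices:
    """Per-million-token rates in USD. Values used here are hypothetical."""
    input_per_million: float
    output_per_million: float


def standard_call_cost(input_tokens: int, output_tokens: int, prices: TokenPrices) -> float:
    """Pay-as-you-go cost of one call: tokens consumed times the per-token rate."""
    return (
        input_tokens * prices.input_per_million
        + output_tokens * prices.output_per_million
    ) / 1_000_000


def total_standard_cost(calls: list[tuple[int, int]], prices: TokenPrices) -> float:
    """Sum over (input_tokens, output_tokens) pairs; idle periods cost nothing."""
    return sum(standard_call_cost(i, o, prices) for i, o in calls)


if __name__ == "__main__":
    prices = TokenPrices(input_per_million=2.00, output_per_million=8.00)  # hypothetical rates
    print(f"{standard_call_cost(1_000, 500, prices):.4f}")  # prints 0.0060
```

The empty-list case is the point of the Standard model: with no calls, the bill is zero.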

You can choose between:

- Global deployments: To ensure optimal latency across geographies.

- OpenAI Data Zones: For more flexibility and control over data privacy and residency.

With all deployment options, data is stored at rest within the Azure region selected for your resource.

Batch

The Batch model is designed for high-efficiency, large-scale inference. Jobs are submitted and processed asynchronously, with a 24-hour target turnaround, at up to 50% less than Global Standard pricing. Batch also features large-scale workload support, so you can submit massive volumes of bulk requests with minimal friction.

Best for: Large-volume tasks with flexible latency needs.

Typical use cases include:

- Large-scale data processing and content generation.

- Data transformation pipelines.

- Model evaluation across extensive datasets.
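Batch jobs are submitted as a file of requests rather than as individual API calls. The sketch below builds a JSON Lines input file and assumes the OpenAI-style batch request shape (`custom_id`, `method`, `url`, `body`) used by the Azure OpenAI Batch API. `my-batch-deployment` is a hypothetical deployment name, and the exact schema should be checked against the current documentation.

```python
import json


def build_batch_lines(prompts, deployment, system_prompt="You are a helpful assistant."):
    """Yield one JSON-encoded chat request per prompt.

    `deployment` is a placeholder for the name of your Batch deployment.
    """
    for idx, prompt in enumerate(prompts):
        request = {
            "custom_id": f"task-{idx}",  # used to match each response to its input
            "method": "POST",
            "url": "/chat/completions",
            "body": {
                "model": deployment,
                "messages": [
                    {"role": "system", "content": system_prompt},
                    {"role": "user", "content": prompt},
                ],
            },
        }
        yield json.dumps(request)


def write_batch_file(path, prompts, deployment):
    """Write the requests as JSON Lines: one complete request object per line."""
    with open(path, "w", encoding="utf-8") as fh:
        for line in build_batch_lines(prompts, deployment):
            fh.write(line + "\n")


if __name__ == "__main__":
    docs = ["Summarize document A.", "Summarize document B."]
    for line in build_batch_lines(docs, deployment="my-batch-deployment"):
        print(line)
```

Each `custom_id` is echoed back in the output, which lets results be matched to inputs when they arrive asynchronously. The file is then uploaded, and a job is created against it with a 24-hour completion window. With the `openai` Python package this is typically `client.files.create(..., purpose="batch")` followed by `client.batches.create(...)` on an `AzureOpenAI` client.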

Customer in action: Ontada

Ontada, a McKesson company, used the Batch API to transform over 150 million oncology documents into structured insights. By applying LLMs across 39 cancer types, they unlocked 70% of previously inaccessible data and cut document processing time by 75%. Learn more in the Ontada case study.

Provisioned

The Provisioned model provides dedicated throughput via Provisioned Throughput Units (PTUs). This enables stable latency and high throughput, making it ideal for production use cases that require real-time performance or processing at scale. Commitments can be hourly, monthly, or yearly, with corresponding discounts.

Best for: Enterprise workloads with predictable demand and the need for consistent performance.
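Whether Provisioned is cheaper than Standard depends on how much of the reserved capacity you actually use. The break-even arithmetic can be sketched as follows. All rates are hypothetical placeholders, and the 730-hour month is an approximation. The sketch also ignores reservation discounts and the number of PTUs needed to serve your peak throughput, and both matter in a real sizing exercise.

```python
HOURS_PER_MONTH = 730  # approximation: 8,760 hours per year / 12


def provisioned_monthly_cost(ptus: int, hourly_rate_per_ptu: float) -> float:
    """Fixed cost of keeping `ptus` units reserved for a whole month, used or not."""
    return ptus * hourly_rate_per_ptu * HOURS_PER_MONTH


def standard_monthly_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Variable cost: only tokens actually consumed are billed."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(ptus: int, hourly_rate_per_ptu: float, price_per_million: float) -> float:
    """Monthly token volume at which both models cost the same."""
    fixed = provisioned_monthly_cost(ptus, hourly_rate_per_ptu)
    return fixed / price_per_million * 1_000_000


if __name__ == "__main__":
    # Hypothetical: 100 PTUs at $1.00 per PTU-hour vs. a blended $5.00 per million tokens.
    tokens = break_even_tokens(100, 1.00, 5.00)
    print(f"{tokens:,.0f} tokens/month")  # prints 14,600,000,000 tokens/month
```

Under these made-up rates, the fixed cost is $73,000 per month. Provisioned wins on price only above roughly 14.6 billion tokens per month. Below that volume, its advantage is consistent latency rather than cost.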

Common use cases:

- High-volume retrieval and document processing scenarios.

- Call center operations with predictable traffic hours.

- Retail assistants with consistently high throughput.

Customers in action: Visier and UBS

- Visier built "Vee," a generative AI assistant that serves up to 150,000 users per hour. By using PTUs, Visier made response times three times faster than with pay-as-you-go models and reduced compute costs at scale. Read the case study.

- UBS created "UBS Red," a secure AI platform supporting 30,000 employees across regions. PTUs allowed the bank to deliver reliable performance with region-specific deployments across Switzerland, Hong Kong, and Singapore. Read the case study.

Deployment types for Standard and Provisioned

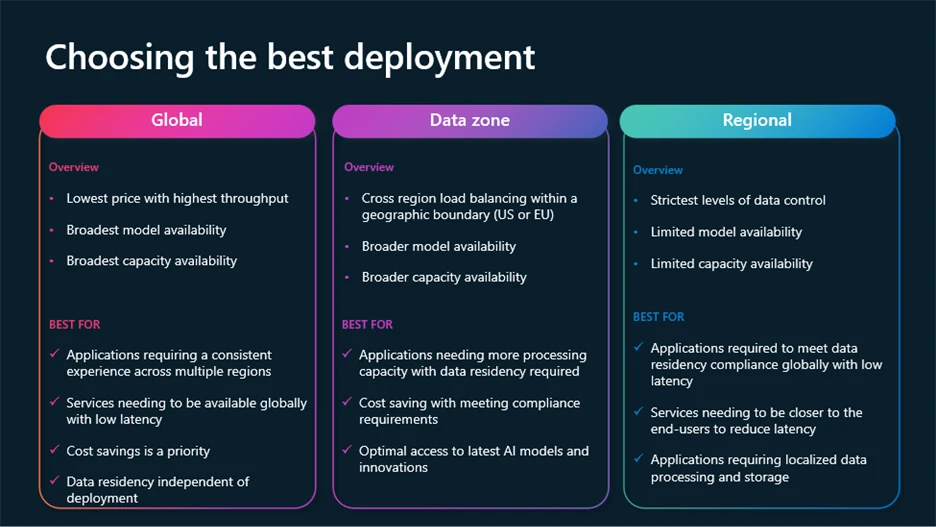

To meet growing requirements for control, compliance, and cost optimization, Azure OpenAI supports several deployment types:

- Global: The most cost-effective option. It routes requests through the global Azure infrastructure, with data residency at rest.

- Regional: Keeps data processing in a specific Azure region (28 available today), with data residency both at rest and during processing within the selected region.

- Data Zones: Offers a middle ground. Processing stays within a geographic zone (E.U. or U.S.) for added compliance, without the full cost overhead of Regional deployments.

Global and Data Zone deployments are available across the Standard, Provisioned, and Batch models.
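The trade-off among the three deployment types can be read as "pick the cheapest option whose residency guarantee is strict enough." The hypothetical helper below encodes that rule using only the properties described above. It is a decision aid, not an Azure API. Because Global and Data Zone are the types listed for Batch, it assumes Regional is not an option for batch jobs.

```python
from enum import IntEnum


class Residency(IntEnum):
    """Data-handling requirement; higher values are stricter."""
    AT_REST_IN_REGION = 0     # storage in your region; processing may be global
    PROCESSING_IN_ZONE = 1    # processing stays within a geography (E.U. or U.S.)
    PROCESSING_IN_REGION = 2  # processing stays within one Azure region


# Cheapest first, each paired with the strongest guarantee that type provides.
DEPLOYMENT_TYPES = [
    ("Global", Residency.AT_REST_IN_REGION),
    ("Data Zone", Residency.PROCESSING_IN_ZONE),
    ("Regional", Residency.PROCESSING_IN_REGION),
]
BATCH_TYPES = {"Global", "Data Zone"}  # assumption: the types listed for Batch


def cheapest_deployment_type(required: Residency, batch: bool = False) -> str:
    """Return the lowest-cost deployment type that satisfies `required`."""
    for name, guarantee in DEPLOYMENT_TYPES:
        if batch and name not in BATCH_TYPES:
            continue
        if guarantee >= required:
            return name
    raise ValueError(f"No deployment type satisfies {required.name} (batch={batch})")


if __name__ == "__main__":
    print(cheapest_deployment_type(Residency.PROCESSING_IN_ZONE))  # prints Data Zone
```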

Dynamic features help you cut costs while optimizing performance

Several new dynamic features designed to help you get the best results at lower cost are now available:

- Model router for Azure AI Foundry: A deployable AI chat model that automatically selects the best underlying chat model to respond to a given prompt. Well suited to diverse use cases, model router delivers high performance while saving on compute costs where possible, all packaged as a single model deployment.

- Batch large-scale workload support: Processes bulk requests at lower cost. Efficiently handle large-scale workloads and reduce processing time, with a 24-hour target turnaround, at up to 50% less cost than Global Standard.

- Provisioned throughput dynamic spillover: Provides seamless overflow for your high-performing applications on provisioned deployments. Manage traffic bursts without service disruption.

- Prompt caching: Built-in optimization for repeated prompt patterns. It accelerates response times, scales throughput, and helps cut token costs significantly.

- Azure OpenAI monitoring dashboard: Continuously track performance, usage, and reliability across your deployments.
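Prompt caching rewards requests that start with the same content, so the order in which you assemble a prompt matters. The sketch below counts the leading tokens two prompts share. It uses a toy whitespace tokenizer as a stand-in for the model's real tokenizer. It does not model the service's actual cache rules, such as minimum prompt length, which are documented separately. Contoso and the prompt text are hypothetical.

```python
def tokenize(text: str) -> list[str]:
    """Toy whitespace tokenizer; real models use subword tokenizers."""
    return text.split()


def build_prompt(static_context: str, user_input: str, static_first: bool = True) -> list[str]:
    """Assemble a prompt with the reusable context before or after the user input."""
    parts = [static_context, user_input] if static_first else [user_input, static_context]
    return tokenize(" ".join(parts))


def shared_prefix_len(a: list[str], b: list[str]) -> int:
    """Number of leading tokens two prompts have in common."""
    count = 0
    for x, y in zip(a, b):
        if x != y:
            break
        count += 1
    return count


if __name__ == "__main__":
    policy = "You are a support agent for Contoso. Follow the refund policy below exactly."
    q1, q2 = "Where is my order?", "Can I get a refund?"
    print(shared_prefix_len(build_prompt(policy, q1), build_prompt(policy, q2)))  # prints 13
    print(shared_prefix_len(build_prompt(policy, q1, False), build_prompt(policy, q2, False)))  # prints 0
```

Putting the static instructions first gives every request the same prefix. Putting the user's question first leaves nothing shared, so there is nothing for a prefix cache to reuse.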

To learn more about these features and how to take advantage of the latest innovations in Azure AI Foundry models, watch this session from Build 2025 on optimizing Gen AI applications at scale.

Beyond pricing and deployment flexibility, Azure OpenAI integrates with Microsoft Cost Management tools to give teams visibility into and control over their AI spend.

Capabilities include:

- Real-time cost analysis.

- Budget creation and alerts.

- Support for multi-cloud environments.

- Cost allocation and chargeback by team, project, or department.

These tools help finance and engineering teams stay aligned, making it easier to understand usage trends, track optimizations, and avoid surprises.
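Microsoft Cost Management handles allocation and budget alerts for you. The sketch below only illustrates the underlying logic on a list of exported cost records. The record shape (a `cost` field plus a `tags` dictionary), the `team` tag, and the 80% alert threshold are all hypothetical.

```python
from collections import defaultdict


def chargeback(records: list[dict], tag: str = "team") -> dict[str, float]:
    """Sum cost per value of `tag`; records without the tag go to 'untagged'."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        owner = record.get("tags", {}).get(tag, "untagged")
        totals[owner] += record["cost"]
    return dict(totals)


def budget_alerts(totals: dict[str, float], budgets: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Owners whose spend has reached `threshold` (for example, 80%) of their budget."""
    return sorted(
        owner for owner, spent in totals.items()
        if owner in budgets and spent >= threshold * budgets[owner]
    )


if __name__ == "__main__":
    records = [  # hypothetical exported cost records
        {"cost": 30.0, "tags": {"team": "search"}},
        {"cost": 5.0, "tags": {"team": "support"}},
        {"cost": 15.0, "tags": {"team": "search"}},
        {"cost": 2.5},
    ]
    totals = chargeback(records)
    print(totals)  # prints {'search': 45.0, 'support': 5.0, 'untagged': 2.5}
    print(budget_alerts(totals, {"search": 50.0, "support": 100.0}))  # prints ['search']
```

Routing untagged spend to its own bucket rather than dropping it keeps the per-team totals reconcilable with the overall bill.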

Built-in integration with the Azure ecosystem

Azure OpenAI is part of a larger ecosystem of Azure services.

This integration simplifies the end-to-end lifecycle of building, customizing, and managing AI solutions. You don't have to stitch together separate platforms, which means faster time-to-value and fewer operational headaches.

A trusted foundation for enterprise AI

Microsoft is committed to enabling AI that is secure, private, and safe. That commitment shows up not just in policy, but in product:

- Secure Future Initiative: A comprehensive security-by-design approach.

- Responsible AI principles: Applied across tools, documentation, and deployment workflows.

- Enterprise-grade compliance: Covering data residency, access controls, and auditing.

Get started with Azure AI Foundry