Nuclear reactors are among the most advanced engineered systems we operate at scale. Safe, reliable operation depends on tightly coupled physics, engineered barriers, rotating equipment, fluid systems, and control logic that has to behave correctly across normal operation and a long list of credible faults.

Consider the scenario: A feedwater valve closes unexpectedly. Within seconds, an engineer needs to know which downstream systems lose margin first, which Technical Specification limits become relevant, and whether the current plant lineup affects their options. The data to answer these questions exists across a dozen systems. The relationships that make the data meaningful live in the heads of experienced staff.

The gap between available data and usable knowledge defines one of the central challenges in nuclear plant operations today. An ontology closes that gap by making plant relationships explicit, queryable, and defensible.

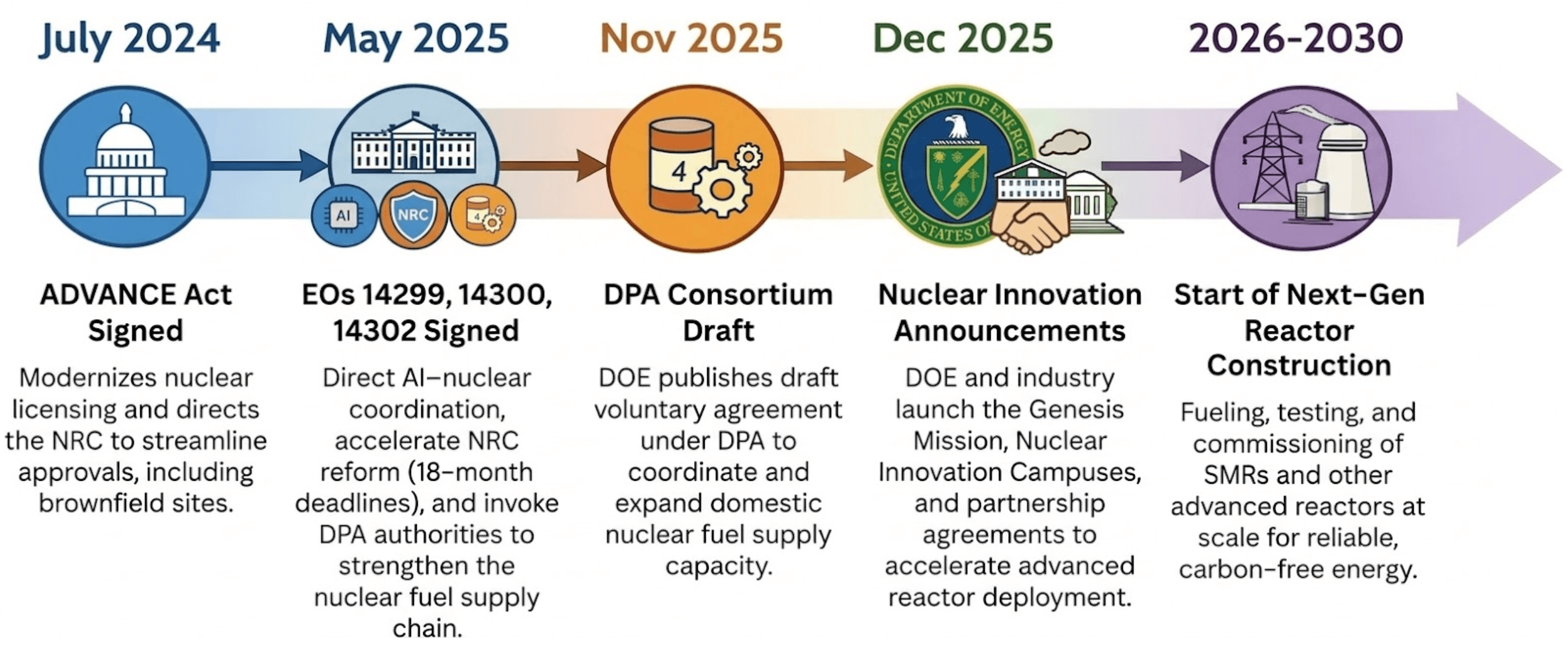

The United States is entering a “nuclear renaissance” not seen in decades. Beginning in 2024, a wave of legislation and executive action created tailwinds for nuclear energy to power everything from national security installations to the massive energy demands of the AI race. The ADVANCE Act modernized the U.S. Nuclear Regulatory Commission (NRC) licensing process, reduced fees, and directed the Commission to evaluate brownfield sites, such as former coal plants, for new builds. Executive Order (EO) 14300 went further, fundamentally shifting the NRC’s mission from risk minimization to weighing the benefits of nuclear energy for economic and national security, and compressing the current 42-month average licensing process into a binding 18-month deadline for new reactors. EO 14302 invoked the Defense Production Act (DPA) to reinvigorate the domestic nuclear industrial base, focusing on fuel supply chains and restarting shuttered plants. EO 14299 explicitly linked advanced nuclear deployment to AI data center demand, designating them as critical defense facilities to be powered by onsite reactors. Meanwhile, the U.S. Department of Energy (DOE) has funded U.S. nuclear companies with billions of dollars to accelerate progress on established plants and jumpstart newcomers building small modular reactors (SMRs).

That expansion is landing on a workforce trending the other way. The number of people available to develop and defend licensing submissions is shrinking by about 10% annually, and the same pressure extends well beyond licensing. New designs, uprates, life-extension work, and digital upgrades all rely on the same chain of reasoning: what equipment is credited, which constraints apply in the current configuration, and which controlled sources support the conclusion. That chain runs through every phase of the plant lifecycle, from design through commissioning into daily operations. Today, it still depends largely on the people who carry it.

The cost of implicit knowledge

Experienced operators and engineers carry remarkable mental models of their plants. When a senior reactor operator sees rising vibration on a circulating water pump, they immediately connect that signal to the pump’s role in the current lineup, known failure patterns for that equipment class, recent work history, and the consequences they’d expect if the condition progresses. They know which corroborating indications matter, which ones mislead, and what questions to ask next.

That mental model represents decades of accumulated context. It also represents a vulnerability.

The International Atomic Energy Agency (IAEA) projects global nuclear capacity could reach 992 GWe by 2050, roughly 2.6 times current levels. New builds mean new designs, more instrumentation, and more configuration states that operators and engineers must understand. Meanwhile, DOE workforce data shows experienced staff concentrated in older age brackets. The people who carry the deepest plant knowledge are retiring, and they’re taking their mental models with them.

While newer staff bring technical aptitude, they often lack exposure to site-specific failure signatures and historical configurations. To optimize operations at a plant, both new and existing personnel need direct access to accurate, up-to-date empirical data so they can make informed decisions. Making that data available also supports DOE energy goals by preparing the workforce to manage high-instrumentation designs.

The way nuclear plants manage knowledge today has worked. It’s kept the U.S. fleet operating safely for decades. The engineers who carry plant context in their heads aren’t the problem to be solved; they’re an asset to be preserved and extended. But preservation isn’t enough when the mandate shifts from maintaining 100 GW toward 400 GW. The current approach can’t move at the speed the fleet requires today, not because it’s wrong, but because it was designed for a different pace.

An ontology that closes the gap

The nuclear industry has recognized this problem, and several organizations are already working on it. Idaho National Laboratory built DeepLynx, an open-source integration framework designed to connect engineering tools and preserve context across the lifecycle. Their DIAMOND initiative developed data structures specifically for nuclear design and operational data. ISO 15926 and IEC 81346 established common frameworks for lifecycle data and equipment identification. NRC guidance on digital systems continues to push toward transparency, traceability, and performance-based evidence.

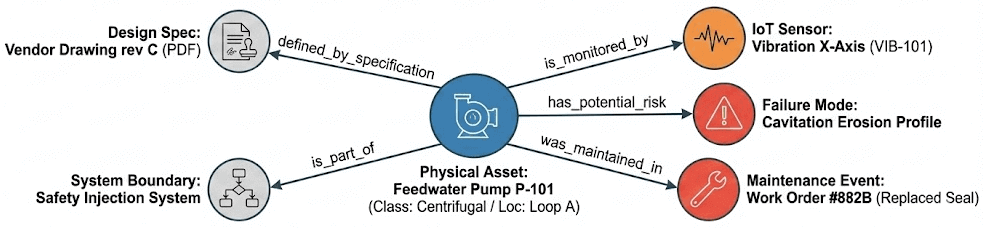

What these efforts share is a common approach. It starts by defining the objects a plant reasons about (systems, components, sensors, documents, constraints, licensing commitments) and then defines how they connect. A pump belongs to a system. A sensor measures a variable on a component. A valve defines part of an isolation boundary. A component inherits qualification requirements from its installed location. A licensing commitment traces to the configuration assumptions that support it. That structure is an ontology.

Consider a related scenario: a single motor-operated valve replacement requires an engineer to pull from 6+ systems, reconcile 3 to 4 naming conventions, and verify roughly 12 document revisions, which can take 4 to 8 hours. That work is ephemeral: when the next question about the same component comes up, the effort starts over.

Nuclear systems run on relationships and dependencies. An ontology makes those relationships explicit, searchable, and defensible. The relationships in a nuclear plant aren’t tabular. A change to one component affects the boundary it supports, the train it belongs to, and the constraints it inherits. Graph structures map naturally to that kind of reasoning, but that doesn’t mean you need a separate graph database.

Ontologies encode these relationships as triples, atomic units that link two entities with a specific relationship. They also encode business rules directly into the structure using standards such as RDF (Resource Description Framework) and SHACL (Shapes Constraint Language). Concrete criteria define what constitutes valid data: safety constraints, configuration rules, and qualification requirements. These rules become part of the data model itself, so violations surface structurally rather than depending on someone catching them during review.
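To make the triple idea concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not a production RDF store: the identifiers (`MOV-101`, `belongsToTrain`, `inheritsEQFrom`, `Room-214`) and the `REQUIRED` rule are hypothetical, and a real deployment would likely express the same facts as RDF triples and the rule as a SHACL shape.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    """One atomic statement: subject --predicate--> object."""
    subject: str
    predicate: str
    obj: str


# Hypothetical plant facts; the names are illustrative, not from a real plant.
TRIPLES = [
    Triple("MOV-101", "isA", "MotorOperatedValve"),
    Triple("MOV-101", "belongsToTrain", "TrainA"),
    Triple("MOV-101", "definesPartOf", "ContainmentIsolationBoundary"),
    Triple("MOV-101", "inheritsEQFrom", "Room-214"),
    Triple("MOV-102", "isA", "MotorOperatedValve"),
    Triple("MOV-102", "belongsToTrain", "TrainB"),
]

# A SHACL-like rule reduced to its simplest form (an assumption for this
# sketch): every entity of a class must have each required predicate.
REQUIRED = {"MotorOperatedValve": ["belongsToTrain", "inheritsEQFrom"]}


def objects(subject: str, predicate: str) -> list:
    """Return every object linked to `subject` by `predicate`."""
    return [t.obj for t in TRIPLES
            if t.subject == subject and t.predicate == predicate]


def missing_required() -> list:
    """List (entity, predicate) pairs that violate the required-predicate rule."""
    violations = []
    for t in TRIPLES:
        if t.predicate != "isA":
            continue
        for pred in REQUIRED.get(t.obj, []):
            if not objects(t.subject, pred):
                violations.append((t.subject, pred))
    return violations


print(objects("MOV-101", "belongsToTrain"))
print(missing_required())
```

In this toy data set, `MOV-102` has no `inheritsEQFrom` statement, so the rule reports it. The violation comes from the shape of the data itself, not from a reviewer noticing the gap.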

The ontology and its curated triples are the durable asset. They persist beyond any specific application or user interface. Open standards like RDF and OWL (Web Ontology Language) ensure the data stays portable, so it aligns with existing industry ontologies and creates clean interchange formats for supplier data and licensing submittals. Nothing gets locked in. But the data still needs somewhere to be governed, versioned, and queried at scale.

For nuclear applications, the ontology needs to do three things well to be worth building.

- Canonical identity over time. The same pump might appear as “P-123” in work management, “P123_DIS_PRES” in the historian, and “P-123A” in drawings. The ontology resolves these to a single entity and tracks how that entity changes through replacements, modifications, and outages. You can answer “what’s installed now” and “what was installed when we made that decision” from the same structure.

- Explicit relationships. Not just “this component exists” but “this component belongs to Train A, defines part of the containment isolation boundary, is measured by these sensors, and inherits environmental qualification (EQ) constraints from its location.” The relationships that experienced engineers hold in their heads become visible and traversable.

- Explicit sourcing of asset constraints. When a valve has a specific leakage limit, it’s important to know where that constraint comes from and why. An ontology traces it back explicitly to the specific technical specifications that underpin it.
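The first of these requirements, canonical identity over time, can be sketched as an alias table plus a dated installation history. This is a minimal illustration: the canonical ID format (`pump:P-123`), the source-system names, the dates, and the serial numbers are all hypothetical.

```python
from bisect import bisect_right
from datetime import date
from typing import Optional

# Hypothetical alias table: (source system, local tag) -> canonical entity ID.
ALIASES = {
    ("work_management", "P-123"): "pump:P-123",
    ("historian", "P123_DIS_PRES"): "pump:P-123",
    ("drawings", "P-123A"): "pump:P-123",
}

# Hypothetical installation history per canonical ID, sorted by install date.
# Each entry is (date installed, serial number of the installed unit).
INSTALL_HISTORY = {
    "pump:P-123": [
        (date(2009, 4, 1), "SN-0001"),
        (date(2021, 10, 15), "SN-0457"),  # replaced during an outage
    ],
}


def resolve(source: str, tag: str) -> str:
    """Map a source-specific tag to its canonical entity ID."""
    return ALIASES[(source, tag)]


def installed_as_of(entity: str, when: date) -> Optional[str]:
    """Return the serial installed on `when`, or None if nothing was installed yet."""
    history = INSTALL_HISTORY[entity]
    idx = bisect_right([installed for installed, _ in history], when)
    return history[idx - 1][1] if idx else None


entity = resolve("historian", "P123_DIS_PRES")
print(entity, installed_as_of(entity, date(2015, 1, 1)))
print(entity, installed_as_of(entity, date(2022, 1, 1)))
```

The same lookup answers both “what’s installed now” and “what was installed when we made that decision”; only the date argument changes.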

Operating within nuclear’s regulatory boundaries

Nuclear is one of the most heavily regulated industries in the world, and for good reason. A range of regulatory frameworks may apply, including export control rules such as the Export Administration Regulations (EAR) and Title 10 of the Code of Federal Regulations, Part 810 (10 CFR Part 810), as well as data protection and emerging AI governance requirements such as GDPR and the EU AI Act. These obligations can affect where analysis occurs, how evidence is stored, what information can be shared across borders or outside defined boundaries, and who can access it. Taken together, these regulations directly shape how digital infrastructure in nuclear is designed, deployed, and governed.

An ontology provides a way to separate structure from sensitive content. Plant relationships, constraints, and configuration logic can be defined and maintained as a distinct layer, separate from the operational data beneath. Engineers can work with the full relational context of the plant, querying how components connect, what constraints apply, and where those constraints originate, without the underlying operational data leaving controlled environments. Scenario libraries built on the ontology’s structure can be versioned, reviewed, and shared as governed assets, grounded in real plant physics without exposing protected information.

For new builds, this is especially relevant. Design verification, vendor collaboration, and licensing analysis all involve multiple organizations exchanging technical information under export control scrutiny. An ontology lets you share the structure and relationships that support engineering decisions without distributing sensitive operational data or proprietary design details. Vendors, constructors, and operators can work from a common framework while each organization maintains control over its own protected information. That reduces the friction that often slows down multi-party nuclear programs and helps keep first-of-a-kind designs on schedule.

For operating facilities, the same principle applies. You can develop and validate reasoning frameworks, train new staff on plant context, and prepare compliance packages without moving sensitive data outside appropriate boundaries.

A practical way to understand what an ontology does is to walk through a single workflow.

Use case: design validation and configuration control

Design validation and configuration control drive the same question over and over: given the plant’s current configuration, is this change acceptable, and can we prove it from controlled sources? Any time you touch a safety-related component, update a design input, substitute a part, or revise a calculation, you have to re-establish context across systems. What exactly is this component in this plant? Where is it installed? What safety function or boundary does it support? What requirements does it inherit from that location? Which documents control the work window? The data to answer these questions exists. The connections between the data usually don’t.

Outages stress-test this. Equipment gets replaced under schedule pressure. Field work, procurement, and engineering review run in parallel. The errors that create real pain are rarely dramatic. They’re quiet mismatches that surface late: a qualification basis that doesn’t match the installed location, a drawing revision that wasn’t current, an incorrect train assignment, a boundary assumption that changed, or an operating envelope limit pulled from the wrong source.

A common example is replacing a motor-operated valve on a safety-related line. Before an engineer can even evaluate the replacement, they have to rebuild the context: what system and train it belongs to, what boundary or credited function it supports, which EQ and seismic requirements apply at that location, what operating limits govern the component, and which controlled documents establish those limits.

Today, every step of that is manual. The engineer opens the work order for a tag number, then separately navigates to the drawing set for boundary context, pulls up qualification and seismic records from another system, tracks down the controlling calculations for operating limits, and checks revision status. Each lookup is a separate system, a separate search, and a separate judgment call about whether the information is current. Then the engineer synthesizes it all in their head to determine whether the replacement is acceptable. If someone else asks the same question later (an inspector, a reviewer, or a different shift), the process starts over.

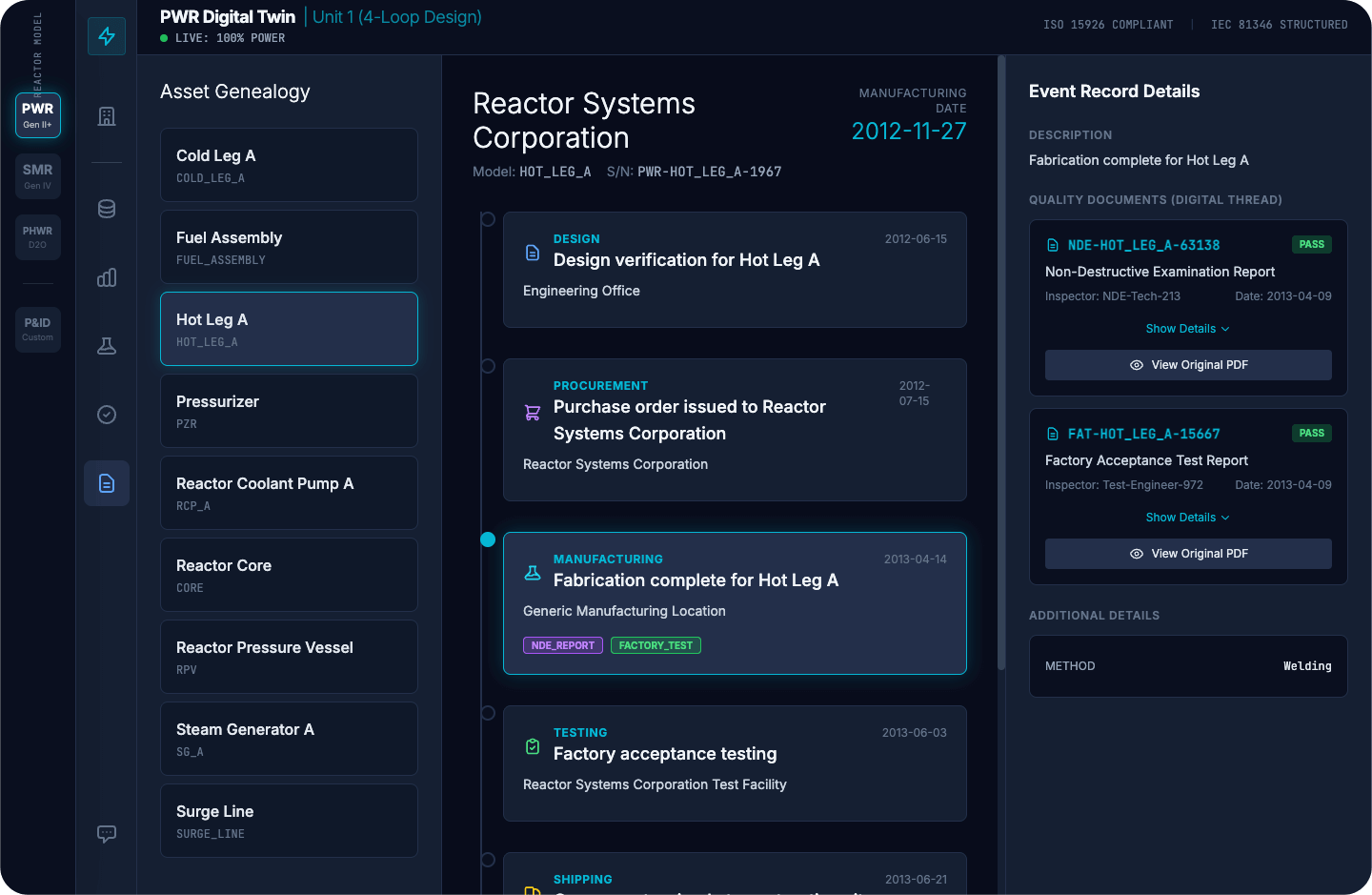

A plant ontology changes this by making the evidence chain part of the structure. The component has a canonical identity. That identity links to its installed location and configuration state, and from there to the requirements that follow: train assignment, boundary role, EQ and seismic constraints, operating envelope limits, and the authoritative sources that define them. The engineer starts from the component, and the relationships are already there. The full lifecycle record (design verification, procurement, manufacturing, testing, and shipping) is reachable from that single identity. Supporting quality documents like NDE reports, factory acceptance tests, and traceable references link directly to the component rather than sitting in separate systems waiting to be found.
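Starting from the component and following relationships outward can be sketched as a breadth-first walk over a small graph. This is only an illustration: the node names, relationship labels, and document identifiers below are hypothetical, and a governed ontology would hold far more edge types.

```python
from collections import deque

# Hypothetical edges: entity -> list of (relationship, target).
GRAPH = {
    "MOV-101": [("installedAt", "Room-214"), ("assignedTo", "TrainA")],
    "Room-214": [("imposes", "EQ-Harsh-Env"), ("imposes", "Seismic-Cat-I")],
    "EQ-Harsh-Env": [("definedBy", "DOC-EQ-001 rev 4")],
    "Seismic-Cat-I": [("definedBy", "DOC-SEIS-010 rev 2")],
}


def evidence_chain(start: str) -> list:
    """Breadth-first walk from `start`; returns every (source, relationship, target) edge reached."""
    seen = {start}
    queue = deque([start])
    chain = []
    while queue:
        node = queue.popleft()
        for rel, target in GRAPH.get(node, []):
            chain.append((node, rel, target))
            if target not in seen:  # visit each entity once, even if linked twice
                seen.add(target)
                queue.append(target)
    return chain


for edge in evidence_chain("MOV-101"):
    print(" -> ".join(edge))
```

The walk ends at the controlling documents, so the list it returns is the chain an engineer would otherwise assemble by hand from separate systems.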

Because the constraints and their sources are encoded in the structure, tooling can be built that flags when something doesn’t align, such as an incorrect EQ basis, an outdated revision, or a mismatched train assignment. The engineer still makes the call. The infrastructure gets them there faster and provides a complete picture, rather than a partial one assembled under time pressure.
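A flagging tool of that kind can be sketched as a comparison between what a work package claims and what the governed structure holds. The field names, the `GOVERNED` lookup, and the example values are assumptions made for this illustration; real checks would be driven by the ontology’s own constraint definitions.

```python
from dataclasses import dataclass


@dataclass
class ReplacementPackage:
    """What a replacement work package claims (hypothetical fields)."""
    tag: str
    train: str
    eq_basis: str
    drawing_rev: int


# Hypothetical governed facts the ontology would hold for each tag.
GOVERNED = {
    "MOV-101": {"train": "A", "eq_basis": "EQ-Harsh-Env", "drawing_rev": 7},
}


def flag_mismatches(pkg: ReplacementPackage) -> list:
    """Compare a package against governed facts and describe each mismatch."""
    facts = GOVERNED[pkg.tag]
    flags = []
    if pkg.train != facts["train"]:
        flags.append(f"train: package says {pkg.train}, governed value is {facts['train']}")
    if pkg.eq_basis != facts["eq_basis"]:
        flags.append(f"EQ basis: package says {pkg.eq_basis}, governed value is {facts['eq_basis']}")
    if pkg.drawing_rev < facts["drawing_rev"]:
        flags.append(f"drawing revision {pkg.drawing_rev} is older than current revision {facts['drawing_rev']}")
    return flags


package = ReplacementPackage("MOV-101", train="A", eq_basis="EQ-Mild-Env", drawing_rev=6)
for flag in flag_mismatches(package):
    print(flag)
```

The function only reports differences. Deciding whether a flagged package is acceptable remains with the engineer.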

Running the ontology at scale

An ontology is only as useful as the platform running it. Relationships, identities, and constraints need to be governed, versioned, and queryable at scale. The platform has to stay aligned with the plant’s actual state throughout outages, modifications, temporary alterations, and document updates, with auditability that holds up under inspection. If it can’t do that, the ontology drifts, and people stop trusting it.

The ontology encodes plant relationships, constraints, and configuration logic in open standards. The platform that governs it needs to match that openness. If the governance layer is proprietary, it doesn’t matter how portable the ontology is on paper. In an industry where a component’s lifecycle record needs to be auditable by an operator, reviewable by the NRC, and traceable by an OEM across decades, the ability to share data cleanly between organizations and tools is table stakes.

Databricks is built on open formats and open interfaces. Ontology triples, component registries, relationship tables, and constraint records all sit on Delta Lake and are accessible from other tools. If you need to share subsets with a partner or regulator, the formats are standardized. Nothing is locked in.

On that foundation, four capabilities come up repeatedly in nuclear work:

- Unified governance. When QA or the NRC asks how a specific asset was controlled, the answer must be consistent across component identity, document control, relationships, and licensing basis references. That falls apart when each of those lives under a separate permission model. Unity Catalog provides a single governance layer across the entire ontology. Permissions, change tracking, and auditing apply uniformly across every asset, so there’s one defensible answer rather than four partial ones.

- Time-indexed configuration. Engineering and licensing decisions depend on the plant state at a specific point in time. Under 10 CFR 50.59, plants evaluate whether a proposed change requires prior NRC approval by assessing its impact against the existing licensing basis. That evaluation is only as good as the configuration data behind it, and the same is true for operability determinations, setpoint basis questions, post-modification validation, and routine outage reviews. All of them require knowing what was installed and which revisions were controlling at the time a decision was made. Delta Lake’s time-travel capability supports as-designed, as-built, as-installed, and as-maintained views from the same underlying data, without requiring separate manual snapshots. Table versions are retained and queryable, subject to the table’s retention settings, so reconstructing the plant state at a prior decision point is a query rather than an archaeology project.

- Reproducible evidence chains. 10 CFR 50 Appendix B establishes the quality assurance requirements for safety-related systems, structures, and components. Having the right conclusion isn’t sufficient if you can’t reproduce the basis from controlled sources. Unity Catalog’s automated lineage tracking captures which document revisions, constraint records, and relationship versions were used in a specific workflow. Delta Lake’s audit log records every mutation to the underlying data. Together, when a reviewer or inspector needs to see what supported a decision, the platform provides a complete, timestamped answer rather than requiring someone to piece it together after the fact.

- Analytics on governed data. Governance, versioning, and lineage ensure the data is in a trustworthy state. The next question is what you can do with it once it’s there. Databricks Lakeflow Jobs provide the orchestration layer for analytical pipelines that operate directly on the ontology’s governed assets. MLflow tracks model versions, training data, parameters, and outputs with the same rigor that Unity Catalog applies to the data itself. Condition monitoring models can track degradation patterns across an entire valve class by pulling maintenance history, sensor trends, and design limits from the governed structure. Proposed changes can be screened automatically against the licensing basis because the constraints and their sources are already encoded. The models and their outputs trace back to controlled sources through the same lineage that the platform provides for everything else. That traceability is what separates analytics that inform decisions from analytics that can actually be credited in a regulated environment.
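The time-indexed configuration idea in the list above can be illustrated with a toy append-only table in plain Python. This is a conceptual sketch only: it is not how Delta Lake is implemented, and the class, method names, and records are hypothetical. On Delta Lake itself, the comparable read would use the SQL `VERSION AS OF` or `TIMESTAMP AS OF` clauses.

```python
import copy


class VersionedTable:
    """Append-only history of table states; every commit creates a new version."""

    def __init__(self):
        self._versions = [{}]          # version 0 is the empty table
        self._log = ["create table"]   # one reason per version

    def commit(self, tag, record, reason):
        """Upsert one record as a new version, log why, and return the version number."""
        snapshot = copy.deepcopy(self._versions[-1])
        snapshot[tag] = record
        self._versions.append(snapshot)
        self._log.append(reason)
        return len(self._versions) - 1

    def as_of(self, version):
        """Return a copy of the table exactly as it stood at `version`."""
        return copy.deepcopy(self._versions[version])

    def history(self):
        """Return (version, reason) pairs, oldest first."""
        return list(enumerate(self._log))


table = VersionedTable()
v1 = table.commit("MOV-101", {"serial": "SN-0001", "drawing_rev": 6}, "as-built baseline")
v2 = table.commit("MOV-101", {"serial": "SN-0457", "drawing_rev": 7}, "outage replacement")

print(table.as_of(v1)["MOV-101"])  # state when an earlier decision was made
print(table.as_of(v2)["MOV-101"])  # state after the replacement
print(table.history())
```

Because earlier versions are never overwritten, the state behind a past decision can be read back directly instead of being reconstructed from separate snapshots.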

This connects directly to where DOE funding is heading. The DOE’s Genesis Mission is building the next generation of digital tools for the energy sector, covering advanced simulation, digital twins, AI-assisted design, and operational analytics. The ontology and governed data you stand up today for configuration control and compliance are the same assets that these programs will build on. The infrastructure that reduces today’s cycle time and rework becomes the foundation for what comes next. An open platform means the investment carries forward rather than requiring a rewrite when the requirements evolve.

Business and strategic implications

The value of an ontology compounds. Because the structure persists, the work done to resolve a component’s context for one decision carries forward to the next.

For the existing fleet, plants are extending operations, taking on more complex modifications, and doing it with a smaller pool of experienced staff under tighter regulatory timelines. What used to take days of pulling from separate systems to assemble a conformance package can now be compressed into a structured query against relationships that already exist. Inspection-ready evidence bundles that used to require reconstructing the basis from memory can be assembled from the structure that’s already in place. The percentage of assets with resolved canonical identity across data sources climbs steadily as the ontology matures.

For new builds, the advantages begin in the design phase and continue through licensing. If the ontology is in place early, the relationships between design intent, credited functions, and licensing commitments are structured before the first component ships. Constraint mismatches get flagged during design review because constraints and their sources are encoded in the structure. Without that, they’re often discovered during field installation, when the cost of correction is orders of magnitude higher. Licensing evidence assembles as the design matures rather than getting reconstructed after the fact. The result is fewer rework cycles, faster coordination among vendors and constructors, and lower costs to demonstrate safety. The safety standard doesn’t change. The work required to show you’ve met it does.

Once the ontology is working for configuration control, it doesn’t stay there. The same relationships that support a valve replacement also support the condition-monitoring program tracking degradation for that valve class. The same constraint lineage that feeds a compliance package feeds the licensing analysis for the next uprate. Because the ontology is built on standards-aligned identity and constraint lineage, it provides OEMs, engineering firms, and regulators with a common reference point rather than another system to integrate with.

That changes how new engineers come up to speed. Instead of building context by finding the right person to ask, they can query a component and see its train assignment, boundary role, constraint sources, and maintenance history in one place. Institutional knowledge becomes infrastructure rather than something that walks out the door with retirement. Experienced staff spend less time answering the same contextual questions and more time on the judgment calls that actually need their expertise.

If the fleet is going to quadruple in capacity and modernize at the same time, this is the kind of infrastructure that has to be planned early and carried forward.

Building the foundation for nuclear digital transformation

Ready to explore how ontologies can strengthen knowledge management and decision-making for the nuclear industry? Download the Databricks Solution Accelerator for Digital Twins in Manufacturing, accelerate your implementation using Ontos from Databricks Labs, or read How to Build Digital Twins for Operational Efficiency on the Databricks Blog to see the reference architecture in practice.

If you want to apply these concepts to your own systems, workflows, and governance constraints, reach out to your Databricks account team to discuss a scoped starting point.