Organizations face an ever-increasing need to process and analyze data in real time. Traditional batch processing methods no longer suffice in a world where instant insights and immediate responses to market changes are crucial for maintaining a competitive advantage. Streaming data has emerged as the cornerstone of modern data architectures, helping businesses capture, process, and act upon data as it's generated.

As customers move from batch to real-time processing for streaming data, organizations face another challenge: scaling data management across the enterprise, because the centralized data platform can become the bottleneck. Data mesh for streaming data has emerged as a solution to this challenge, building on the following principles:

- Distributed domain-driven architecture – Moving away from centralized data teams to domain-specific ownership

- Data as a product – Treating data as a first-class product with clear ownership and quality standards

- Self-serve data infrastructure – Enabling domains to manage their data independently

- Federated data governance – Following global standards and policies while allowing domain autonomy

A streaming mesh applies these principles to real-time data movement and processing across decentralized domains. It provides a flexible, scalable framework for continuous data flow while preserving the data mesh principles of domain ownership and self-service. In doing so, a streaming mesh breaks down traditional silos in data integration and distribution and helps organizations create more dynamic, responsive data ecosystems.

AWS provides two main solutions for streaming ingestion and storage: Amazon Managed Streaming for Apache Kafka (Amazon MSK) and Amazon Kinesis Data Streams. These services are key to building a streaming mesh on AWS. In this post, we explore how to build a streaming mesh using Kinesis Data Streams.

Kinesis Data Streams is a serverless streaming data service that makes it straightforward to capture, process, and store data streams at scale. The service can continuously capture gigabytes of data per second from hundreds of thousands of sources, making it well suited for building streaming mesh architectures. Key features include automatic scaling, on-demand provisioning, built-in security controls, and the ability to retain data for up to 365 days for replay.

Benefits of a streaming mesh

A streaming mesh can deliver the following benefits:

- Scalability – Organizations can scale from processing thousands to millions of events per second using managed scaling capabilities such as Kinesis Data Streams on-demand mode, while keeping operations transparent for both producers and consumers.

- Speed and architectural simplification – A streaming mesh enables real-time data flows, alleviating the need for complex orchestration and extract, transform, and load (ETL) processes. Data is streamed directly from source to consumers as it's produced, simplifying the overall architecture. This approach replaces intricate point-to-point integrations and scheduled batch jobs with a streamlined, real-time data backbone. For example, instead of running nightly batch jobs to synchronize inventory data for physical goods across regions, a streaming mesh enables instant inventory updates across all systems as sales occur, significantly reducing architectural complexity and latency.

- Data synchronization – A streaming mesh captures source system changes once and lets multiple downstream systems independently process the same data stream. For instance, a single order processing stream can simultaneously update inventory systems, shipping services, and analytics platforms while retaining the ability to replay, minimizing redundant integrations and keeping data consistent.
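The following toy sketch illustrates the data synchronization idea without any AWS calls. An in-memory, append-only log stands in for a stream, and each downstream system keeps its own read position. One system can therefore rewind and replay without affecting the others. The class names and order IDs are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class InMemoryStream:
    """Toy append-only log standing in for a single stream shard."""
    records: list = field(default_factory=list)

    def append(self, record: dict) -> int:
        self.records.append(record)
        return len(self.records) - 1  # the position plays the role of a sequence number

    def read_from(self, position: int) -> list:
        return self.records[position:]


@dataclass
class IndependentConsumer:
    """Each consumer tracks its own checkpoint, so consumers never interfere."""
    name: str
    checkpoint: int = 0

    def poll(self, stream: InMemoryStream) -> list:
        batch = stream.read_from(self.checkpoint)
        self.checkpoint += len(batch)
        return batch

    def replay_from(self, position: int) -> None:
        self.checkpoint = position


orders = InMemoryStream()
for order_id in ("o-1", "o-2", "o-3"):
    orders.append({"order_id": order_id})

inventory = IndependentConsumer("inventory")
analytics = IndependentConsumer("analytics")

inventory.poll(orders)   # inventory processes all three orders
analytics.replay_from(1)  # analytics rewinds to the second order
replayed = [r["order_id"] for r in analytics.poll(orders)]  # ['o-2', 'o-3']
```

Because the log is never consumed destructively, adding a fourth downstream system only means adding another checkpoint. No producer-side change is needed.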

The following personas have distinct responsibilities in the context of a streaming mesh:

- Producers – Producers are responsible for generating and emitting data products into the streaming mesh. They have full ownership of the data products they generate and must make sure that these data products adhere to predefined data quality and format standards. Producers also manage the schema evolution of the streaming data and must meet service level agreements for data delivery.

- Consumers – Consumers are responsible for consuming and processing data products from the streaming mesh. They rely on the data products provided by producers to support their applications or analytics needs.

- Governance – Governance is responsible for maintaining both the operational health and the security of the streaming mesh platform. This includes managing scalability to handle changing workloads, enforcing data retention policies, and optimizing resource utilization for efficiency. Governance teams also oversee security and compliance, enforcing proper access control, data encryption, and adherence to regulatory standards.

The streaming mesh establishes a common platform that enables seamless collaboration between producers, consumers, and governance teams. By clearly defining responsibilities and providing self-service capabilities, it removes traditional integration barriers while maintaining security and compliance. This approach helps organizations break down data silos and achieve more efficient, flexible data usage across the enterprise.

A streaming mesh architecture consists of two key constructs: stream storage and the stream processor. Stream storage serves all three key personas (governance, producers, and consumers) by providing a reliable, scalable, on-demand platform for data retention and distribution.

The stream processor is essential for consumers reading and transforming the data. Kinesis Data Streams integrates with a variety of processing options. AWS Lambda can read from a Kinesis data stream through an event source mapping. An event source mapping is a Lambda resource that reads items from the stream and invokes a Lambda function with batches of records. Other processing options include the Kinesis Client Library (KCL) for building custom consumer applications, Amazon Managed Service for Apache Flink for complex stream processing at scale, Amazon Data Firehose, and more. To learn more, refer to Read data from Amazon Kinesis Data Streams.

This combination of storage and flexible processing capabilities supports the diverse needs of multiple personas while maintaining operational simplicity.
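The following handler sketches the Lambda option. It decodes the batch of records that an event source mapping delivers. Kinesis record payloads arrive base64-encoded under `kinesis.data`. The sketch assumes producers write UTF-8 JSON, and the values in the sample event are hypothetical.

```python
import base64
import json


def decode_records(event: dict) -> list:
    """Turn the Kinesis records in a Lambda event into plain dicts."""
    decoded = []
    for record in event.get("Records", []):
        payload = base64.b64decode(record["kinesis"]["data"])
        decoded.append({
            "partition_key": record["kinesis"]["partitionKey"],
            "sequence_number": record["kinesis"]["sequenceNumber"],
            "body": json.loads(payload),  # assumes producers write UTF-8 JSON
        })
    return decoded


def lambda_handler(event, context):
    records = decode_records(event)
    print(f"received {len(records)} records")  # placeholder for real processing
    # With ReportBatchItemFailures enabled, an empty list marks the whole batch as processed.
    return {"batchItemFailures": []}


# Local usage with a hand-built event (hypothetical values).
sample_event = {"Records": [{"kinesis": {
    "partitionKey": "order-42",
    "sequenceNumber": "1",
    "data": base64.b64encode(json.dumps({"sku": "A1", "qty": 2}).encode()).decode(),
}}]}
decoded = decode_records(sample_event)
response = lambda_handler(sample_event, None)
```

Keeping the decoding in a separate function makes the logic testable offline. The handler itself stays a thin wrapper.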

Common access patterns for building a streaming mesh

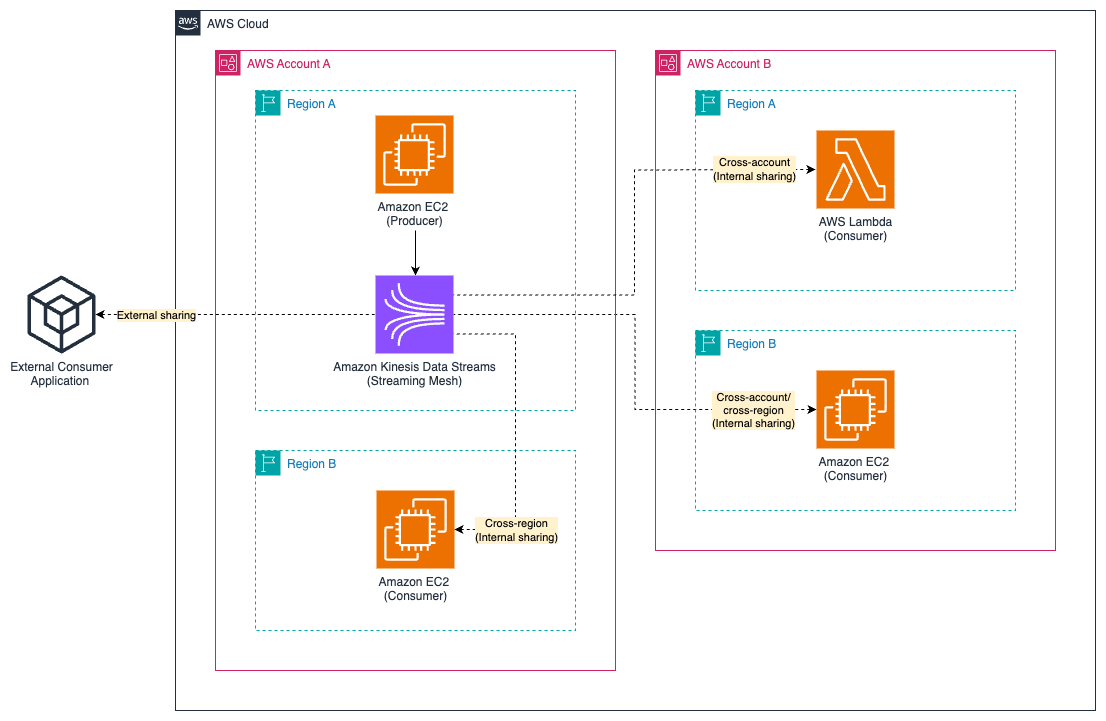

When building a streaming mesh, you should consider data ingestion, governance, access control, storage, schema control, and processing. When implementing the components that make up the streaming mesh, you must properly address the needs of the personas outlined in the previous section: producer, consumer, and governance. A key consideration in streaming mesh architectures is that producers and consumers might also exist entirely outside of AWS. In this post, we examine the key scenarios illustrated in the following diagram. Although the diagram has been simplified for clarity, it highlights the most important scenarios in a streaming mesh architecture:

- External sharing – This involves producers or consumers outside of AWS

- Internal sharing – This involves producers and consumers within AWS, potentially across different AWS accounts or AWS Regions

Building a streaming mesh on a self-managed streaming solution that facilitates internal and external sharing can be challenging. Producers and consumers need appropriate service discovery, network connectivity, security, and access control to interact with the mesh. This might involve implementing complex networking solutions, such as VPN connections with authentication and authorization mechanisms, to support secure connectivity. You must also consider the access patterns of the consumers when building the streaming mesh. The following are common access patterns:

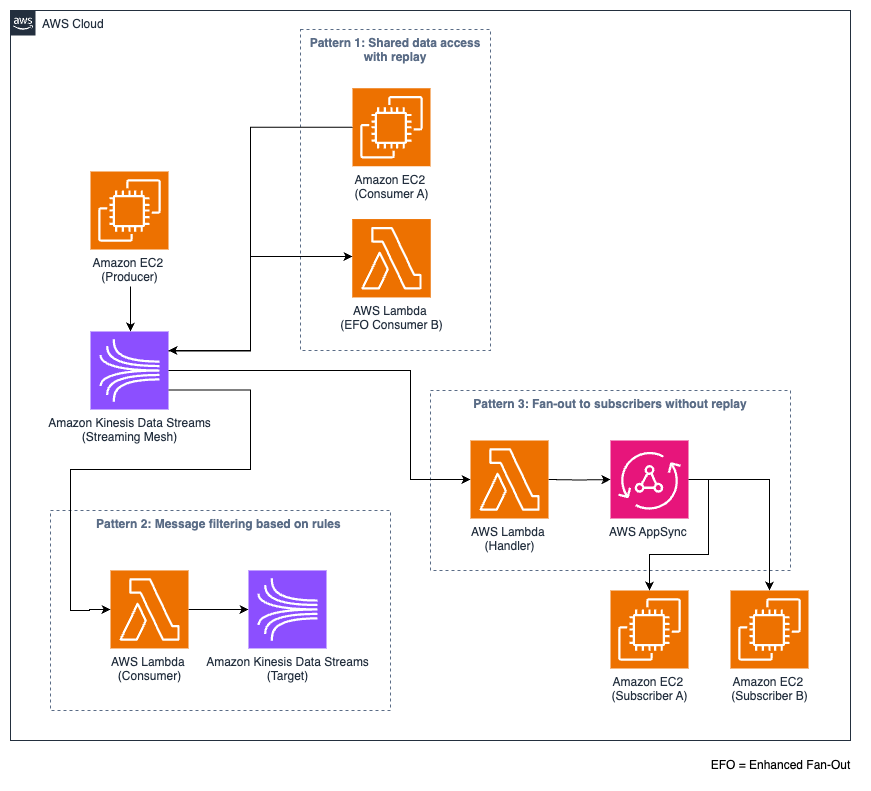

- Shared data access with replay – This pattern allows multiple (standard or enhanced fan-out) consumers to access the same data stream and to replay data as needed. For example, a centralized log stream might serve various teams: security operations for threat detection, IT operations for system troubleshooting, or development teams for debugging. Each team can access and replay the same log data for its specific needs.

- Message filtering based on rules – In this pattern, the data stream is filtered so that consumers read only a subset of it. The filtering is based on predefined rules at the column or row level.

- Fan-out to subscribers without replay – This pattern is designed for real-time distribution of messages to multiple subscribers or consumers. Messages are delivered with at-most-once semantics and can be dropped or deleted after consumption. Subscribers can't replay the events. The data is consumed through services such as AWS AppSync or other GraphQL-based APIs using WebSockets.

The following diagram illustrates these access patterns.

Build a streaming mesh using Kinesis Data Streams

When building a streaming mesh that involves internal and external sharing, you can use Kinesis Data Streams. The service offers a built-in API layer that provides secure and highly available HTTP/S endpoints through the Kinesis API. Producers and consumers can securely write to and read from these endpoints using the AWS SDK, the Amazon Kinesis Producer Library (KPL), or the Kinesis Client Library (KCL). This removes the need for custom REST proxies or additional API infrastructure.

Security is built in through AWS Identity and Access Management (IAM), which supports fine-grained access control that can be centrally managed. You can also use attribute-based access control (ABAC) with stream tags assigned to Kinesis Data Streams resources to manage access to the streaming mesh. ABAC is particularly helpful in complex and growing environments. Because authorization is based on attributes, ABAC evaluates permissions for data producers and consumers dynamically at request time, so access adapts automatically as organizational and data requirements evolve. In addition, Kinesis Data Streams provides built-in rate limiting, request throttling, and burst handling.
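The following sketch builds an identity-based IAM policy in the ABAC style described above. A principal can read only streams whose tag value equals its own principal tag. The tag key `data-domain` and the chosen read actions are assumptions for illustration. Before relying on this, check which Kinesis actions and condition keys support tag-based authorization in the Kinesis Data Streams documentation.

```python
import json


def abac_read_policy(tag_key: str) -> dict:
    """Identity policy: read only streams whose tag matches the caller's principal tag."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadStreamsOfOwnDomain",
                "Effect": "Allow",
                "Action": [
                    "kinesis:DescribeStreamSummary",
                    "kinesis:ListShards",
                    "kinesis:GetShardIterator",
                    "kinesis:GetRecords",
                ],
                "Resource": "arn:aws:kinesis:*:*:stream/*",
                "Condition": {
                    # The policy variable resolves to the caller's own tag value at request time.
                    "StringEquals": {
                        f"aws:ResourceTag/{tag_key}": f"${{aws:PrincipalTag/{tag_key}}}"
                    }
                },
            }
        ],
    }


policy = abac_read_policy("data-domain")  # "data-domain" is a hypothetical tag key
policy_json = json.dumps(policy, indent=2)  # document to attach to the consumer's IAM role
```

A single policy like this can serve every domain. Onboarding a new domain then means tagging its streams and roles, not writing new policies.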

In the following sections, we revisit the common consumer access patterns introduced earlier in the context of a streaming mesh and discuss how to build them using Kinesis Data Streams.

Shared data access with replay

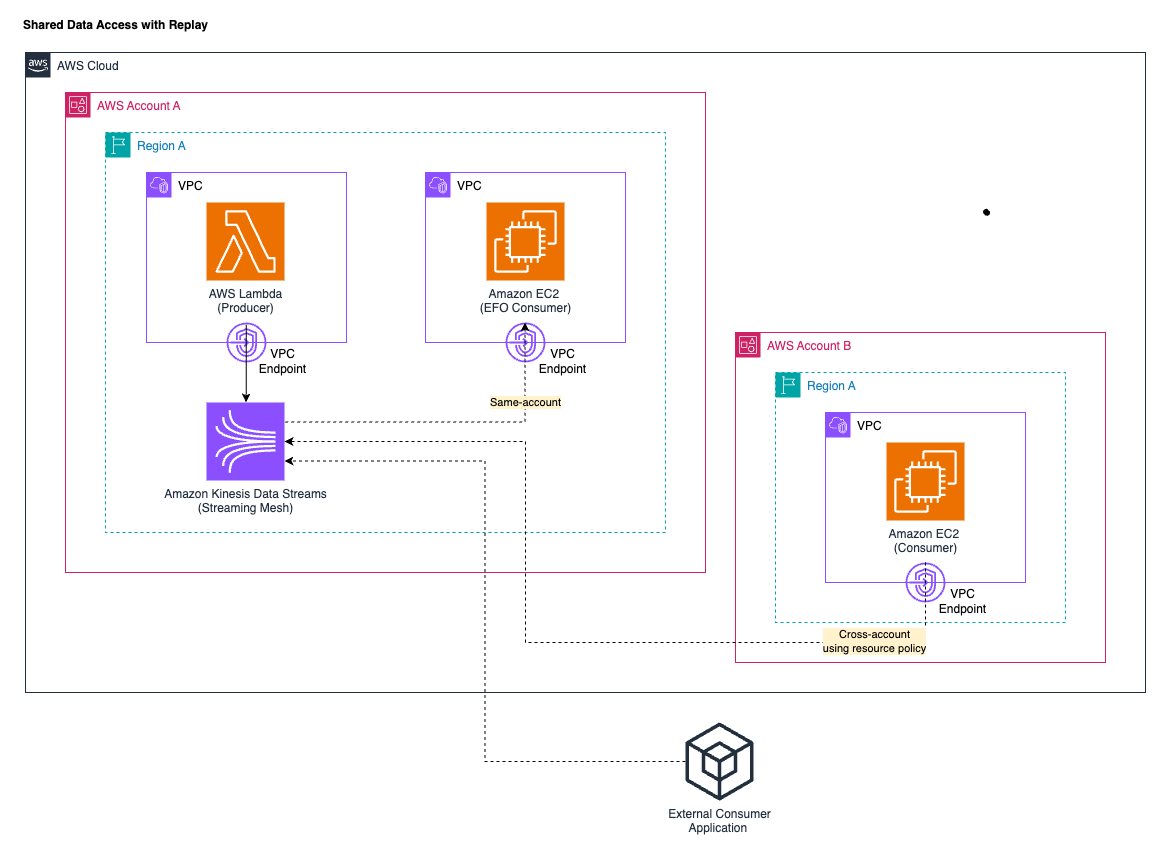

Kinesis Data Streams has built-in support for the shared data access with replay pattern. The following diagram illustrates this access pattern, focusing on same-account, cross-account, and external consumers.

Governance

When you create your data mesh with Kinesis Data Streams, create a data stream with the appropriate number of provisioned shards, or use on-demand mode, based on your throughput needs. Consider on-demand mode for more dynamic workloads. Note that message ordering is guaranteed only at the shard level.
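To size a provisioned stream, a rough calculation against the per-shard quotas gives a starting shard count. The quotas are 1 MB/s or 1,000 records/s of writes, and 2 MB/s of reads shared by standard consumers. The following sketch makes that arithmetic explicit and builds the matching `CreateStream` request. The stream name and traffic figures are hypothetical. The estimate deliberately leaves out headroom, the GetRecords call limit, and uneven partition-key distribution.

```python
import math

# Provisioned-mode quotas per shard.
WRITE_MB_S_PER_SHARD = 1.0
WRITE_RECORDS_S_PER_SHARD = 1000
READ_MB_S_PER_SHARD = 2.0  # shared by all standard (polling) consumers


def estimate_shards(write_mb_s: float, write_records_s: float, standard_consumers: int) -> int:
    """Smallest shard count that satisfies the write and shared-read quotas."""
    by_write_mb = math.ceil(write_mb_s / WRITE_MB_S_PER_SHARD)
    by_write_records = math.ceil(write_records_s / WRITE_RECORDS_S_PER_SHARD)
    # Every standard consumer reads the full ingress, so read demand grows with consumers.
    by_read = math.ceil(write_mb_s * standard_consumers / READ_MB_S_PER_SHARD)
    return max(1, by_write_mb, by_write_records, by_read)


def create_stream_request(name: str, on_demand: bool, shard_count: int = 1) -> dict:
    """Keyword arguments for boto3's kinesis.create_stream()."""
    if on_demand:
        # On-demand streams scale automatically, so no ShardCount is passed.
        return {"StreamName": name, "StreamModeDetails": {"StreamMode": "ON_DEMAND"}}
    return {
        "StreamName": name,
        "ShardCount": shard_count,
        "StreamModeDetails": {"StreamMode": "PROVISIONED"},
    }


# Hypothetical workload: 3.5 MB/s and 2,500 records/s in, read by two standard consumers.
shards = estimate_shards(write_mb_s=3.5, write_records_s=2500, standard_consumers=2)
request = create_stream_request("orders", on_demand=False, shard_count=shards)
# With credentials configured: boto3.client("kinesis").create_stream(**request)
```

In this example, both the byte rate and the shared read demand call for 4 shards, while the record rate alone would need only 3.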

Configure a data retention period of up to 365 days. The default retention period is 24 hours and can be changed using the Kinesis Data Streams API. Data is then retained for the specified period and can be replayed by consumers. Note that long-term data retention beyond the default 24 hours incurs an additional charge.
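Retention can be raised programmatically. The following sketch validates the requested period against the 24-hour default and the 365-day (8,760-hour) maximum. It then calls `increase_stream_retention_period`, a real method on the boto3 Kinesis client. `RecordingClient` is a hypothetical stand-in so the example runs without AWS credentials. In practice, you would pass `boto3.client("kinesis")`.

```python
DEFAULT_RETENTION_HOURS = 24
MAX_RETENTION_HOURS = 365 * 24  # 8,760 hours


def set_retention(kinesis_client, stream_name: str, days: int) -> int:
    """Raise a stream's retention above the default; returns the configured hours."""
    hours = days * 24
    if not DEFAULT_RETENTION_HOURS < hours <= MAX_RETENTION_HOURS:
        raise ValueError(f"days must be between 2 and 365, got {days}")
    # Lowering retention would use decrease_stream_retention_period instead.
    kinesis_client.increase_stream_retention_period(
        StreamName=stream_name, RetentionPeriodHours=hours
    )
    return hours


class RecordingClient:
    """Offline stand-in for boto3.client("kinesis") that records calls."""

    def __init__(self):
        self.calls = []

    def increase_stream_retention_period(self, **kwargs):
        self.calls.append(kwargs)


client = RecordingClient()
hours = set_retention(client, "orders", days=7)  # hypothetical stream name
```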

To enhance network security, you can use interface VPC endpoints. They keep traffic between your Kinesis data streams and the producers and consumers in your virtual private cloud (VPC) private, so it doesn't traverse the internet. To provide cross-account access to your Kinesis data stream, you can use resource-based policies or cross-account IAM roles. A resource-based policy is attached directly to the resource you want to share, such as the Kinesis data stream. A cross-account IAM role in one AWS account delegates specific permissions, such as read access to the Kinesis data stream, to another AWS account. At the time of writing, Kinesis Data Streams doesn't support cross-Region access.
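The following sketch builds a resource-based policy that lets one other account read a stream. The policy is passed as a JSON string to the Kinesis `PutResourcePolicy` API (`put_resource_policy` in boto3). The account IDs and stream ARN are placeholders. The consumer account still needs an identity-based policy that allows its own principals to call these actions.

```python
import json


def cross_account_read_policy(stream_arn: str, consumer_account_id: str) -> dict:
    """Resource-based policy letting another account read a Kinesis data stream."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowCrossAccountRead",
                "Effect": "Allow",
                "Principal": {"AWS": f"arn:aws:iam::{consumer_account_id}:root"},
                "Action": [
                    "kinesis:DescribeStreamSummary",
                    "kinesis:ListShards",
                    "kinesis:GetShardIterator",
                    "kinesis:GetRecords",
                ],
                "Resource": stream_arn,
            }
        ],
    }


# Hypothetical ARN and account ID for illustration only.
STREAM_ARN = "arn:aws:kinesis:us-east-1:111122223333:stream/orders"
policy_document = json.dumps(cross_account_read_policy(STREAM_ARN, "444455556666"))
# With credentials configured:
# boto3.client("kinesis").put_resource_policy(ResourceARN=STREAM_ARN, Policy=policy_document)
```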

Kinesis Data Streams enforces quotas at the shard and stream level to prevent resource exhaustion and maintain consistent performance. Combined with shard-level Amazon CloudWatch metrics, these quotas help you identify hot shards and prevent noisy neighbor scenarios that could affect overall stream performance.
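Hot shards usually come from skewed partition keys. Kinesis Data Streams maps each partition key to a shard through an MD5 hash into a 128-bit key space. The following sketch reproduces that mapping, assuming evenly split hash ranges for simplicity. It shows how one dominant key concentrates traffic on a single shard. The tenant keys are hypothetical. In a live stream, you would confirm the skew with the shard-level CloudWatch metrics.

```python
import hashlib
from collections import Counter

HASH_SPACE = 2 ** 128  # partition keys map into a 128-bit hash key range


def hash_key(partition_key: str) -> int:
    """MD5 of the partition key, interpreted as a 128-bit integer."""
    return int(hashlib.md5(partition_key.encode("utf-8")).hexdigest(), 16)


def shard_for(partition_key: str, shard_count: int) -> int:
    """Index of the owning shard, assuming evenly split hash ranges."""
    return hash_key(partition_key) * shard_count // HASH_SPACE


def shard_load(partition_keys, shard_count: int) -> Counter:
    """Number of records each shard would receive."""
    return Counter(shard_for(key, shard_count) for key in partition_keys)


# Skewed workload: one tenant produces 90% of the records (hypothetical keys).
keys = ["tenant-big"] * 900 + [f"tenant-{i}" for i in range(100)]
load = shard_load(keys, shard_count=4)
hottest_shard, hottest_count = load.most_common(1)[0]
```

Adding shards does not help here, because every record for `tenant-big` hashes to the same value. Spreading that tenant's records requires a more granular partition key, at the cost of per-tenant ordering.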

Producer

You can build producer applications using the AWS SDK or the KPL. The KPL can simplify writing because it provides built-in features such as aggregation, retry mechanisms, per-shard rate limiting, and increased throughput. However, the KPL can add processing delay. Consider integrating Kinesis Data Streams with the AWS Glue Schema Registry to centrally discover, control, and evolve schemas, and to make sure produced data is continuously validated against a registered schema.
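When you use the AWS SDK instead of the KPL, you handle retries yourself. The following sketch sends a batch with `PutRecords` and resends only the entries whose result carries an `ErrorCode`, with exponential backoff. `FlakyClient` is a hypothetical offline stand-in for `boto3.client("kinesis")`. Resent records can land after records written later, so this simple approach does not preserve ordering within a partition key across retries.

```python
import time


def put_with_retries(kinesis_client, stream_name, records, max_attempts=3, backoff_s=0.2):
    """Send records with PutRecords, resending only the entries that failed.

    `records` holds {"Data": bytes, "PartitionKey": str} dicts. PutRecords accepts at
    most 500 entries per call, so split larger lists before calling this function.
    Returns the records that still failed after max_attempts.
    """
    pending = list(records)
    for attempt in range(1, max_attempts + 1):
        if not pending:
            break
        response = kinesis_client.put_records(StreamName=stream_name, Records=pending)
        if response["FailedRecordCount"] == 0:
            return []
        # Results are positional; a failed entry carries an ErrorCode instead of a SequenceNumber.
        pending = [rec for rec, result in zip(pending, response["Records"])
                   if "ErrorCode" in result]
        if attempt < max_attempts:
            time.sleep(backoff_s * 2 ** (attempt - 1))  # exponential backoff
    return pending


class FlakyClient:
    """Offline stand-in: throttles the second record once, then accepts everything."""

    def __init__(self):
        self.batches = []

    def put_records(self, StreamName, Records):
        self.batches.append([r["PartitionKey"] for r in Records])
        if len(self.batches) == 1:
            return {"FailedRecordCount": 1, "Records": [
                {"SequenceNumber": "1", "ShardId": "shardId-000000000000"},
                {"ErrorCode": "ProvisionedThroughputExceededException",
                 "ErrorMessage": "Rate exceeded"},
            ]}
        return {"FailedRecordCount": 0, "Records": [
            {"SequenceNumber": "2", "ShardId": "shardId-000000000000"} for _ in Records]}


client = FlakyClient()
leftover = put_with_retries(
    client, "orders",
    [{"Data": b"a", "PartitionKey": "k1"}, {"Data": b"b", "PartitionKey": "k2"}],
    backoff_s=0,
)
```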

You must make sure that your producers can securely connect to the Kinesis API, whether from inside or outside the AWS Cloud. A producer can live in the same AWS account, in a different account, or entirely outside of AWS. Typically, you want producers as close as possible to the Region where your Kinesis data stream runs, to minimize latency. You can enable cross-account access by attaching a resource-based policy to your Kinesis data stream that grants producers in other AWS accounts permission to write data. At the time of writing, the KPL doesn't support specifying a stream Amazon Resource Name (ARN) when writing to a data stream. You must use the AWS SDK to write to a cross-account data stream (for more details, see Share your data stream with another account). Cross-Region support is also limited if you want to produce data to Kinesis Data Streams from Data Firehose in a different Region using the direct integration.
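Because the KPL can't address a stream by ARN, a cross-account producer can use the SDK's `PutRecord` call with the `StreamARN` parameter. The following sketch assumes the client is created in the stream's Region, with credentials that the stream's resource policy trusts. The ARN, the event, and the `FakeKinesis` stand-in are hypothetical.

```python
import json


def put_cross_account(kinesis_client, stream_arn: str, event: dict, partition_key: str) -> str:
    """Write one JSON event to another account's stream, addressed by ARN."""
    response = kinesis_client.put_record(
        StreamARN=stream_arn,  # the ARN identifies the owning account and Region
        Data=json.dumps(event).encode("utf-8"),
        PartitionKey=partition_key,
    )
    return response["SequenceNumber"]


class FakeKinesis:
    """Offline stand-in for boto3.client("kinesis", region_name=...)."""

    def __init__(self):
        self.last_call = None

    def put_record(self, **kwargs):
        self.last_call = kwargs
        return {"ShardId": "shardId-000000000000", "SequenceNumber": "1001"}


client = FakeKinesis()
seq = put_cross_account(
    client,
    "arn:aws:kinesis:us-east-1:111122223333:stream/orders",  # hypothetical ARN
    {"order_id": "o-1", "total": 42.5},
    partition_key="o-1",
)
```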

To securely access the Kinesis data stream, producers need valid credentials. Credentials should not be stored directly in the client application. Instead, use IAM roles to provide temporary credentials through the AssumeRole API of AWS Security Token Service (AWS STS). For producers outside of AWS, you can also use AWS IAM Roles Anywhere to obtain temporary IAM credentials. Grant only the minimum permissions required to write to the stream. With ABAC support for Kinesis Data Streams, specific API actions can be allowed or denied when the tag on the data stream matches the tag defined on the IAM role principal.
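Here is a minimal sketch of the temporary-credential flow. The producer calls STS `AssumeRole` and builds its Kinesis client from the returned `Credentials`. The role ARN and session name are hypothetical. The fake STS client and factory stand in for `boto3.client("sts")` and `boto3.client` so the example runs offline. Long-running producers must refresh credentials before they expire.

```python
def kinesis_client_from_role(sts_client, client_factory, role_arn: str, session_name: str):
    """Exchange the caller's identity for temporary credentials, then build a Kinesis client."""
    creds = sts_client.assume_role(
        RoleArn=role_arn,
        RoleSessionName=session_name,
        DurationSeconds=3600,  # short-lived; refresh before creds["Expiration"]
    )["Credentials"]
    return client_factory(
        "kinesis",
        aws_access_key_id=creds["AccessKeyId"],
        aws_secret_access_key=creds["SecretAccessKey"],
        aws_session_token=creds["SessionToken"],
    )


class FakeSts:
    """Offline stand-in for boto3.client("sts")."""

    def assume_role(self, RoleArn, RoleSessionName, DurationSeconds):
        return {"Credentials": {"AccessKeyId": "ASIAEXAMPLE",
                                "SecretAccessKey": "secret",
                                "SessionToken": "token",
                                "Expiration": "2030-01-01T00:00:00Z"}}


def fake_factory(service_name, **kwargs):
    """Offline stand-in for boto3.client; returns its arguments."""
    return {"service": service_name, **kwargs}


client = kinesis_client_from_role(
    FakeSts(), fake_factory,
    "arn:aws:iam::111122223333:role/orders-producer",  # hypothetical role
    "orders-producer",
)
# Real usage: kinesis_client_from_role(boto3.client("sts"), boto3.client, role_arn, name)
```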

Consumer

You can build consumers using the KCL or the AWS SDK. The KCL can simplify reading from Kinesis data streams because it automatically handles complex tasks such as checkpointing and load balancing across multiple consumers. This shared access pattern can be implemented with standard consumers as well as enhanced fan-out consumers. In standard consumption mode, read throughput is shared by all consumers reading from the same shard, up to a maximum of 2 MBps per shard. Records are delivered to consumers in a pull model over HTTP using the GetRecords API. With enhanced fan-out, each consumer instead receives dedicated throughput of 2 MBps per shard, and consumers use the SubscribeToShard API, which pushes data over HTTP/2 for lower-latency delivery. For more details, see Develop enhanced fan-out consumers with dedicated throughput.
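The difference between the two consumption modes is easy to quantify. The following sketch uses the per-shard read limits to compute what each consumer can expect from one shard. Standard mode shares 2 MB/s and 5 GetRecords calls per second across consumers; enhanced fan-out gives each consumer 2 MB/s. The sketch assumes consumers share a shard evenly.

```python
SHARD_READ_MB_S = 2.0    # standard mode: shared by every polling consumer of the shard
GETRECORDS_CALLS_S = 5   # standard mode: GetRecords calls per second per shard, shared
EFO_READ_MB_S = 2.0      # enhanced fan-out: per consumer, per shard


def read_mb_s_per_consumer(consumers: int, enhanced_fan_out: bool) -> float:
    """Read throughput each consumer can expect from one shard, assuming an even split."""
    if enhanced_fan_out:
        return EFO_READ_MB_S  # dedicated pipe, independent of the number of consumers
    return SHARD_READ_MB_S / consumers


def min_poll_interval_s(consumers: int) -> float:
    """Shortest per-consumer polling interval that keeps the shard under 5 calls/s."""
    return consumers / GETRECORDS_CALLS_S


# Four consumer applications reading the same shard:
standard = read_mb_s_per_consumer(4, enhanced_fan_out=False)  # 0.5 MB/s each
dedicated = read_mb_s_per_consumer(4, enhanced_fan_out=True)  # 2.0 MB/s each
interval = min_poll_interval_s(4)  # each consumer polls at most every 0.8 s
```

With four standard consumers, each one gets a quarter of the shard's read capacity and must poll less often. Enhanced fan-out keeps both throughput and latency flat as consumers are added.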

Both consumption methods let consumers specify the shard and the sequence number from which to start reading, so data can be replayed from different points within the retention period. Because standard consumers share each shard's read limit, Kinesis Data Streams recommends keeping that limit in mind and using enhanced fan-out where possible. KCL 2.0 and later use enhanced fan-out by default; you must explicitly set the retrieval mode to POLLING to use the standard consumption model. For connectivity and access control, closely follow the recommendations for the producer side.
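Replay in standard mode starts with `GetShardIterator`. The following sketch rewinds one shard to a sequence number (`AT_SEQUENCE_NUMBER`) or to a point in time (`AT_TIMESTAMP`). It then pages through `GetRecords` until it catches up with the tip of the shard. `FakeShard` is a hypothetical offline stand-in for `boto3.client("kinesis")`. The pause between calls helps stay under the shared per-shard call limit.

```python
import time
from datetime import datetime, timezone


def replay_shard(kinesis_client, stream_name, shard_id, start, max_batches=10, pause_s=0.2):
    """Re-read one shard from a sequence number (str) or a point in time (datetime).

    Yields each record's Data bytes and stops once it catches up with the tip of
    the shard or after max_batches GetRecords calls.
    """
    params = {"StreamName": stream_name, "ShardId": shard_id}
    if isinstance(start, datetime):
        params.update(ShardIteratorType="AT_TIMESTAMP", Timestamp=start)
    else:
        params.update(ShardIteratorType="AT_SEQUENCE_NUMBER", StartingSequenceNumber=start)
    iterator = kinesis_client.get_shard_iterator(**params)["ShardIterator"]

    for _ in range(max_batches):
        response = kinesis_client.get_records(ShardIterator=iterator, Limit=1000)
        for record in response["Records"]:
            yield record["Data"]
        iterator = response.get("NextShardIterator")
        # No next iterator means a closed shard; MillisBehindLatest == 0 means caught up.
        if iterator is None or response["MillisBehindLatest"] == 0:
            break
        time.sleep(pause_s)  # standard consumers share 5 GetRecords calls/s per shard


class FakeShard:
    """Offline stand-in for boto3.client("kinesis") serving two pages of records."""

    def __init__(self):
        self.iterator_params = None
        self.pages = [
            {"Records": [{"Data": b"r1"}, {"Data": b"r2"}],
             "NextShardIterator": "it-2", "MillisBehindLatest": 5000},
            {"Records": [{"Data": b"r3"}],
             "NextShardIterator": "it-3", "MillisBehindLatest": 0},
        ]

    def get_shard_iterator(self, **kwargs):
        self.iterator_params = kwargs
        return {"ShardIterator": "it-1"}

    def get_records(self, ShardIterator, Limit):
        return self.pages.pop(0)


shard = FakeShard()
replayed_data = list(replay_shard(shard, "orders", "shardId-000000000000",
                                  datetime(2025, 1, 1, tzinfo=timezone.utc), pause_s=0))
```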

Message filtering based on rules

Although Kinesis Data Streams doesn't provide built-in filtering, you can implement this pattern by combining it with Lambda or Managed Service for Apache Flink. In this post, we focus on using Lambda to filter messages.

Governance and producer

The governance and producer personas should follow the best practices already outlined for the shared data access with replay pattern in the previous section.

Consumer

Create a Lambda function that consumes from the stream (with shared or dedicated throughput), and create a Lambda event source mapping with your filter criteria. At the time of writing, Lambda supports event source mappings for Amazon DynamoDB, Kinesis Data Streams, Amazon MQ, Amazon MSK or self-managed Apache Kafka, and Amazon Simple Queue Service (Amazon SQS). For Lambda to properly filter incoming messages from Kinesis sources, both the ingested data records and your filter criteria for the data field must be valid JSON.
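The following sketch builds the request for `CreateEventSourceMapping` (`create_event_source_mapping` in boto3) with a filter on the record's `data` field. Only records whose JSON payload has `customer_tier` equal to `premium` invoke the function. The function name, ARN, and field name are hypothetical. The small `matches` helper is a simplified local approximation of exact-match filtering, included only to show the intent; Lambda's own filter engine supports more operators.

```python
import json


def filtered_mapping_request(function_name: str, source_arn: str, pattern: dict) -> dict:
    """Keyword arguments for Lambda CreateEventSourceMapping with a data filter."""
    return {
        "FunctionName": function_name,
        "EventSourceArn": source_arn,  # stream ARN, or a consumer ARN for enhanced fan-out
        "StartingPosition": "LATEST",
        "BatchSize": 100,
        "FilterCriteria": {"Filters": [{"Pattern": json.dumps(pattern)}]},
    }


def matches(pattern: dict, event: dict) -> bool:
    """Simplified exact-match check; Lambda's real engine supports more operators."""
    for key, expected in pattern.items():
        if key not in event:
            return False
        if isinstance(expected, dict):
            if not isinstance(event[key], dict) or not matches(expected, event[key]):
                return False
        elif event[key] not in expected:
            return False
    return True


# "data" addresses the JSON payload of each Kinesis record.
premium_only = {"data": {"customer_tier": ["premium"]}}
request = filtered_mapping_request(
    "premium-order-handler",                                   # hypothetical function
    "arn:aws:kinesis:us-east-1:111122223333:stream/orders",  # hypothetical ARN
    premium_only,
)
# With credentials configured: boto3.client("lambda").create_event_source_mapping(**request)
```

Filtering in the event source mapping means that records which don't match never invoke the function, so you don't pay for invocations that would only discard data.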

When using enhanced fan-out, you configure a Kinesis dedicated-throughput consumer as the trigger for your Lambda function. Lambda then filters the (aggregated) records and passes on only those that meet your filter criteria.
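The following sketch wires up that trigger. It registers an enhanced fan-out consumer with `register_stream_consumer` and waits until `describe_stream_consumer` reports it as `ACTIVE`. It then passes the consumer ARN, not the stream ARN, as the `EventSourceArn` of the event source mapping. The consumer name, function name, and fake clients are hypothetical stand-ins for `boto3.client("kinesis")` and `boto3.client("lambda")`.

```python
import json
import time


def efo_trigger(kinesis_client, lambda_client, stream_arn, consumer_name,
                function_name, filters, poll_s=2.0):
    """Register an enhanced fan-out consumer and use its ARN as the Lambda trigger."""
    consumer_arn = kinesis_client.register_stream_consumer(
        StreamARN=stream_arn, ConsumerName=consumer_name
    )["Consumer"]["ConsumerARN"]
    # Registration is asynchronous; wait until the consumer is ACTIVE.
    while (kinesis_client.describe_stream_consumer(ConsumerARN=consumer_arn)
           ["ConsumerDescription"]["ConsumerStatus"] != "ACTIVE"):
        time.sleep(poll_s)
    return lambda_client.create_event_source_mapping(
        FunctionName=function_name,
        EventSourceArn=consumer_arn,  # consumer ARN instead of the stream ARN
        StartingPosition="LATEST",
        FilterCriteria={"Filters": filters},
    )


class FakeKinesis:
    def register_stream_consumer(self, StreamARN, ConsumerName):
        return {"Consumer": {"ConsumerName": ConsumerName,
                             "ConsumerARN": f"{StreamARN}/consumer/{ConsumerName}:1",
                             "ConsumerStatus": "CREATING"}}

    def describe_stream_consumer(self, ConsumerARN):
        return {"ConsumerDescription": {"ConsumerARN": ConsumerARN,
                                        "ConsumerStatus": "ACTIVE"}}


class FakeLambda:
    def create_event_source_mapping(self, **kwargs):
        return kwargs  # echo the request for inspection


mapping = efo_trigger(
    FakeKinesis(), FakeLambda(),
    "arn:aws:kinesis:us-east-1:111122223333:stream/orders",  # hypothetical ARN
    "premium-efo", "premium-order-handler",
    [{"Pattern": json.dumps({"data": {"customer_tier": ["premium"]}})}],
)
```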

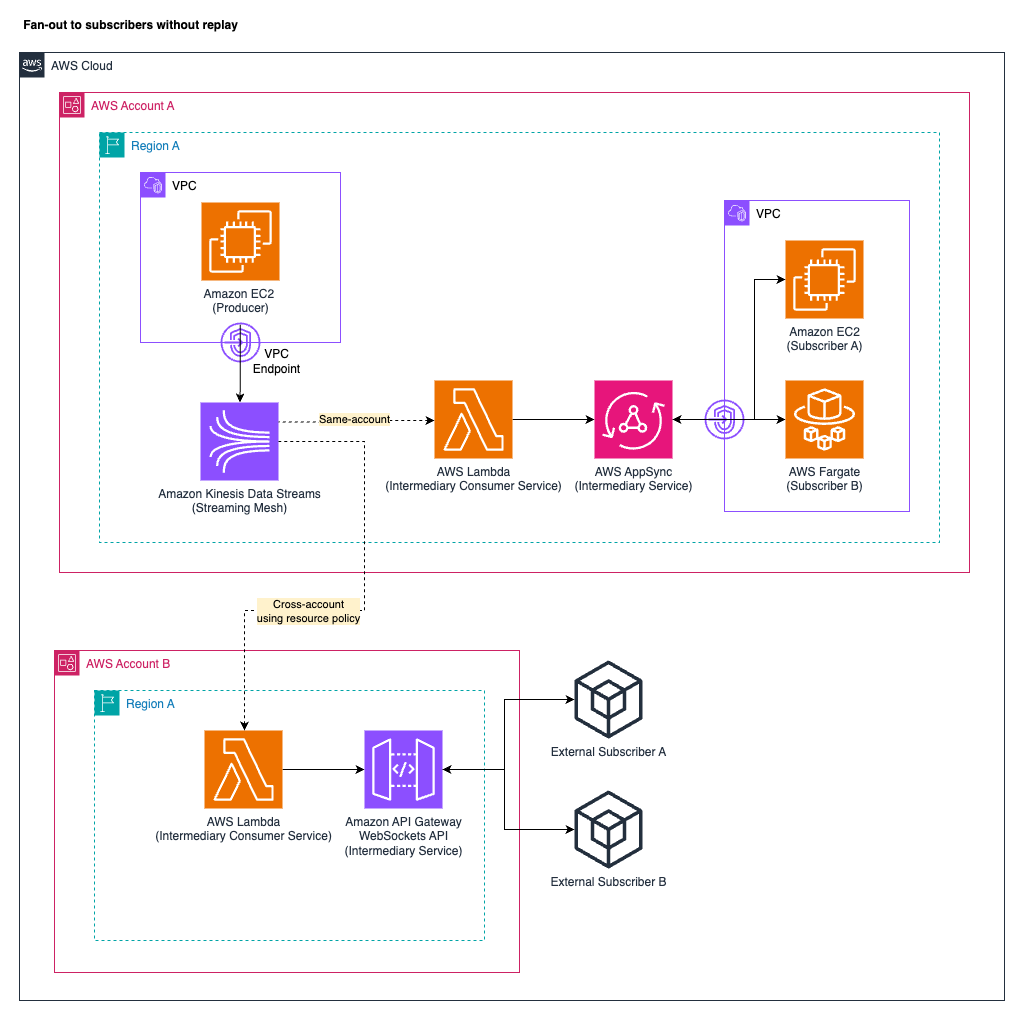

Fan-out to subscribers without replay

When distributing streaming data to multiple subscribers without the ability to replay, Kinesis Data Streams supports an intermediary pattern that is particularly effective for web and mobile clients that need real-time updates. An intermediary service bridges Kinesis Data Streams and the subscribers. It processes records from the data stream (using a standard or enhanced fan-out consumer) and delivers them to the subscribers in real time. Subscribers don't interact directly with the Kinesis API.

A common approach uses GraphQL gateways such as AWS AppSync, WebSocket API services such as Amazon API Gateway WebSocket APIs, or other suitable services that make the data available to subscribers. The data is distributed to subscribers over network connections such as WebSockets.

The following diagram illustrates the fan-out to subscribers without replay access pattern. For illustration, it shows the managed AWS services AppSync and API Gateway as intermediary consumer options.

Governance and producer

The governance and producer personas should follow the best practices already outlined for the shared data access with replay pattern.

Consumer

This consumption model works differently from traditional Kinesis consumption patterns. Subscribers connect to the intermediary service over network connections such as WebSockets and receive data records in real time. They can't set offsets, replay historical data, or control data positioning. Delivery follows at-most-once semantics: messages can be lost if subscribers disconnect, because consumption is ephemeral and nothing is persisted for individual subscribers. The intermediary service must be designed for high performance, low latency, and resilient message distribution. Implementations range from managed services such as AppSync or API Gateway to custom-built solutions such as WebSocket servers or GraphQL subscription services. In addition, this pattern requires an intermediary consumer, such as a Lambda function, that reads data from the Kinesis data stream and immediately writes it to the intermediary service.
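The following sketch shows the core of an intermediary consumer for this pattern. It is a Lambda-style function that decodes each Kinesis record, pushes it to every connected subscriber, and drops sends that fail. The `post` callable is an assumption. With API Gateway WebSocket APIs, it could wrap the `apigatewaymanagementapi` client's `post_to_connection(ConnectionId=..., Data=...)`. The connection IDs would come from a store of your choosing, for example one populated by the `$connect` route.

```python
import base64
from typing import Callable, Iterable


def fan_out(event: dict, connection_ids: Iterable[str],
            post: Callable[[str, bytes], None]) -> dict:
    """Push every Kinesis record in the batch to every connected subscriber, once.

    Delivery is at-most-once: a failed send is counted and dropped, never retried,
    and nothing is stored for subscribers that are offline.
    """
    delivered, dropped = 0, 0
    connections = list(connection_ids)
    for record in event.get("Records", []):
        payload = base64.b64decode(record["kinesis"]["data"])
        for connection_id in connections:
            try:
                post(connection_id, payload)
                delivered += 1
            except Exception:  # e.g. the subscriber disconnected (GoneException in API Gateway)
                dropped += 1
    return {"delivered": delivered, "dropped": dropped}


# Offline usage with a fake sender (hypothetical connection IDs).
sent = []


def fake_post(connection_id: str, data: bytes) -> None:
    if connection_id == "gone-conn":
        raise ConnectionError("subscriber disconnected")
    sent.append((connection_id, data))


sample = {"Records": [{"kinesis": {"data": base64.b64encode(b'{"price": 101}').decode()}}]}
stats = fan_out(sample, ["conn-a", "gone-conn", "conn-b"], fake_post)
```

Swallowing send failures is what makes delivery at-most-once. If the function raised instead, the event source mapping would retry the batch, and subscribers that had already received the messages would get duplicates.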

Conclusion

This post highlighted the benefits of a streaming mesh. We showed why Kinesis Data Streams is particularly well suited to building a secure and scalable streaming mesh architecture for both internal and external sharing. The reasons include the service's built-in API layer, comprehensive security through IAM, flexible networking options, and versatile consumption models. The streaming mesh patterns we covered (shared data access with replay, message filtering, and fan-out to subscribers) show how Kinesis Data Streams supports producers, consumers, and governance teams across internal and external boundaries.

For more information on getting started with Kinesis Data Streams, refer to Getting started with Amazon Kinesis Data Streams. For other posts on Kinesis Data Streams, browse the AWS Big Data Blog.

About the authors