In case you’ve ever shopped on Amazon, you’ve used Your Orders. This function maintains your full order historical past courting again to 1995, so you possibly can observe and handle each buy you’ve made. The order historical past search function helps you to discover your previous purchases by getting into key phrases within the search bar. Past simply discovering gadgets, it gives a simple technique to repurchase the identical or related gadgets, saving you effort and time.

Varied options throughout Amazon’s procuring expertise, comparable to Rufus and Alexa, use order historical past search that will help you discover your previous purchases. Subsequently, it’s essential that order historical past search can find your previous bought gadgets as precisely and rapidly as doable.

On this put up, we present you the way the Your Orders workforce improved order historical past search by introducing semantic search capabilities on prime of our present lexical search system, utilizing Amazon OpenSearch Service and Amazon SageMaker.

Limitations of lexical search

Order historical past search makes use of lexical matching to search out gadgets from your complete order historical past of a buyer that match no less than one phrase of the search key phrases. For instance, if a buyer searches for “orange juice,” the system retrieves all orange juice gadgets in addition to recent oranges and different fruit juices the client had beforehand ordered. Though lexical matching can present a excessive recall of things with phrases matching the search key phrases exactly, it doesn’t work properly for associated or generic search key phrases, like “well being drinks” on this instance.

For the reason that launch of Rufus, Amazon’s AI-enabled procuring assistant, a rising variety of prospects are experiencing a streamlined and richer procuring journey, together with looking for their earlier purchases with Rufus. Prospects can now ask “Present me wholesome drinks” with out worrying about utilizing prolonged, extra exact phrases like “kombucha”, “inexperienced tea”, and “protein shakes”. This makes the search expertise extra conversational and intent-based, presenting a chance to make merchandise discovery extra intuitive. For Rufus to reply order historical past searches with the identical intuitive expertise comparable to “Present me the wholesome drinks I purchased final yr”, the underlying order historical past information retailer (“Your Orders”) wants semantic search functionality to know the underlying semantics of search key phrases past the traditional lexical matching.

Challenges implementing semantic search

Implementing semantic search at our scale introduced a number of technical challenges:

- Scale – We would have liked to allow semantic search throughout billions of data akin to prospects’ order historical past globally.

- Zero downtime – We would have liked to maintain the system 100% obtainable whereas making adjustments on the backend to introduce semantic search.

- Stopping search high quality degradation – Semantic search is meant to enhance the standard of search outcomes. Nonetheless, in some instances, it will possibly cut back search high quality. For instance, if a buyer remembers their merchandise identify precisely and needs to search out solely gadgets matching that identify, surfacing related gadgets along with the precisely matching gadgets will enhance crowding in outcomes and make it more durable to search out the related merchandise. Equally, semantic search won’t work for instances the place the client intends to look by identifier values, like order ID, which lack an inherent semantic that means. For these situations, we use lexical search solely.

Answer overview

Semantic search is powered by giant language fashions (LLMs), that are principally skilled on human languages. These fashions could be tailored to take a chunk of textual content in any language they had been skilled in and emit an embedding vector of a set size, no matter the enter textual content size. By design, embedding vectors seize the semantic that means of enter textual content such that two semantically related textual content strings have excessive cosine similarity computed on their respective embedding vectors. For semantic search on order historical past, the enter textual content topic to embedding era and similarity computation are the client search phrases and the product textual content of bought gadgets.

We divide our answer into two elements:

- Bettering system scalability and resiliency for dealing with requests at scale – Earlier than implementing semantic search, we wanted to make sure our infrastructure may deal with the elevated computational load, main us to undertake a cell-based structure. This step shouldn’t be wanted for each use case, however programs with very excessive scale by way of request or information quantity can profit so much from its use earlier than implementing a resource-intensive use case like semantic search.

- Implementing semantic search – We started by evaluating the obtainable embedding fashions, utilizing the offline analysis capabilities of Amazon Bedrock to check totally different fashions. After we chosen our mannequin, we may set up the infrastructure for producing embedding vectors.

Bettering system scalability and resiliency

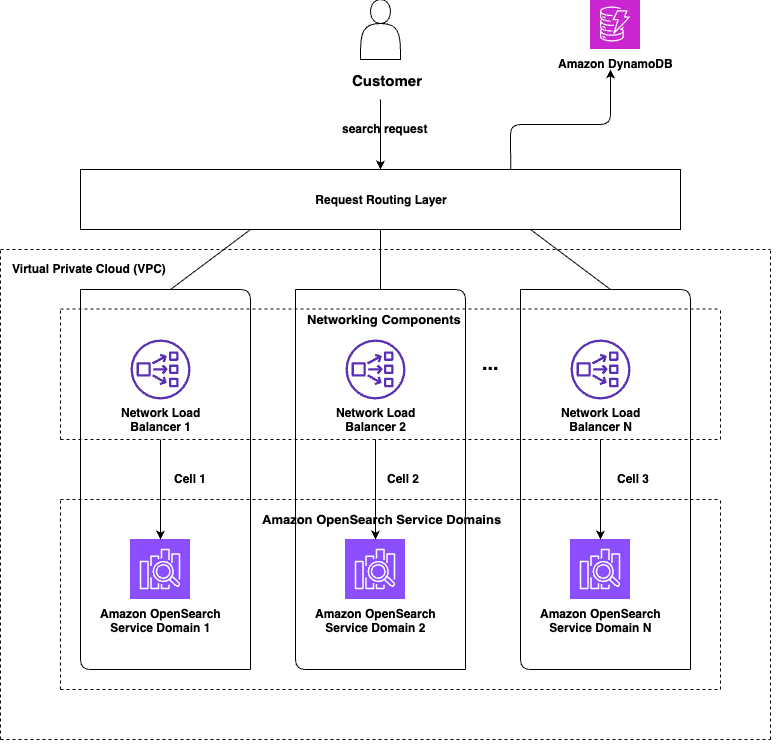

We used the cell-based structure design sample for bettering our scalability and resiliency. A cell-based design entails partitioning the system into equivalent, smaller, self-contained chunks, or cells, which deal with solely part of the general site visitors acquired by the system. The next diagram reveals a high-level illustration of a cell-based design for order historical past search.

Every cell serves an outlined subset of our prospects. Cells don’t want to speak with each other to serve a buyer request. Every buyer is assigned to a cell and every request from that buyer is routed to that cell. The OpenSearch Service area in every cell holds information just for the subset prospects that it’s imagined to serve. The variety of cells (N) and distribution of knowledge amongst these cells depends upon the enterprise use case, however the purpose is to realize as even a distribution of knowledge and site visitors as doable.

The routing logic could be saved as easy or as subtle because the use case requires it to be. The cell project values can both be computed at runtime for every request, or they are often computed one time and written to a cache or persistent information retailer like Amazon DynamoDB, from the place cell project values could be fetched for subsequent requests. For order historical past search, the logic was easy and fast sufficient to be executed at runtime for every request. Trying up cell project from a persistent information retailer is particularly helpful for instances the place there’s a threat of some cells turning into “heavier” than others over time. In such instances, it turns into simpler to redistribute the heavy cell’s information by merely overriding cell project values for particular keys within the information retailer, as a substitute of getting to vary the partitioning logic instantly, which could have an effect on information distribution throughout all of the cells.

Because the system’s load grows, the variety of cells within the system could be elevated to deal with the extra site visitors. Even with out rising the variety of cells within the system, we are able to redistribute present information among the many present N cells by reassigning some keys from a number of closely populated cells to totally different frivolously populated cells to unfold out the load extra evenly throughout all of the cells and make extra environment friendly use of the infrastructure.

A cell-based structure additionally helps make the system extra resilient. For instance, if we lose one cell, our capability is diminished solely by 1/N, as a substitute of 100%. This association can be improved to cut back the capability loss even additional by assigning partitioning keys to 2 or extra cells such that they get written to 2 or extra cells. In such instances, lack of a single cell doesn’t lead to information loss.

Implementing semantic search

Implementing semantic seek for our order historical past search required a number of key choices and technical steps. We started by evaluating the obtainable embedding fashions, utilizing the offline analysis capabilities of Amazon Bedrock to check totally different fashions in opposition to our particular enterprise area necessities. This analysis course of helped us establish which mannequin would ship the very best efficiency for our use case. After we chosen our mannequin, we wanted to ascertain the infrastructure for producing embedding vectors. We containerized our embedding mannequin and registered it in Amazon Elastic Container Registry (Amazon ECR), then deployed it utilizing SageMaker inference endpoints to deal with the precise vector computation at scale.

For the search infrastructure itself, we selected OpenSearch Service to implement our semantic search capabilities. OpenSearch Service offered each the vector storage we wanted and the search algorithms required to ship related outcomes to our customers.

Considered one of our largest challenges was updating our historic information to assist semantic search on present orders. We constructed an information processing pipeline utilizing AWS Step Capabilities to orchestrate the workflow and AWS Lambda capabilities to deal with the precise vector era for our legacy information, so we may present semantic seek for all of the data we needed to.

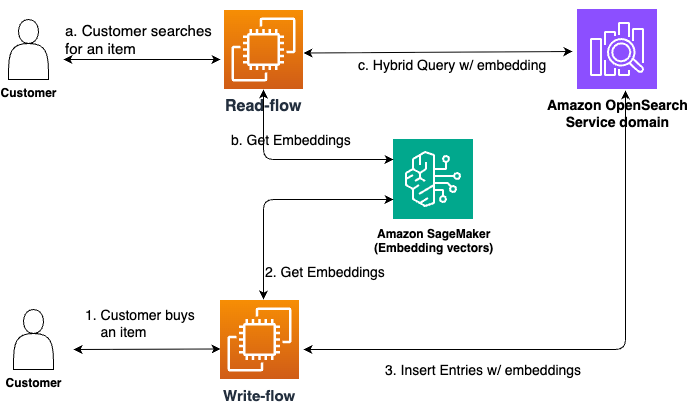

The next diagram illustrates the high-level structure.

Mannequin analysis and choice

Order historical past search makes use of an embedding mannequin skilled on Amazon-specific information. Area-specific coaching is essential as a result of the generated embedding vectors should work properly for the enterprise context to return high quality outcomes.

We used an LLM-as-a-judge methodology with Anthropic’s Claude on Amazon Bedrock to judge candidate fashions. Anthropic’s Claude acquired prompts containing anonymized merchandise textual content and search phrases from buyer order historical past, then filtered and ranked gadgets by relevance. These outcomes served as floor fact for comparability.

We evaluated fashions utilizing normal rating metrics:

- Normalized Discounted Cumulative Acquire (NDCG) – Measures rating high quality in opposition to preferrred order

- Imply Reciprocal Rank (MRR) – Considers place of first related merchandise

- Precision – Charges accuracy of retrieved outcomes

- Recall – Charges capacity to retrieve all related gadgets

This course of helped us decide the very best mannequin.

Retrieval technique: Buyer-scoped complete search

Order historical past search has two key necessities:

- Search solely by means of the requesting buyer’s order historical past – We don’t need gadgets from one buyer’s order historical past exhibiting up in search outcomes for an additional buyer

- Search all of that buyer’s historical past – We don’t wish to miss exhibiting an merchandise that will have been related for the client’s search phrase simply because the search algorithm missed evaluating it for some cause

Our method entails utilizing OpenSearch Service to retrieve all gadgets for the client who issued the search question, calculating relevance scores for every of them in opposition to the search phrase, sorting by rating, and returning prime Okay outcomes. This gives complete outcomes protection for every buyer.

Vector storage with OpenSearch Service

We used two OpenSearch Service options for environment friendly vector storage and search:

- knn_vector datatype – Constructed-in assist for storing embedding vectors. Current domains can add this subject sort with out reindexing, enabling actual kNN search throughout all data. We didn’t want approximate kNN as a result of the variety of data for many prospects was sufficiently small for actual kNN to scale.

- Scripted scoring – Painless scripts compute vector similarity server-side, decreasing consumer complexity and sustaining low latency.

Hybrid search

Hybrid search refers to combining the outcomes of lexical and semantic search to profit from the strengths of every. The hybrid question capabilities of OpenSearch Service simplify implementing hybrid search by letting shoppers specify each sorts of queries in a single request. OpenSearch Service runs each queries in parallel, merges their outcomes, normalizes the relevance scores of the sub-queries, and kinds outcomes by the offered kind order (relevance rating by default) earlier than returning them to shoppers.

This provides shoppers the very best of each sorts of searches. For instance, there are particular situations the place the search phrase doesn’t make a lot sense semantically, like when prospects search by their orderId values. Semantic search shouldn’t be designed for such instances; these are finest served utilizing key phrase matching.

The hybrid search performance helped save implementation effort and potential latency enhance for order historical past search.

Updating historic information

After the infrastructure has been arrange, newly ingested data are endured with the related embedding vectors and assist semantic search on these data. Nonetheless, when prospects search, they usually seek for merchandise they’d bought earlier. Subsequently, the system may not assist enhance buyer expertise a lot until the older data are up to date to incorporate the related embeddings. The method to populate this information depends upon the size of the issue at hand.

Releasing the change to attenuate potential buyer impression

Our closing step was to launch the change to shoppers in a fashion such that the impression of any potential issues is as small as doable. There are a number of methods to do this, together with:

- Implementing semantic search in a fashion such that any transient points within the semantic search circulation make the logic fall again to lexical-only search, as a substitute of failing the request fully. Even when semantic search doesn’t execute, the system ought to nonetheless be capable of return outcomes of lexical search to the consumer, as a substitute of empty outcomes.

- Gating the change such that the default conduct stays lexical-only search and shoppers who want the semantic search function should cross an extra flag within the request, for instance, which executes the semantic or hybrid circulation just for these requests.

- Conserving the brand new circulation behind a function flag throughout the preliminary interval such that it could possibly be turned off fully if some essential downside is detected.

Examples of improved buyer expertise

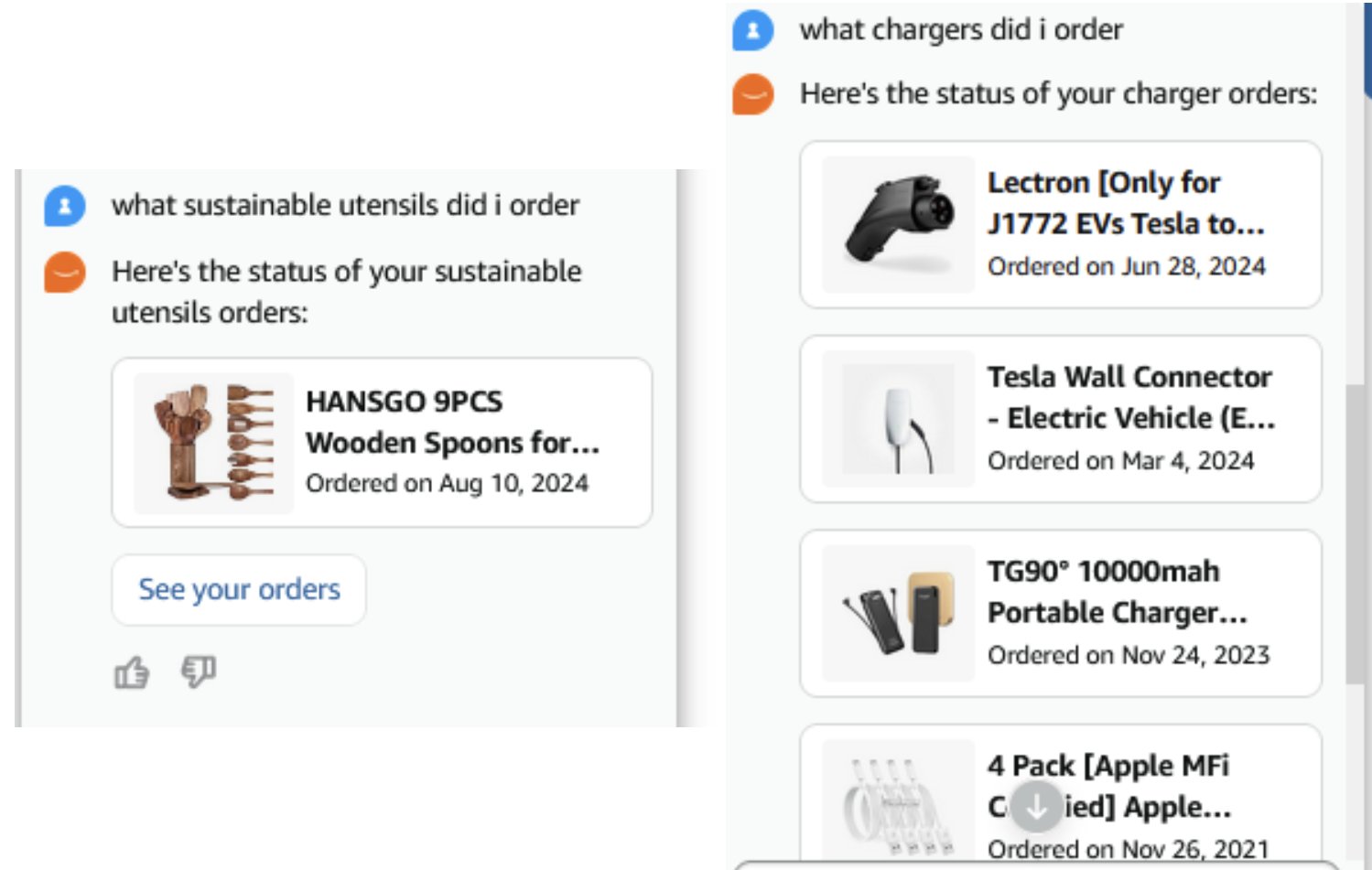

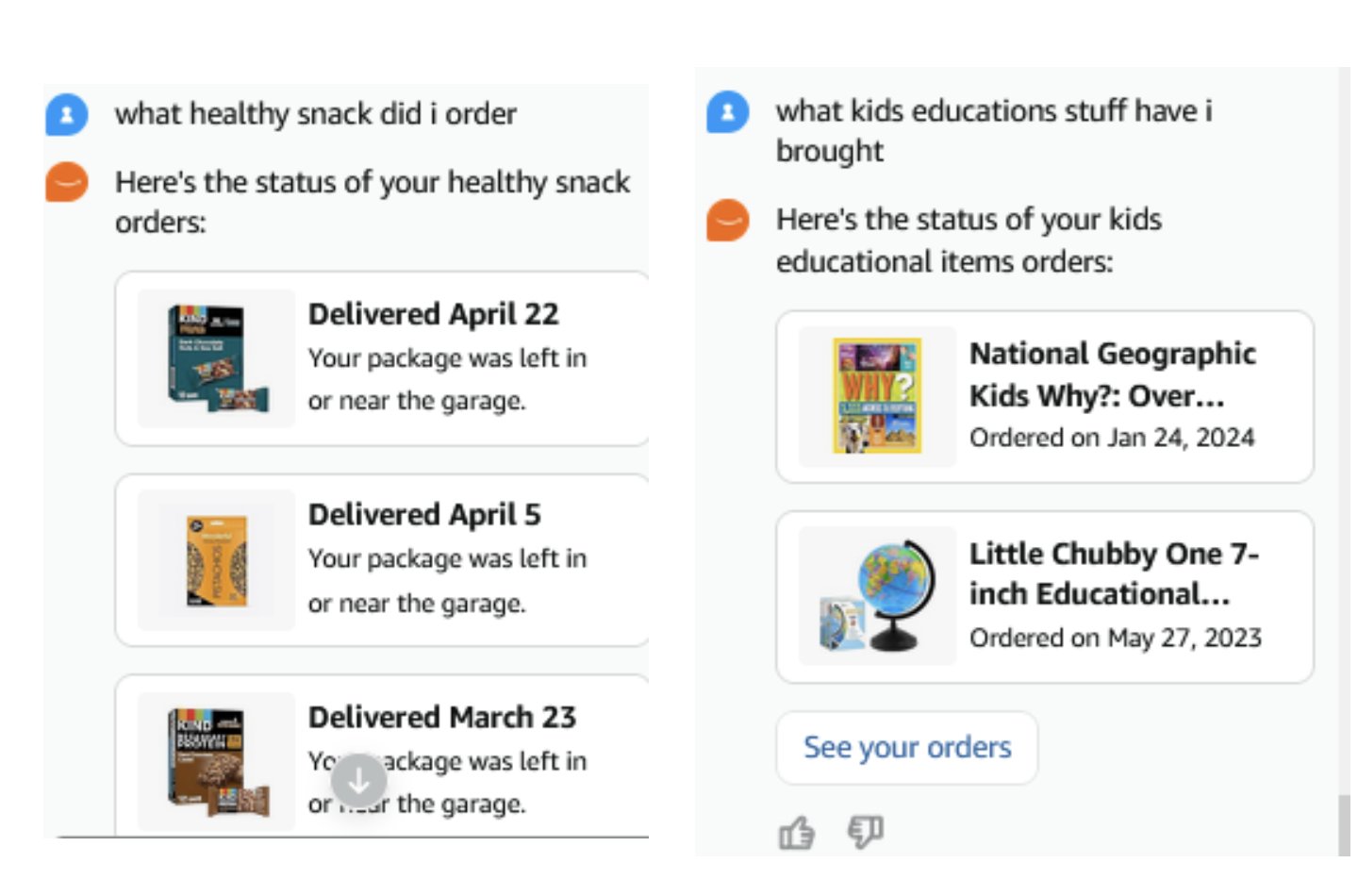

The next are some examples of buyer interactions with Rufus that required Rufus to question the respective buyer’s order historical past to reply their query and provides them the required items of data.

The next screenshots present how semantic search picks up wood spoons for a “sustainable utensils” question and totally different sorts of chargers regardless of not having the key phrase “charger” within the title description, within the case of the wall connector.

The next screenshots present how semantic search picks up related outcomes though the title description doesn’t embrace the queried key phrases.

The semantic search function of order historical past search helped Rufus fetch them and present to the purchasers. Earlier than semantic search, Rufus wasn’t in a position to present any outcomes to prospects for such queries.

Enterprise impression

Our answer resulted within the following key enterprise impacts:

- Buyer expertise enhancements – The answer achieved 10% enchancment in question recall, rising the share of searches that return related outcomes. It additionally diminished customer support contacts for points associated to finding previous orders.

- Accomplice integration success – The answer strengthened pure language processing capabilities for Alexa and Rufus, enhancing their capacity to interpret order historical past queries. It additionally diminished the necessity for reranking and postprocessing by associate groups. We improved question success price by 20%, that means extra buyer searches now return no less than one related merchandise. We additionally noticed enhanced end result protection by 48%, with semantic search persistently surfacing further related matches that lexical search would have missed.

Conclusion

On this put up, we confirmed you the way we advanced Amazon order historical past search to assist semantic search capabilities. This transition concerned utilizing cutting-edge AI know-how whereas working inside present infrastructure limitations to develop options that prevented disruption and maintained SLAs throughout the function improve. The implementation additionally concerned backfilling, the place billions of paperwork had been processed at charges a number of instances larger than regular ingestion to compute embedding vectors for beforehand bought gadgets. This operation required cautious engineering and took benefit of the resilience OpenSearch Service provides even underneath excessive load.

Past the rapid implementation, this basis allows continued innovation in search know-how. The embedding vectors framework can incorporate improved fashions as they turn into obtainable, and the structure helps growth into new capabilities comparable to personalization and multi-modal search.

You will get began with actual k-NN search at the moment following the directions in Actual k-NN search. In case you’re on the lookout for a managed answer in your OpenSearch cluster, try Amazon OpenSearch Service.

In regards to the authors