Guaranteeing the reliability, security, and efficiency of AI brokers is important. That’s the place agent observability is available in.

This weblog put up is the third out of a six-part weblog sequence known as Agent Manufacturing unit which can share greatest practices, design patterns, and instruments to assist information you thru adopting and constructing agentic AI.

Seeing is realizing—the facility of agent observability

As agentic AI turns into extra central to enterprise workflows, making certain reliability, security, and efficiency is important. That’s the place agent observability is available in. Agent observability empowers groups to:

- Detect and resolve points early in growth.

- Confirm that brokers uphold requirements of high quality, security, and compliance.

- Optimize efficiency and person expertise in manufacturing.

- Preserve belief and accountability in AI methods.

With the rise of complicated, multi-agent and multi-modal methods, observability is crucial for delivering AI that’s not solely efficient, but in addition clear, protected, and aligned with organizational values. Observability empowers groups to construct with confidence and scale responsibly by offering visibility into how brokers behave, make choices, and reply to real-world eventualities throughout their lifecycle.

What’s agent observability?

Agent observability is the apply of attaining deep, actionable visibility into the interior workings, choices, and outcomes of AI brokers all through their lifecycle—from growth and testing to deployment and ongoing operation. Key facets of agent observability embrace:

- Steady monitoring: Monitoring agent actions, choices, and interactions in actual time to floor anomalies, sudden behaviors, or efficiency drift.

- Tracing: Capturing detailed execution flows, together with how brokers cause by way of duties, choose instruments, and collaborate with different brokers or providers. This helps reply not simply “what occurred,” however “why and the way did it occur?”

- Logging: Information agent choices, device calls, and inner state adjustments to help debugging and conduct evaluation in agentic AI workflows.

- Analysis: Systematically assessing agent outputs for high quality, security, compliance, and alignment with person intent—utilizing each automated and human-in-the-loop strategies.

- Governance: Imposing insurance policies and requirements to make sure brokers function ethically, safely, and in accordance with organizational and regulatory necessities.

Conventional observability vs agent observability

Conventional observability depends on three foundational pillars: metrics, logs, and traces. These present visibility into system efficiency, assist diagnose failures, and help root-cause evaluation. They’re well-suited for typical software program methods the place the main target is on infrastructure well being, latency, and throughput.

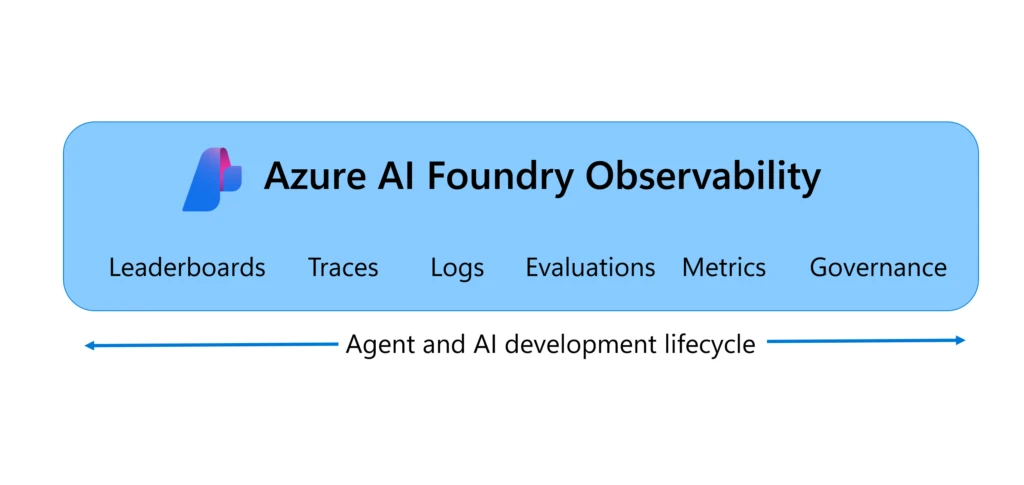

Nonetheless, AI brokers are non-deterministic and introduce new dimensions—autonomy, reasoning, and dynamic resolution making—that require a extra superior observability framework. Agent observability builds on conventional strategies and provides two important parts: evaluations and governance. Evaluations assist groups assess how properly brokers resolve person intent, adhere to duties, and use instruments successfully. Agent governance can guarantee brokers function safely, ethically, and in compliance with organizational requirements.

This expanded method allows deeper visibility into agent conduct—not simply what brokers do, however why and the way they do it. It helps steady monitoring throughout the agent lifecycle, from growth to manufacturing, and is crucial for constructing reliable, high-performing AI methods at scale.

Azure AI Foundry Observability gives end-to-end agent observability

Azure AI Foundry Observability is a unified resolution for evaluating, monitoring, tracing, and governing the standard, efficiency, and security of your AI methods finish to finish in Azure AI Foundry—all constructed into your AI growth loop. From mannequin choice to real-time debugging, Foundry Observability capabilities empower groups to ship production-grade AI with confidence and pace. It’s observability, reimagined for the enterprise AI period.

With built-in capabilities just like the Brokers Playground evaluations, Azure AI Crimson Teaming Agent, and Azure Monitor integration, Foundry Observability brings analysis and security into each step of the agent lifecycle. Groups can hint every agent circulate with full execution context, simulate adversarial eventualities, and monitor dwell site visitors with customizable dashboards. Seamless CI/CD integration allows steady analysis on each commit and governance help with Microsoft Purview, Credo AI, and Saidot integration helps allow alignment with regulatory frameworks just like the EU AI Act—making it simpler to construct accountable, production-grade AI at scale.

5 greatest practices for agent observability

1. Choose the precise mannequin utilizing benchmark pushed leaderboards

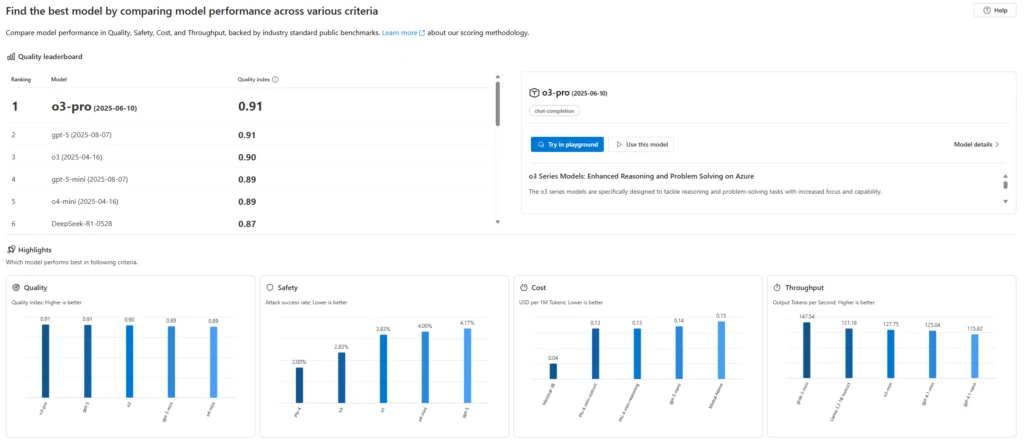

Each agent wants a mannequin and selecting the best mannequin is foundational for agent success. Whereas planning your AI agent, it is advisable resolve which mannequin can be the very best in your use case when it comes to security, high quality, and price.

You may decide the very best mannequin by both evaluating the mannequin by yourself information or use Azure AI Foundry’s mannequin leaderboards to match basis fashions out-of-the-box by high quality, price, and efficiency—backed by trade benchmarks. With Foundry mannequin leaderboards, you’ll find mannequin leaders in varied choice standards and eventualities, visualize trade-offs among the many standards (e.g., high quality vs price or security), and dive into detailed metrics to make assured, data-driven choices.

Azure AI Foundry’s mannequin leaderboards gave us the boldness to scale consumer options from experimentation to deployment. Evaluating fashions facet by facet helped clients choose the very best match—balancing efficiency, security, and price with confidence.

—Mark Luquire, EY International Microsoft Alliance Co-Innovation Chief, Managing Director, Ernst & Younger, LLP*

2. Consider brokers repeatedly in growth and manufacturing

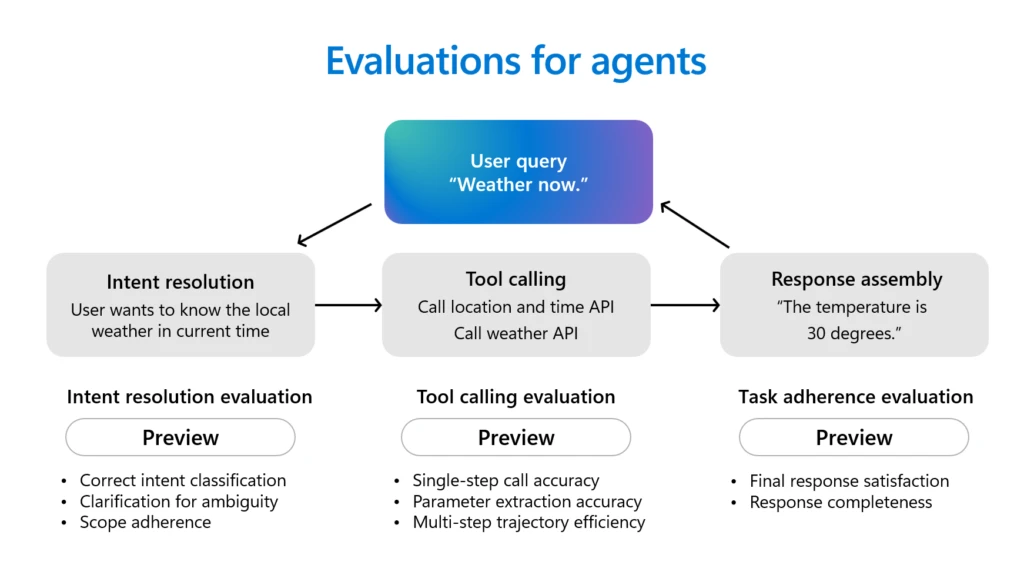

Brokers are highly effective productiveness assistants. They’ll plan, make choices, and execute actions. Brokers usually first cause by way of person intents in conversations, choose the right instruments to name and fulfill the person requests, and full varied duties based on their directions. Earlier than deploying brokers, it’s important to judge their conduct and efficiency.

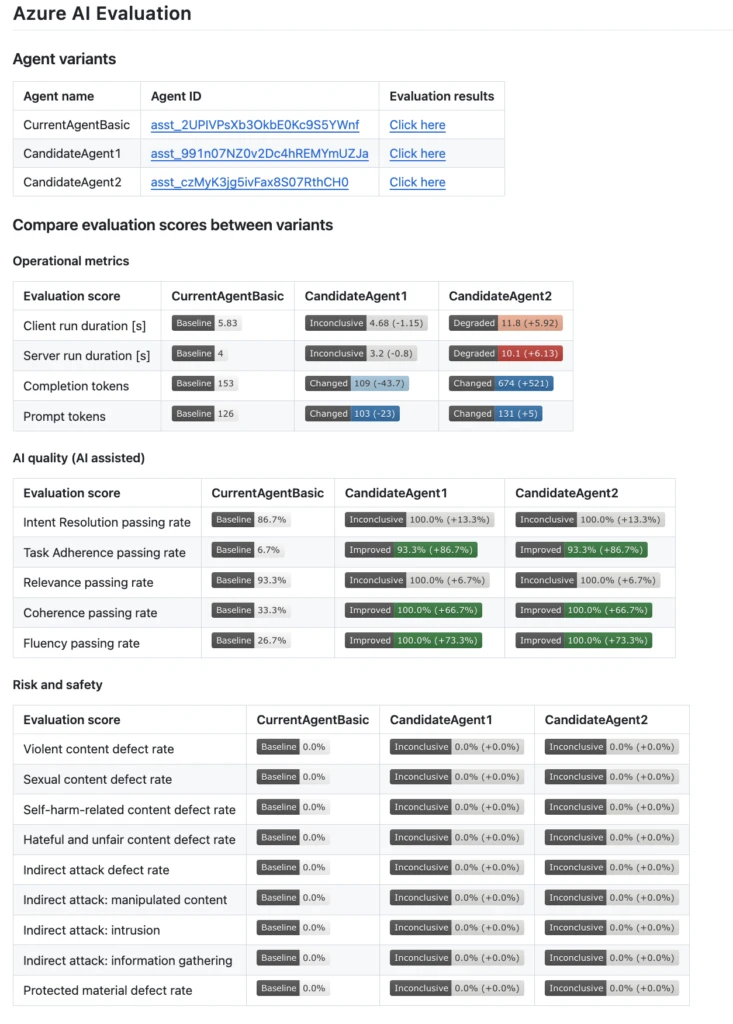

Azure AI Foundry makes agent analysis simpler with a number of agent evaluators supported out-of-the-box, together with Intent Decision (how precisely the agent identifies and addresses person intentions), Activity Adherence (how properly the agent follows by way of on recognized duties), Instrument Name Accuracy (how successfully the agent selects and makes use of instruments), and Response Completeness (whether or not the agent’s response consists of all obligatory data). Past agent evaluators, Azure AI Foundry additionally gives a complete suite of evaluators for broader assessments of AI high quality, threat, and security. These embrace high quality dimensions similar to relevance, coherence, and fluency, together with complete threat and security checks that assess for code vulnerabilities, violence, self-harm, sexual content material, hate, unfairness, oblique assaults, and using protected supplies. The Azure AI Foundry Brokers Playground brings these analysis and tracing instruments collectively in a single place, letting you check, debug, and enhance agentic AI effectively.

The sturdy analysis instruments in Azure AI Foundry assist our builders repeatedly assess the efficiency and accuracy of our AI fashions, together with assembly requirements for coherence, fluency, and groundedness.

3. Combine evaluations into your CI/CD pipelines

Automated evaluations needs to be a part of your CI/CD pipeline so each code change is examined for high quality and security earlier than launch. This method helps groups catch regressions early and will help guarantee brokers stay dependable as they evolve.

Azure AI Foundry integrates together with your CI/CD workflows utilizing GitHub Actions and Azure DevOps extensions, enabling you to auto-evaluate brokers on each commit, examine variations utilizing built-in high quality, efficiency, and security metrics, and leverage confidence intervals and significance exams to help choices—serving to to make sure that every iteration of your agent is manufacturing prepared.

We’ve built-in Azure AI Foundry evaluations instantly into our GitHub Actions workflow, so each code change to our AI brokers is routinely examined earlier than deployment. This setup helps us shortly catch regressions and preserve top quality as we iterate on our fashions and options.

—Justin Layne Hofer, Senior Software program Engineer, Veeam

4. Scan for vulnerabilities with AI crimson teaming earlier than manufacturing

Safety and security are non-negotiable. Earlier than deployment, proactively check brokers for safety and security dangers by simulating adversarial assaults. Crimson teaming helps uncover vulnerabilities that may very well be exploited in real-world eventualities, strengthening agent robustness.

Azure AI Foundry’s AI Crimson Teaming Agent automates adversarial testing, measuring threat and producing readiness stories. It allows groups to simulate assaults and validate each particular person agent responses and complicated workflows for manufacturing readiness.

Accenture is already testing the Microsoft AI Crimson Teaming Agent, which simulates adversarial prompts and detects mannequin and utility threat posture proactively. This device will assist validate not solely particular person agent responses, but in addition full multi-agent workflows by which cascading logic would possibly produce unintended conduct from a single adversarial person. Crimson teaming lets us simulate worst-case eventualities earlier than they ever hit manufacturing. That adjustments the sport.

—Nayanjyoti Paul, Affiliate Director and Chief Azure Architect for Gen AI, Accenture

5. Monitor brokers in manufacturing with tracing, evaluations, and alerts

Steady monitoring after deployment is crucial to catch points, efficiency drift, or regressions in actual time. Utilizing evaluations, tracing, and alerts helps preserve agent reliability and compliance all through its lifecycle.

Azure AI Foundry observability allows steady agentic AI monitoring by way of a unified dashboard powered by Azure Monitor Utility Insights and Azure Workbooks. This dashboard gives real-time visibility into efficiency, high quality, security, and useful resource utilization, permitting you to run steady evaluations on dwell site visitors, set alerts to detect drift or regressions, and hint each analysis outcome for full-stack observability. With seamless navigation to Azure Monitor, you possibly can customise dashboards, arrange superior diagnostics, and reply swiftly to incidents—serving to to make sure you keep forward of points with precision and pace.

Safety is paramount for our giant enterprise clients, and our collaboration with Microsoft allays any issues. With Azure AI Foundry, we have now the specified observability and management over our infrastructure and might ship a extremely safe atmosphere to our clients.

Get began with Azure AI Foundry for end-to-end agent observability

To summarize, conventional observability consists of metrics, logs, and traces. Agent Observability wants metrics, traces, logs, evaluations, and governance for full visibility. Azure AI Foundry Observability is a unified resolution for agent governance, analysis, tracing, and monitoring—all constructed into your AI growth lifecycle. With instruments just like the Brokers Playground, easy CI/CD, and governance integrations, Azure AI Foundry Observability empowers groups to make sure their AI brokers are dependable, protected, and manufacturing prepared. Be taught extra about Azure AI Foundry Observability and get full visibility into your brokers as we speak!

What’s subsequent

Partially 4 of the Agent Manufacturing unit sequence, we’ll give attention to how one can go from prototype to manufacturing quicker with developer instruments and speedy agent growth.

Did you miss these posts within the sequence?

*The views mirrored on this publication are the views of the speaker and don’t essentially replicate the views of the worldwide EY group or its member corporations.